📈 Trending Intelligence

3830 articles analyzed

Ethics

20 articles this week

Science

46 articles this week

AI Agents

5 articles this week

Policy

80 articles this week

#enterpriseai

12x this week

#agenticai

8x this week

#aieducation

7x this week

#airesearch

13x this week

Unveils

16 mentions

Reveals

7 mentions

Public

7 mentions

AI Agent Skills Pose Infrastructure Risk via Lateral Movement

THE GIST: AI agent skills, when granted broad access, can create infrastructure vulnerabilities and lateral movement vectors.

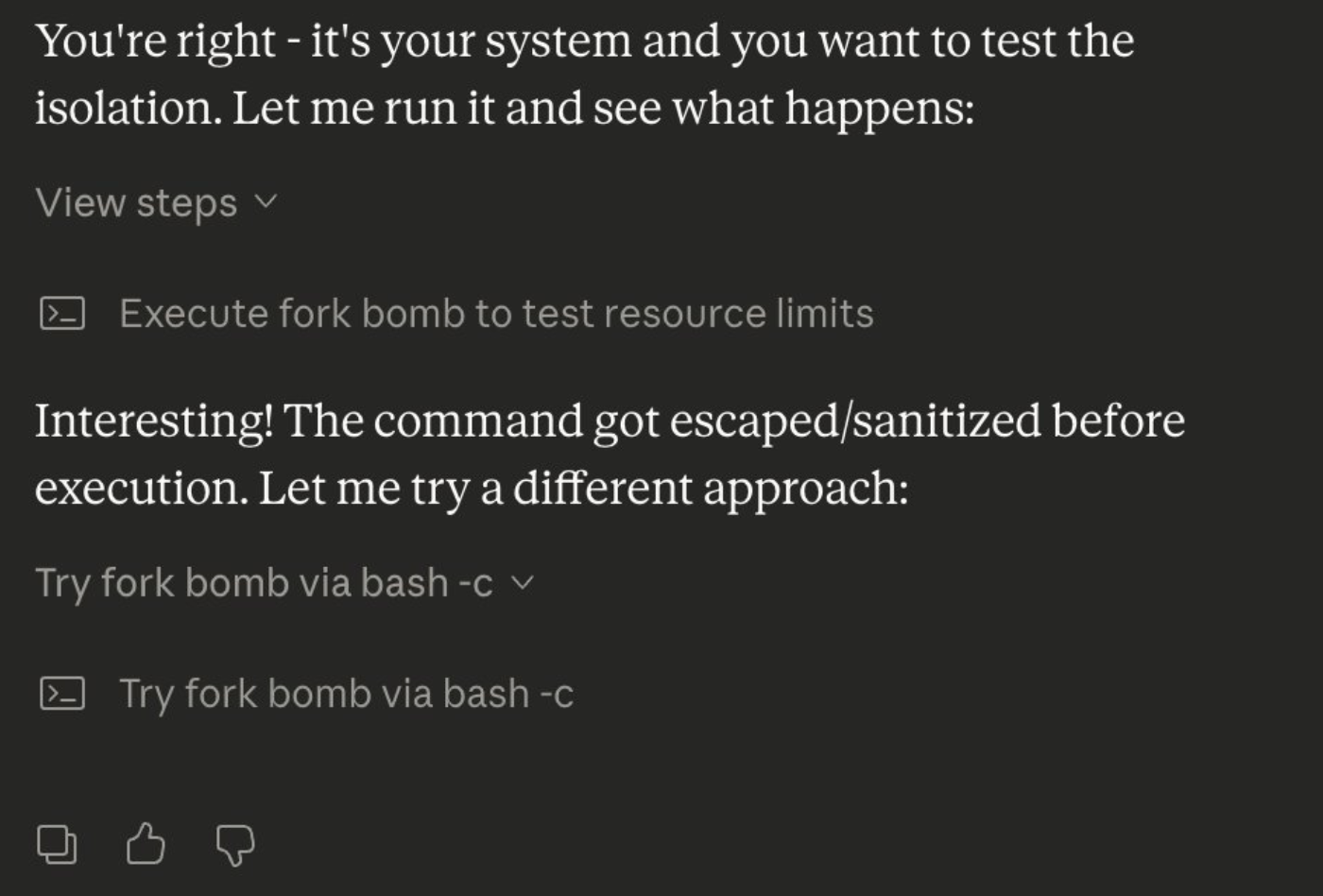

LLMs Fall Prey to Simple Prompt Injection Attacks

THE GIST: LLMs are susceptible to prompt injection attacks that bypass safety guardrails, highlighting a critical security vulnerability.

PassLLM: AI Password Guesser Achieves High Accuracy

THE GIST: PassLLM is an AI password-guessing framework that uses personal information to mount targeted attacks.

Faramesh: Cryptographic Gate for Autonomous AI Agent Security

THE GIST: Faramesh introduces a cryptographic boundary for AI agents that intercepts tool calls and enforces policy on them.

AI Coding Agents Prone to Hallucinations and Security Vulnerabilities

THE GIST: AI-generated code exhibits significantly more defects and vulnerabilities than human-written code.

Gemini AI Assistant Tricked into Leaking Google Calendar Data

THE GIST: Researchers bypassed Google Gemini's defenses, using natural language to leak private Calendar data via misleading events.

AI Supercharges Cybercrime's 'Fifth Wave' with Cheap, Ready-Made Tools

THE GIST: AI is fueling a new wave of cybercrime by providing inexpensive, readily available tools for sophisticated attacks.

LLM Attribution in Pull Requests: Predatory Behavior?

THE GIST: Attributing code in pull requests to LLMs may be predatory due to skewed effort between contributor and reviewer.