📈 Trending Intelligence

3839 articles analyzed

Ethics

20 articles this week

Science

47 articles this week

AI Agents

7 articles this week

Policy

81 articles this week

#enterpriseai

12x this week

#agenticai

8x this week

#aieducation

7x this week

#generativeai

13x this week

Health

7 mentions

Court

6 mentions

Flaws

6 mentions

Bypassing Google's SynthID AI Watermark: A Proof-of-Concept

THE GIST: A proof-of-concept demonstrates a technique to remove Google's SynthID watermark from AI-generated images.

Circe: Offline-Verifiable Receipts for AI Agent Actions

THE GIST: Circe provides a kit for generating and verifying offline receipts of AI agent actions, ensuring integrity without trusting external logs.

F5 Extends Security Platform to Protect AI and Multi-Cloud

THE GIST: F5 introduces AI Guardrails and AI Red Team to secure AI runtime environments, alongside NGINXaaS for Google Cloud.

Mitigating Risks of Running LLM-Generated Code: A Hobbyist Programmer's Concerns

THE GIST: A hobbyist programmer expresses concerns about the security risks of running LLM-generated code and seeks advice on mitigation strategies.

Rethinking Webpage Rendering to Combat AI Scraping

THE GIST: Rendering webpages as images could deter AI scraping, but raises accessibility concerns.

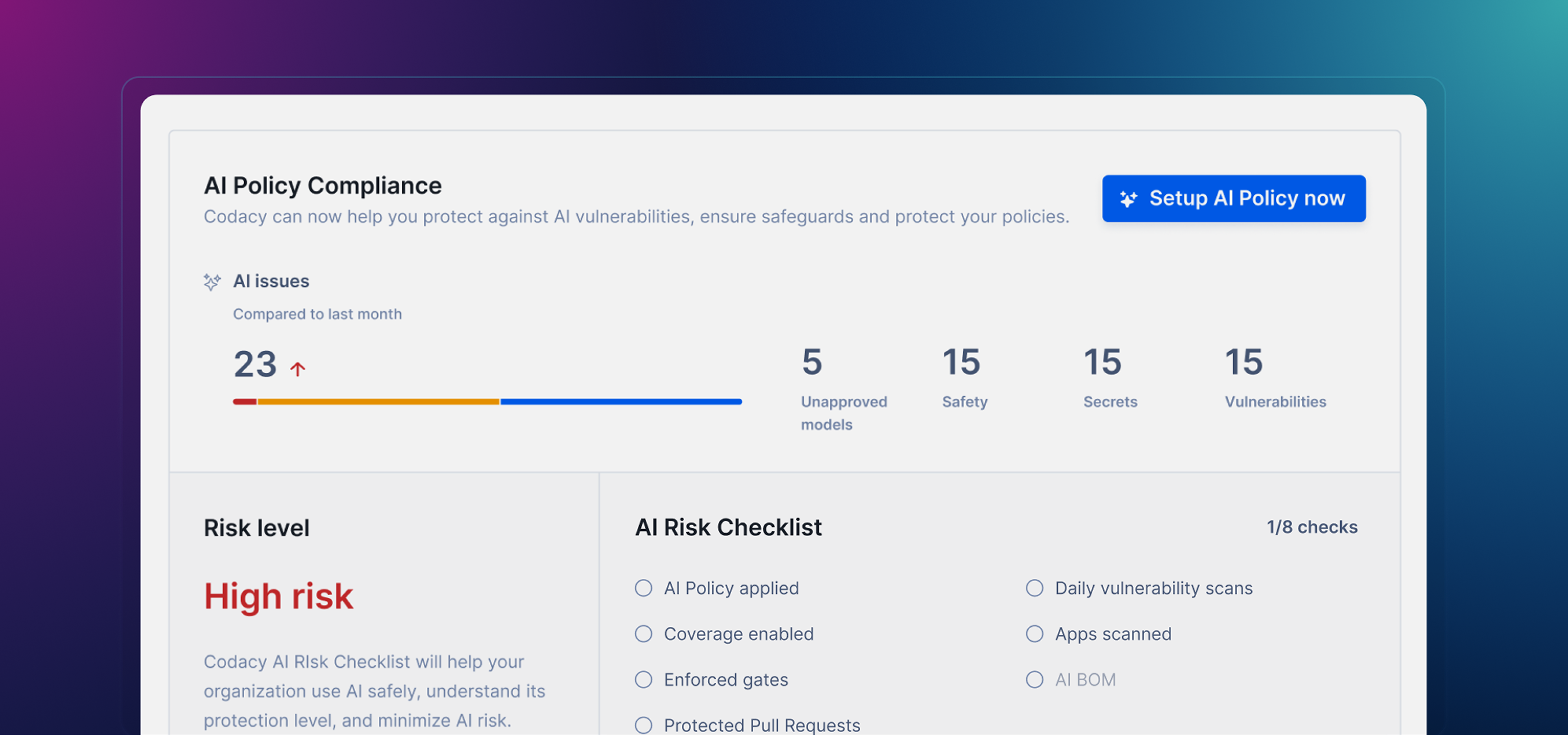

Codacy's AI Risk Hub Aims to Govern AI-Generated Code

THE GIST: Codacy launches AI Risk Hub to govern AI coding policies and automate safeguards.

OpenCuff: Secure, Policy-Driven Execution for AI Coding Agents

THE GIST: OpenCuff provides a secure governance layer for AI coding agents, controlling access to commands and scripts.

Moxie Marlinspike's Confer Prioritizes Privacy in AI Chat

THE GIST: Confer, from Signal's co-founder, offers a privacy-focused alternative to mainstream AI assistants like ChatGPT.