Results for: "Access"

Keyword Search: 9 results

Sandbox AI Agents with Bubblewrap: A Lightweight Security Solution

THE GIST: Bubblewrap offers a lightweight alternative to Docker for sandboxing AI agents like Claude Code, enhancing security.

Pentagon Eyes Integrating Musk's Grok AI into Military Networks

THE GIST: The Pentagon plans to integrate Elon Musk's Grok AI into military networks, despite past controversies.

Signal's Moxie Marlinspike Aims to Revolutionize AI Privacy with Confer

THE GIST: Moxie Marlinspike, Signal's creator, introduces Confer, an open-source AI assistant that protects user data through encryption and verifiable software.

M5Stack Launches StackChan: Open-Source AI Desktop Robot via Crowdfunding

THE GIST: M5Stack's StackChan, an open-source AI desktop robot based on the ESP32-S3, is now available on Kickstarter.

AI Scrapers Force Websites to Implement Bot Protection Measures

THE GIST: Websites are implementing bot protection measures like Anubis to combat aggressive AI scraping that causes downtime.

Yolo-Cage: Hardened Kubernetes Sandbox for AI Coding Agents

THE GIST: Yolo-Cage is a Kubernetes sandbox that isolates AI coding agents to prevent secret exfiltration and unauthorized code modification.

AI Comments: A New Convention for Human-AI Code Collaboration

THE GIST: A proposed convention, 'AI Comments' (/*[ ... ]*/), aims to improve human-AI collaboration in codebases by highlighting intent and constraints.

India Considers AI Training Data Royalties: A Global Shift?

THE GIST: India's draft proposal could require AI firms to pay royalties for using copyrighted Indian data to train their models.

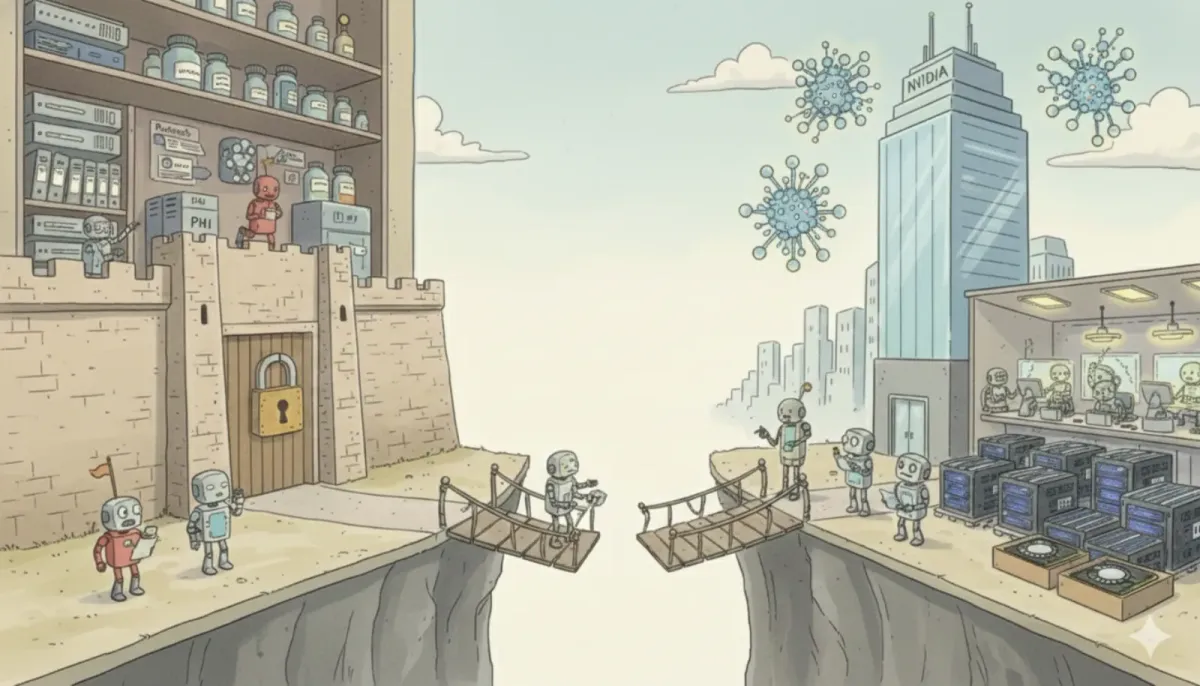

Nvidia & Eli Lilly's $1B AI Drug Lab Faces Data Access Hurdles

THE GIST: Nvidia and Eli Lilly's $1B AI drug discovery lab faces challenges in accessing and using sensitive pharmaceutical data.