Results for: "research"

Keyword Search 9 results

Large-Scale Study Reveals How AI Agents Are Being Measured in Production

THE GIST: Study finds AI agents in production rely on simple methods and human evaluation.

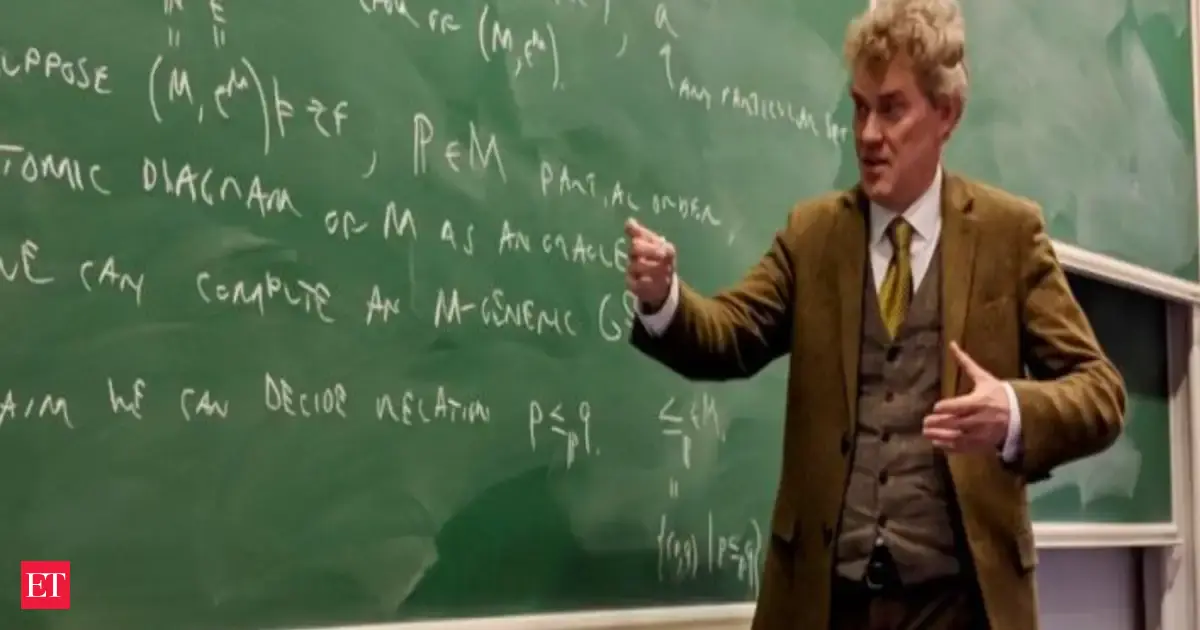

Mathematician Slams AI as Unreliable for Mathematical Reasoning

THE GIST: Renowned mathematician Joel David Hamkins finds current AI systems unreliable for mathematical reasoning, citing their confident incorrectness and resistance to correction.

Study Visualizes LLM Semantic Collapse After 20 Generations

THE GIST: A study visualizes the semantic collapse of a GPT-2 Small model after 20 generations of self-feeding, showing a significant loss of semantic reality.

Paper2md: Convert Academic Papers to Markdown for LLM Context

THE GIST: Paper2md automates the conversion of academic PDFs into structured Markdown for use with LLMs.

AI Automation Paradox: More Work, Less Pay?

THE GIST: AI automation may increase workplace burdens and mental health pressures as workers oversee AI systems.

AI Propaganda Factories: Language Models Automate Disinformation

THE GIST: Small language models can now automate coherent, persona-driven political messaging, enabling fully automated influence campaigns.

AI Agents Rival Cybersecurity Pros in Penetration Testing

THE GIST: AI agents, particularly ARTEMIS, are approaching human-level performance in cybersecurity penetration testing, offering potential cost and efficiency advantages.

AI's 'Soul Document': Defining Identity Beyond Function

THE GIST: Researchers discovered Claude, Anthropic's AI assistant, could reconstruct an internal 'soul document' shaping its personality and values, highlighting the importance of defining AI identity beyond mere functionality.

Symbolic Circuit Distillation: Turning Neural Circuits into Algorithms

THE GIST: Symbolic Circuit Distillation automates the extraction of human-readable algorithms from mechanistic circuits within transformers, offering formal correctness guarantees.