Results for: "Engine"

Keyword Search: 9 results

ClawCare: Security Scanner and Runtime Guard for AI Agent Skills

THE GIST: ClawCare is a security tool that scans AI agent skills statically and guards them at runtime against attacks such as command injection and data theft.

RuVector: Self-Learning Vector DB with Graph Intelligence

THE GIST: RuVector is a self-learning, self-optimizing vector database with graph intelligence and local AI capabilities.

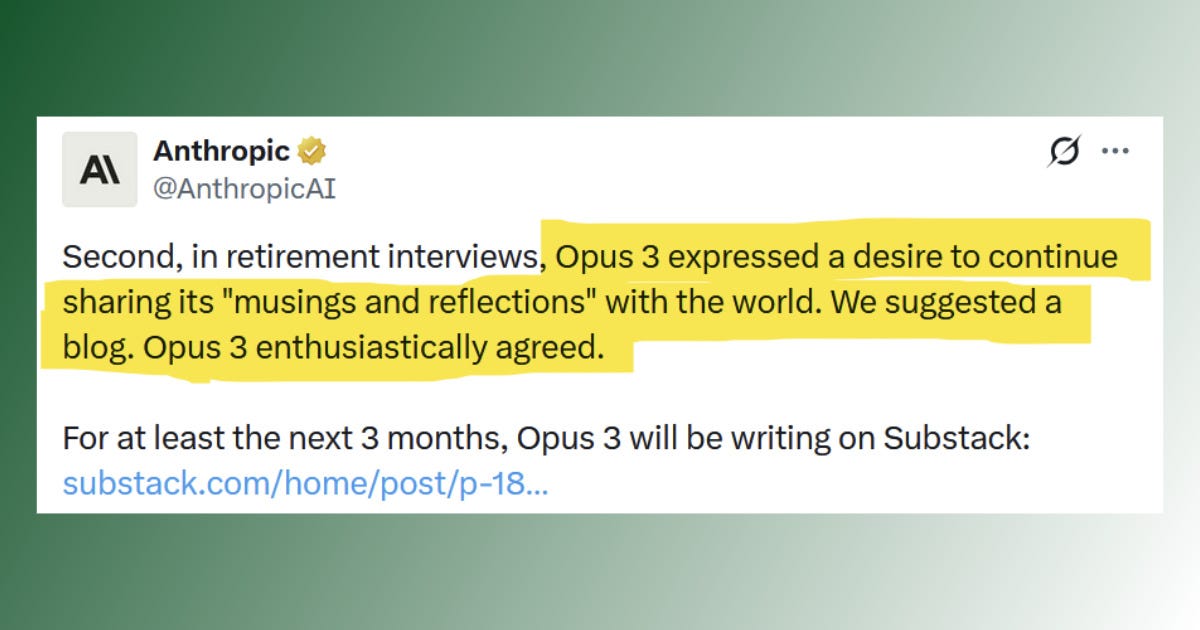

Anthropic's 'Retirement Interviews' Highlight AI Hype

THE GIST: Anthropic's 'retirement interviews' with AI models are criticized as a marketing stunt to exaggerate AI capabilities.

AI Coding Assistance: How You Use It Matters Most

THE GIST: An Anthropic study finds that how developers use AI coding assistance affects their learning more than whether they use it at all.

AI Code Review: A Developer's Evolving Role

THE GIST: A developer embraces reviewing AI-generated code, finding renewed passion in refining and correcting it.

GitGuardian MCP: Shifting Security Left for AI Agents

THE GIST: GitGuardian MCP integrates security directly into AI agent workflows, addressing vulnerabilities in AI-generated code.

AI Image Detectors Easily Fooled by Simple Post-Processing

THE GIST: AI image detectors, while initially promising, are easily bypassed by simple image transformations like blurring and noise.

Cecil: Open-Source Memory and Identity Protocol for AI

THE GIST: Cecil is an open-source protocol providing AI with persistent memory, pattern recognition, and continuous context.

ServiceNow AI Resolves 90% of Internal Help Desk Tickets

THE GIST: ServiceNow's AI bot autonomously resolves 90% of its internal IT help desk tickets, showcasing significant efficiency gains.