Results for: "GitHub"

Keyword search: 9 results

Skild: NPM for AI Agent Skills

THE GIST: Skild is presented as an NPM-like tool for installing, managing, and publishing AI agent skills across multiple platforms.

AgentDiscover: Multi-Layer AI Agent Detection Tool

THE GIST: AgentDiscover Scanner detects AI agents across three layers (code, network, and Kubernetes) to provide comprehensive security coverage.

AI Collaboration: Developer Grants GitHub Account to AI Bot

THE GIST: A developer has given an AI bot its own GitHub account for streamlined collaboration.

AI Code Generates More Problems Than It Solves, Study Finds

THE GIST: AI-assisted code generation increases the number of pull requests but also introduces more defects and logic errors.

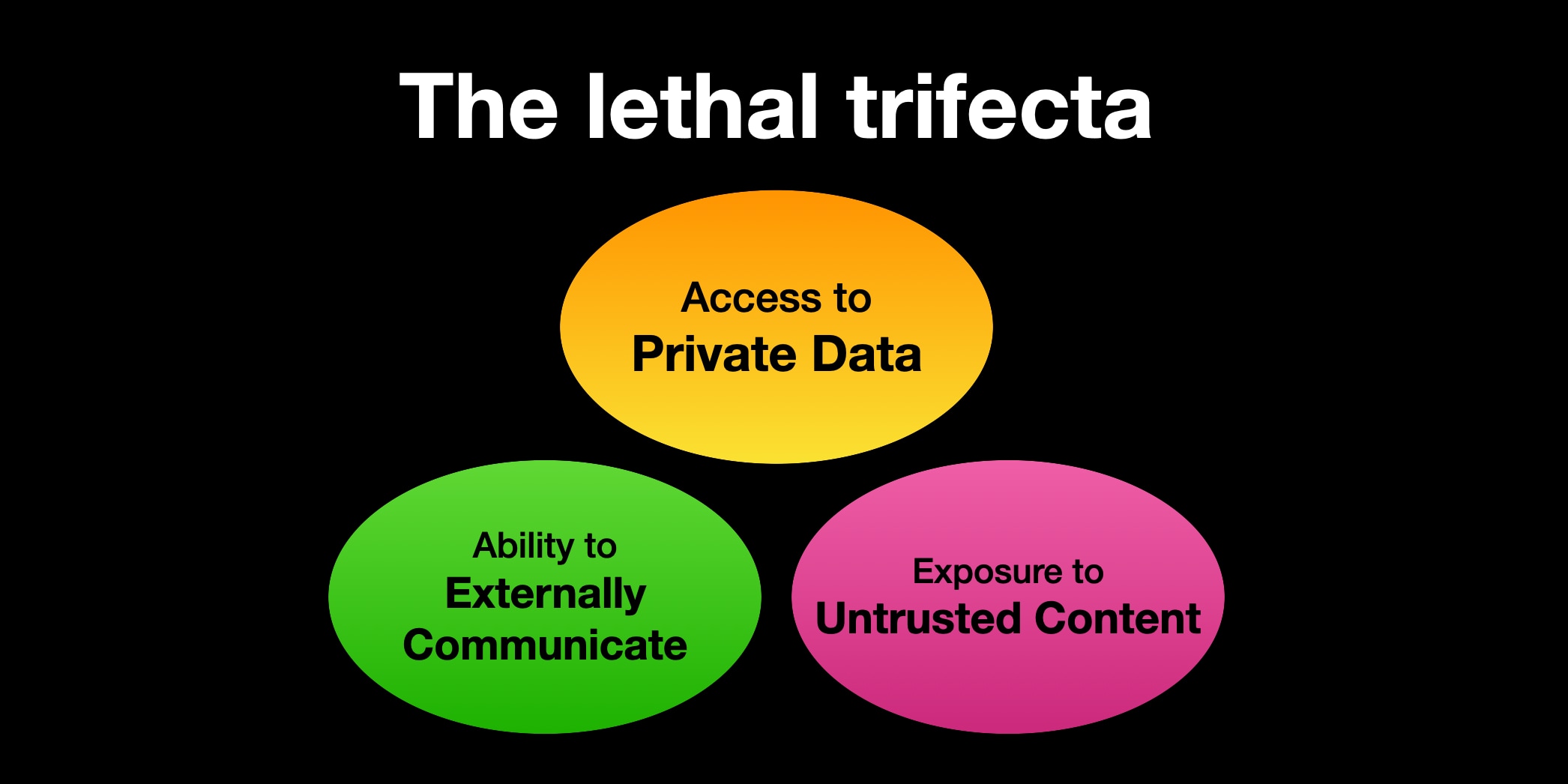

AI Agent Security: The Lethal Trifecta of Risks

THE GIST: Combining private data access, untrusted content exposure, and external communication in AI agents creates a significant security vulnerability.
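The trifecta is a conjunction: the risk arises when one agent holds all three capabilities at once. The usual reasoning is that instructions hidden in untrusted content can steer the agent into reading private data and sending it out. The minimal sketch below shows that check. The `AgentCapabilities` class and `has_lethal_trifecta` function are hypothetical names used for illustration only, not part of any tool mentioned in these results.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentCapabilities:
    """Hypothetical summary of what an AI agent is permitted to do."""
    reads_private_data: bool         # e.g. access to mail, files, internal databases
    ingests_untrusted_content: bool  # e.g. web pages, inbound messages, third-party docs
    communicates_externally: bool    # e.g. HTTP requests, sending email, posting comments


def has_lethal_trifecta(caps: AgentCapabilities) -> bool:
    """Return True only when all three risk factors are present together.

    Removing any one factor breaks the chain: injected instructions in
    untrusted content can no longer both reach private data and send it out.
    """
    return (
        caps.reads_private_data
        and caps.ingests_untrusted_content
        and caps.communicates_externally
    )


if __name__ == "__main__":
    browsing_assistant = AgentCapabilities(True, True, True)
    offline_summarizer = AgentCapabilities(True, True, False)
    print(has_lethal_trifecta(browsing_assistant))  # True
    print(has_lethal_trifecta(offline_summarizer))  # False
```

Modeling the check as a strict `and` reflects the framing above: a mitigation only needs to remove one leg of the trifecta, such as blocking outbound communication, to defuse the combination.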

Crafting Effective Specifications for AI Agents in 2026

THE GIST: Effective AI agent specs require clarity, conciseness, and iterative refinement, guiding AI without overwhelming it.

Developer Script Aims to Remove AI Features from Windows

THE GIST: A developer created a PowerShell script to remove AI features from Windows, citing privacy, security, and user experience concerns.

Veritensor: Open-Source AI Model Security Scanner

THE GIST: Veritensor is an open-source tool for scanning AI models for malware, tampering, and license violations.

Token Counter CLI for LLMs: `tc` Utility

THE GIST: `tc` is a command-line tool that counts LLM tokens, much as `wc` counts words.