Results for: "Reveals"

Keyword Search: 9 results

AI Job Displacement: American Workers' Adaptability Assessed

THE GIST: A study finds a positive correlation between AI exposure and adaptive capacity among American workers, but identifies vulnerable pockets.

China's AI Ecosystem Mapped: Public Registry Reveals Thousands of Companies

THE GIST: China's public algorithm registry offers a detailed view of its booming AI ecosystem, tracking thousands of companies.

AI Code Assistants Still Degrade Code Quality in 2025, CMU Study Finds

THE GIST: A CMU study reveals that AI coding assistants continue to negatively impact code quality through mid-2025.

AI Fuels Research Output, Narrows Scientific Focus

THE GIST: AI boosts individual researcher productivity but narrows the scope of scientific inquiry, leading to less original research.

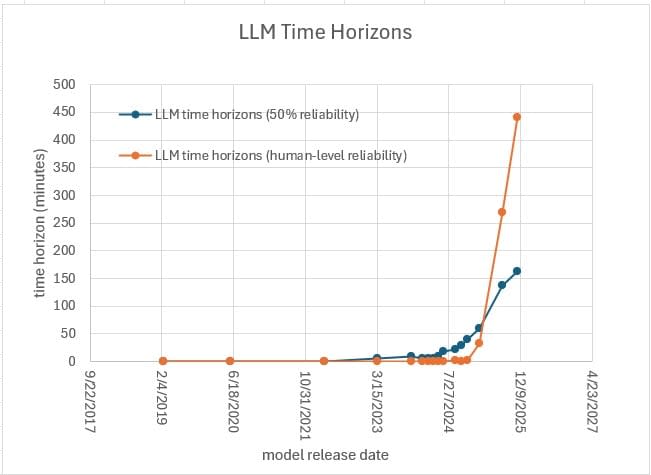

Analysis Suggests METR Underestimates LLM Time Horizons

THE GIST: Analysis suggests METR's benchmarks may underestimate LLM time horizons due to flawed human baselines.

Sam Altman's Perspective on AI Model Power: A Critical Look

THE GIST: Altman's view on 'power' in LLMs is challenged by gpt-oss-120b's poor performance on real-world conversational benchmarks.

AI Coding Assistant Cursor Boosts Velocity, Raises Code Complexity

THE GIST: A study reveals Cursor AI boosts coding velocity but increases code complexity and static analysis warnings.

AI Content Detection: $5 GPTs Rival $300 SaaS Tools

THE GIST: A 90-day test reveals that ChatGPT Custom GPTs ($5/mo) perform nearly as well as standalone AI detection/humanization SaaS tools costing $50-300/mo.

AI Coding Success Hinges on Steering, Anchoring, and Persistence

THE GIST: Analysis of 4.6k AI coding threads reveals that 'steering' (correcting the AI), 'anchoring' (providing specific context), and persistence are key to successful collaboration.