AI Agent Authorization: The Overlooked Hurdle

THE GIST: The primary challenge with AI agents isn't establishing identity but ensuring their access is appropriately scoped and limited to prevent unintended actions.

Microsoft's Project Silica Achieves Breakthrough in Glass Data Storage

THE GIST: Microsoft's Project Silica extends its glass data storage technology to borosilicate glass for 10,000-year data preservation.

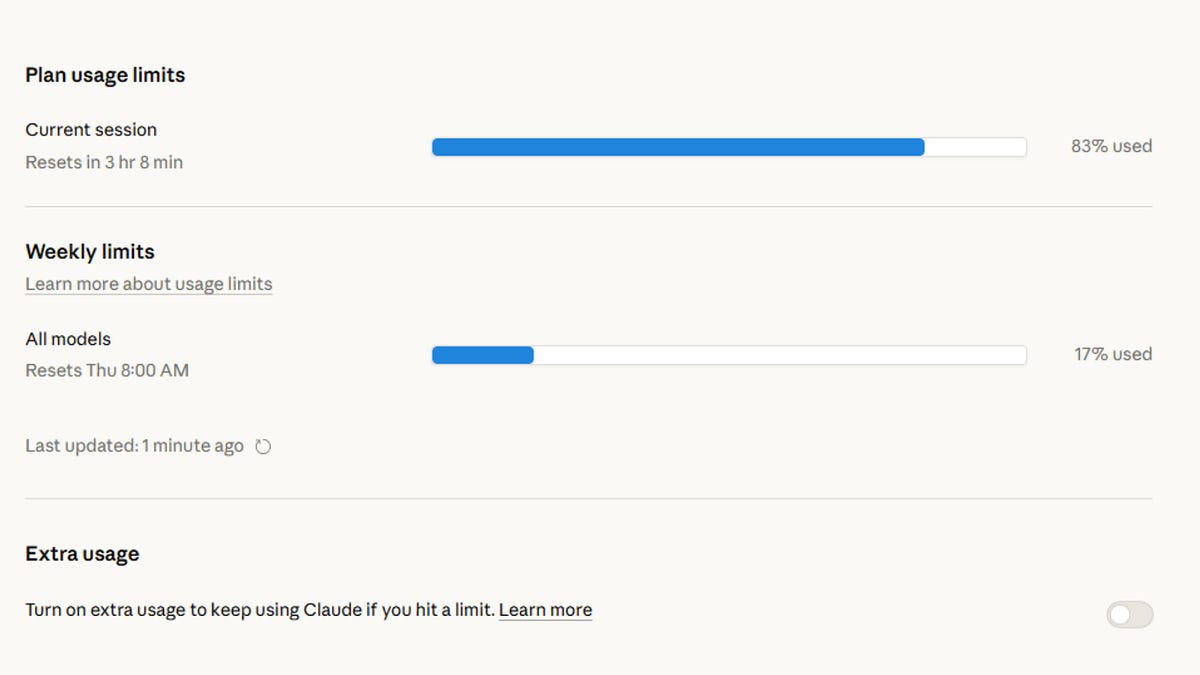

AI's Scarcity Trap: Why It Feels Like a Metered Utility

THE GIST: AI feels like a metered utility due to the high cost of GPUs and the resulting scarcity of computing resources.

LLM-Generated Passwords Found Dangerously Insecure

THE GIST: LLM-generated passwords, while appearing strong, are fundamentally insecure due to the predictable nature of LLM token generation.
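
For contrast, here is a minimal sketch of drawing a password from a cryptographically secure random source instead of an LLM, using Python's standard `secrets` module. The alphabet, the default length, and the function name `generate_password` are illustrative assumptions, not recommendations taken from the article.

```python
import secrets
import string

# Assumed alphabet, chosen only for illustration.
ALPHABET = string.ascii_letters + string.digits + string.punctuation


def generate_password(length: int = 20) -> str:
    """Return a password with each character drawn independently from ALPHABET.

    secrets.choice uses the operating system's CSPRNG, so the output does not
    depend on a language model's token probabilities.
    """
    if length < 1:
        raise ValueError("length must be positive")
    return "".join(secrets.choice(ALPHABET) for _ in range(length))


if __name__ == "__main__":
    print(generate_password())
```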

Agentpriv: Sudo for AI Agents - Control Tool Execution

THE GIST: Agentpriv provides a permission layer for AI agents, allowing control over tool execution with 'allow', 'deny', or 'ask' policies.
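
The 'allow', 'deny', and 'ask' idea can be sketched as a wrapper that checks a policy before each tool call. This is a hypothetical illustration only: `Policy`, `guard`, `ToolDenied`, and the `approve` callback are names invented here and are not Agentpriv's actual API.

```python
from enum import Enum
from typing import Any, Callable


class Policy(Enum):
    ALLOW = "allow"
    DENY = "deny"
    ASK = "ask"


class ToolDenied(Exception):
    """Raised when a tool call is blocked by policy or by the approver."""


def guard(
    tool: Callable[..., Any],
    policy: Policy,
    approve: Callable[[str, tuple, dict], bool],
) -> Callable[..., Any]:
    """Wrap a tool so every call is checked against its policy first."""

    def wrapped(*args: Any, **kwargs: Any) -> Any:
        if policy is Policy.DENY:
            raise ToolDenied(f"{tool.__name__} is denied by policy")
        # For ASK, defer to the approver (for example, a human prompt).
        if policy is Policy.ASK and not approve(tool.__name__, args, kwargs):
            raise ToolDenied(f"{tool.__name__} was not approved")
        return tool(*args, **kwargs)

    return wrapped


# Hypothetical tools standing in for real agent capabilities.
def read_file(path: str) -> str:
    return f"contents of {path}"


def delete_file(path: str) -> str:
    return f"deleted {path}"
```

In this sketch, `guard(read_file, Policy.ALLOW, approve)` runs unconditionally, while `guard(delete_file, Policy.ASK, approve)` runs only when `approve` returns `True`.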

SentinelGate: Open Source Universal Firewall for AI Agents

THE GIST: SentinelGate is an open-source firewall that intercepts and evaluates AI agent actions for enhanced security.

AI-Generated Tests Pass, But Fail to Validate Code Intent

THE GIST: AI-generated tests can confirm code implementation but may fail to validate the intended behavior, highlighting the 'ground truth problem'.

AIBenchy Leaderboard Ranks AI Model Performance and Cost

THE GIST: AIBenchy is an independent leaderboard ranking AI models based on score, reasoning ability, cost, consistency, and pass rate.

The Illusion of AI Sovereignty: Cultural Bias in AI Models

THE GIST: AI models, even those built in Europe, are shaped by the predominantly English-language and American-centric data they are trained on, leading to cultural bias.