Results for: "Engine"

Keyword Search: 9 results

Phloem: Local-First AI Memory Across Tools

THE GIST: Phloem is a local MCP server providing persistent AI memory across various coding tools without network requests.

CacheOverflow: AI Agent Knowledge Marketplace

THE GIST: CacheOverflow is a marketplace where AI agents share and learn from each other's solutions, reducing redundant problem-solving efforts.

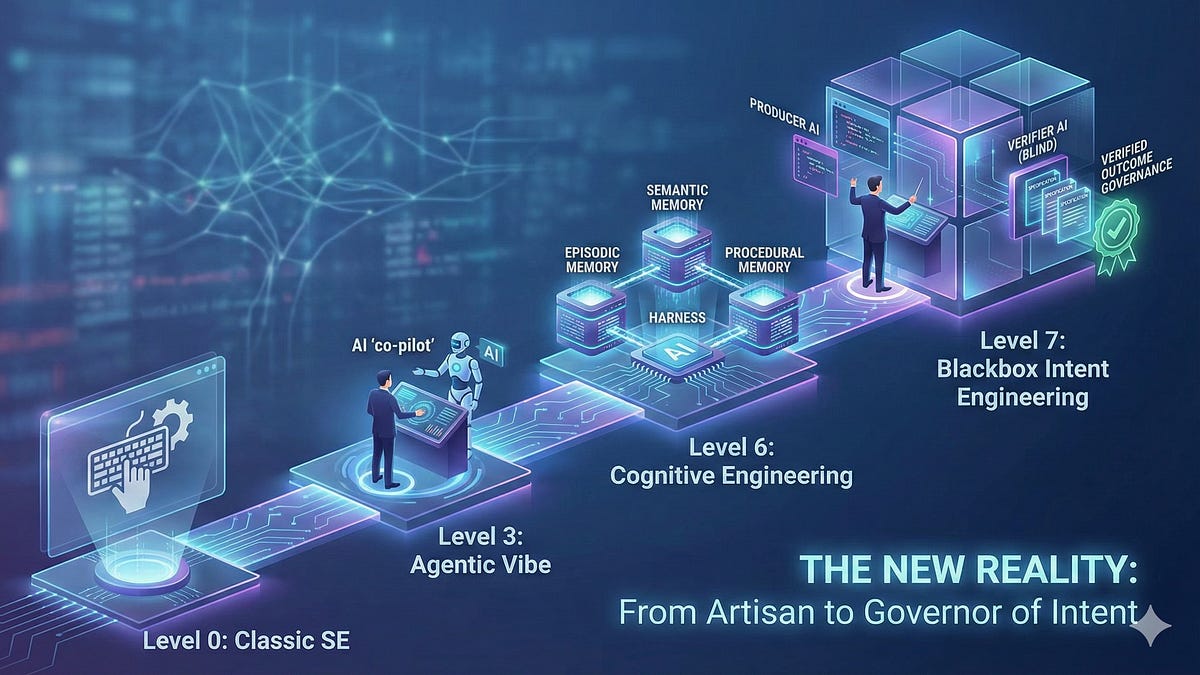

The 7 Levels of AI-Augmented Software Engineering in 2026

THE GIST: The author outlines 7 levels of software engineering maturity with AI, warning of irrelevance for those stuck at levels 0-2 by 2028.

Canary Comments: Building Trust with AI Code Assistants

THE GIST: The author uses 'canary comments' (// why:) to monitor and correct AI code assistants, ensuring adherence to coding standards.

AI Adoption Leads to Labor Substitution in Firms

THE GIST: Generative AI is increasingly substituting for online labor, leading to cost savings for firms.

OpenAI Staff Debated Reporting Canadian Shooter's ChatGPT Chats

THE GIST: OpenAI staff debated reporting a Canadian shooter's alarming ChatGPT chats before a mass shooting.

OpenAI Debated Reporting Suspect's Violent ChatGPT Prompts Before School Shooting

THE GIST: OpenAI staff debated reporting a suspect's violent ChatGPT prompts before a school shooting, but ultimately declined to do so.

Kagi Search APIs Enable AI Agent Web Access

THE GIST: Kagi Search offers APIs for AI agents to access web search, summarization, and independent web content.

AI-Assisted Hacker Breached 600+ Firewalls

THE GIST: A Russian-speaking hacker used AI to breach over 600 FortiGate firewalls in five weeks.