MAKO: Open Protocol Reduces LLM Token Consumption by 93% for Web Content

THE GIST: MAKO is an open protocol that serves LLMs a structured, token-efficient version of web pages, cutting token consumption by 93%.

AI Safety and Corporate Power Concerns Raised at UN Security Council

THE GIST: Jack Clark's UN Security Council remarks emphasize AI safety challenges and the concentration of AI development power in the private sector.

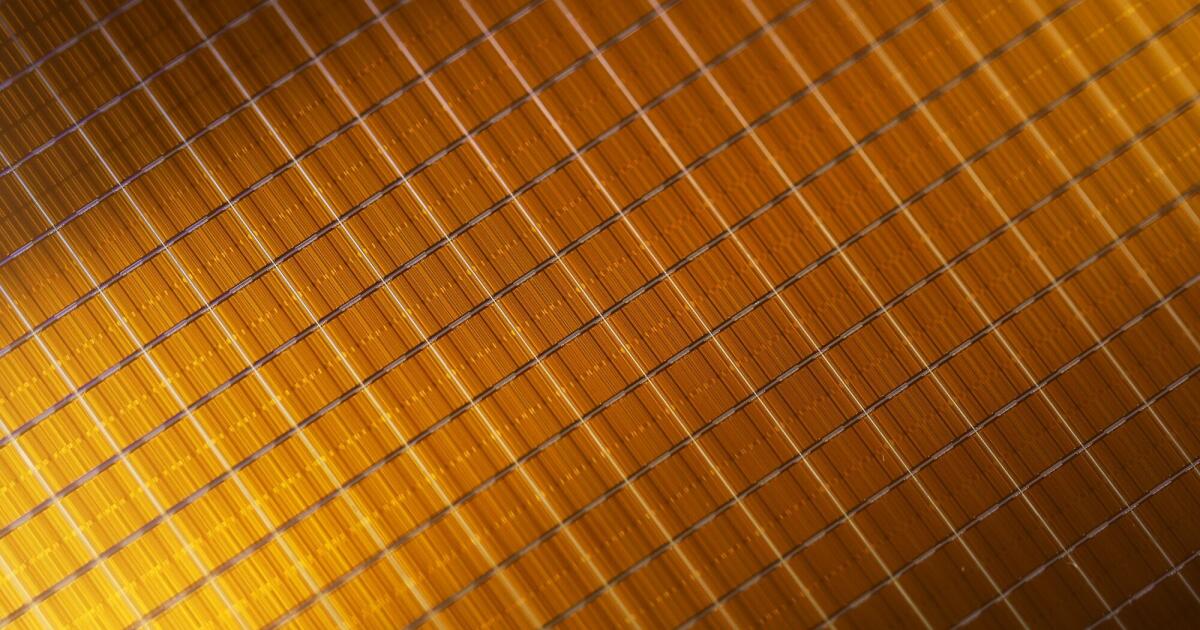

AI Giants Hoarding Memory Chips, Driving Hyperinflation

THE GIST: AI companies' demand for memory chips is causing shortages and pushing prices up at hyperinflation-like rates, with knock-on effects for consumer electronics.

AI System Vulnerability: Developer Breaches Own System in Minutes

THE GIST: A developer successfully breached their own AI workflow in minutes, highlighting a critical lack of security considerations in AI agent system design.

The AI Trilemma: Governance Challenges and Economic Realities

THE GIST: AI governance faces challenges due to economic incentives, international competition, and the complexity of potential harms.

AI Model Efficiency Hinges on Memory Management

THE GIST: Efficient memory management is becoming crucial for AI model performance, impacting costs and query efficiency.

MCP Codebase Index Reduces AI Token Usage by 87% for Code Navigation

THE GIST: MCP Codebase Index parses codebases into structural metadata, cutting token usage by 87% for AI-assisted code navigation.
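
To make "structural metadata" concrete, here is a minimal sketch of the general idea, assuming a Python codebase and using only the standard-library `ast` module. It is not the project's actual implementation: the function name `index_source`, the record fields, and the `SAMPLE` source are all hypothetical, chosen for illustration. The point is that an assistant can consult a compact list of names, arguments, and line numbers instead of reading whole files.

```python
import ast


def index_source(source: str) -> list[dict]:
    """Return one compact metadata record per function and class in `source`.

    Hypothetical record layout (an assumption for this sketch):
    kind, name, args, line.
    """
    tree = ast.parse(source)
    records = []
    for node in ast.walk(tree):
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            records.append({
                "kind": "function",
                "name": node.name,
                # Positional parameter names only; enough for navigation.
                "args": [a.arg for a in node.args.args],
                "line": node.lineno,
            })
        elif isinstance(node, ast.ClassDef):
            records.append({
                "kind": "class",
                "name": node.name,
                "args": [],
                "line": node.lineno,
            })
    # ast.walk is breadth-first, so sort by line to restore source order.
    return sorted(records, key=lambda r: r["line"])


# Hypothetical sample file contents used to exercise the indexer.
SAMPLE = '''
class Cache:
    def get(self, key):
        return None

def load(path, mode):
    return open(path, mode).read()
'''

if __name__ == "__main__":
    for record in index_source(SAMPLE):
        print(record)
```

Each record is a few dozen characters regardless of how long the function body is, which is where the savings in a scheme like this would come from. The actual reduction depends on the codebase and the queries.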

Mumpu: Middleware Adds Long-Term Memory to LLM Agents

THE GIST: Mumpu is middleware that gives any LLM application long-term memory by extracting knowledge, building connections, and injecting relevant context.

NVIDIA's Nemotron 2 Nano 9B Japanese Achieves SOTA Performance in SLMs

THE GIST: NVIDIA releases Nemotron-Nano-9B-v2-Japanese, a small language model that achieves state-of-the-art performance in Japanese language understanding and agent capabilities.