AI-Generated Code: 13 Lessons After One Year of Full Automation

THE GIST: An engineer shares 13 lessons learned from a year of 100% AI-generated code, emphasizing the importance of initial setup and continuous monitoring.

Anthropic Eyes $20B Funding Round Amidst AI Race

THE GIST: Anthropic is reportedly finalizing a $20 billion funding round, signaling intense competition at the AI frontier.

Study: AI Chatbots Offer 'Dangerous' Medical Advice

THE GIST: A University of Oxford study reveals AI chatbots provide inaccurate and inconsistent medical advice, posing risks to users.

LLMs Simulate Societies of Thought for Enhanced Reasoning

THE GIST: Google research suggests LLMs simulate multiple personalities to improve reasoning and problem-solving.

AI Coding Agents: Prioritize Understanding Over Blind Generation

THE GIST: Effective AI coding requires developers to deeply understand the task before using agents for implementation.

AI-Powered Satellites Could Replace Nuclear Treaties

THE GIST: AI and satellites could monitor nuclear weapons in the absence of traditional treaties.

NanoSLG: Multi-GPU LLM Server Achieves 5x Speedup

THE GIST: NanoSLG is a lightweight LLM inference server supporting pipeline, tensor, and hybrid parallelism, achieving significant throughput improvements.

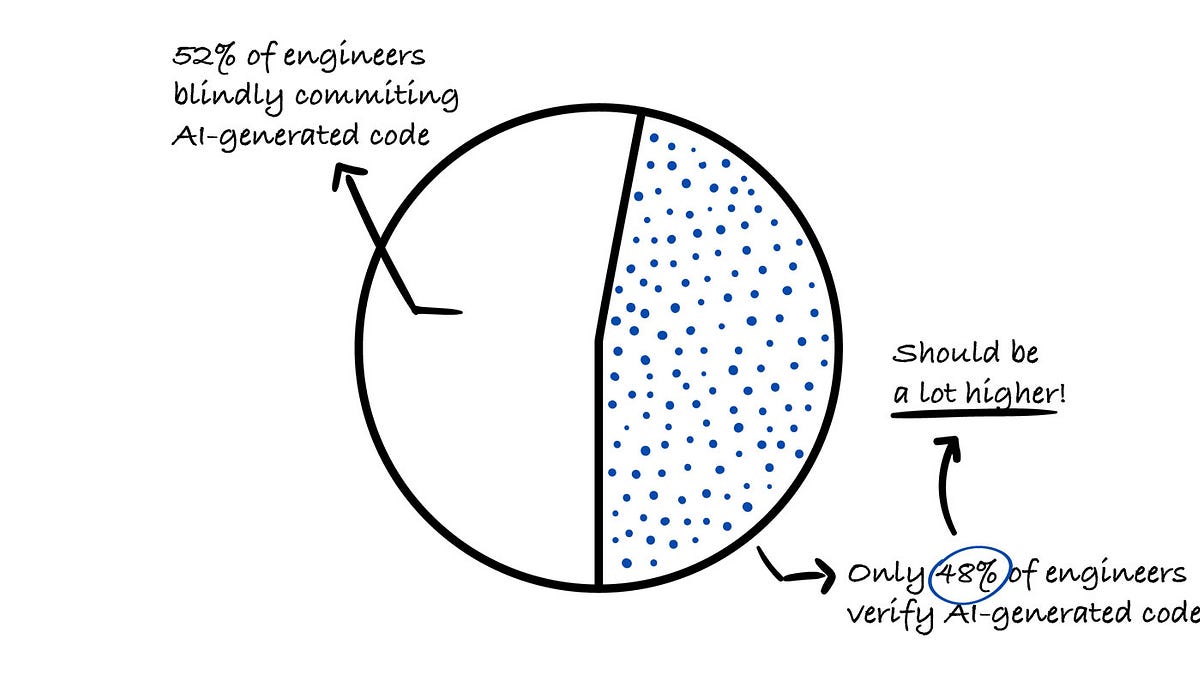

Engineers Show Alarming Lack of Verification Despite AI Trust Issues

THE GIST: A recent survey reveals that 96% of engineers don't fully trust AI-generated code, yet only 48% verify its accuracy.

PaperBanana Automates Academic Illustration for AI Research

THE GIST: PaperBanana is an agentic framework automating publication-ready academic illustrations using VLMs and image generation, benchmarked against NeurIPS 2025 publications.