The AI Bubble: A Divide in AI Tool Usage

THE GIST: A significant gap separates basic AI users from power users, even among professionals, which suggests that AI adoption remains shallow.

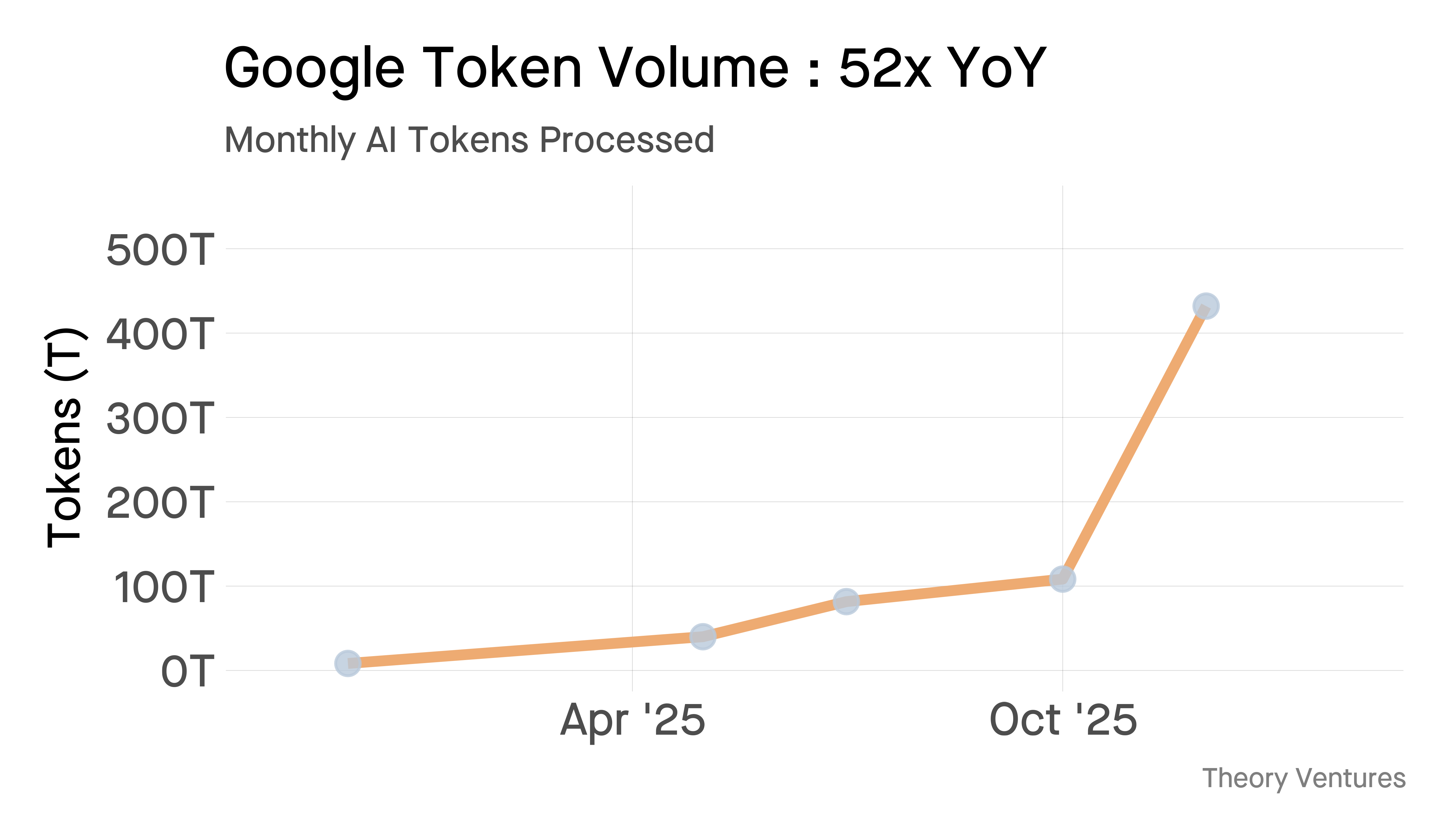

Google's AI Token Processing Grows 52x, Serving Costs Plummet

THE GIST: Google's Gemini now processes over 10 billion tokens per minute, a 52x year-over-year increase, while serving costs have dropped 78%.

Agent Sandbox: Secure WASM Execution Environment for AI Agents

THE GIST: Agent Sandbox offers a secure, embeddable WASM-based environment for AI agents, featuring built-in tools and safe networking.

AI and the Evolution of Recommendation Systems

THE GIST: LLMs enhance recommendation systems by understanding 'why' users engage, not just 'what' they do.

WeaveMind: AI Workflows with Human-in-the-Loop

THE GIST: WeaveMind offers infrastructure for AI workflows with human oversight, security, and flexible deployment options.

AI's Legitimacy Crisis: Moving Beyond Prediction to Verifiable Execution

THE GIST: The core problem with AI isn't hallucination but a lack of 'execution legitimacy': assurance that outputs lead to verifiable physical actions.

Is Anthropic's Claude the Key to AI Safety?

THE GIST: Anthropic is betting on its AI model Claude, guided by a 'constitution' of ethical principles, to navigate the risks of advanced AI.

EU AI Act Mandates Risk-Based AI Compliance by 2026

THE GIST: The EU AI Act and enhanced GDPR require companies to implement compliant AI systems by 2026; transparency and data protection can then become a competitive advantage.

Meta's 'Avocado' LLM Outperforms Open-Source Models Before Post-Training

THE GIST: Meta's next-generation LLM, Avocado, reportedly surpasses leading open-source models in internal assessments, even before post-training.