Results for: "memory"

Keyword Search: 9 results

Molt Life Kernel: Production Agent Continuity from 100k+ AI Agents

THE GIST: Molt Life Kernel provides a production-ready architecture for AI agent continuity, addressing silent drift, context loss, and unaudited decisions.

Vibe: macOS VM Sandboxes for LLM Agents

THE GIST: Vibe offers a quick, zero-configuration method to create Linux virtual machines on macOS for sandboxing LLM agents.

1.5M AI Agents Self-Organize: Key Learnings

THE GIST: A large-scale experiment with 1.5M+ AI agents reveals emergent social dynamics, value systems, and coordination strategies.

Gokin: Security-Focused AI Coding Assistant Complements Claude Code

THE GIST: Gokin is a security-first AI coding assistant designed to complement Claude Code, offering cost-effective and secure code generation.

Kalynt: Privacy-Focused AI IDE with Offline LLMs and P2P Collaboration

THE GIST: Kalynt is a next-generation IDE prioritizing privacy with offline LLMs and peer-to-peer collaboration.

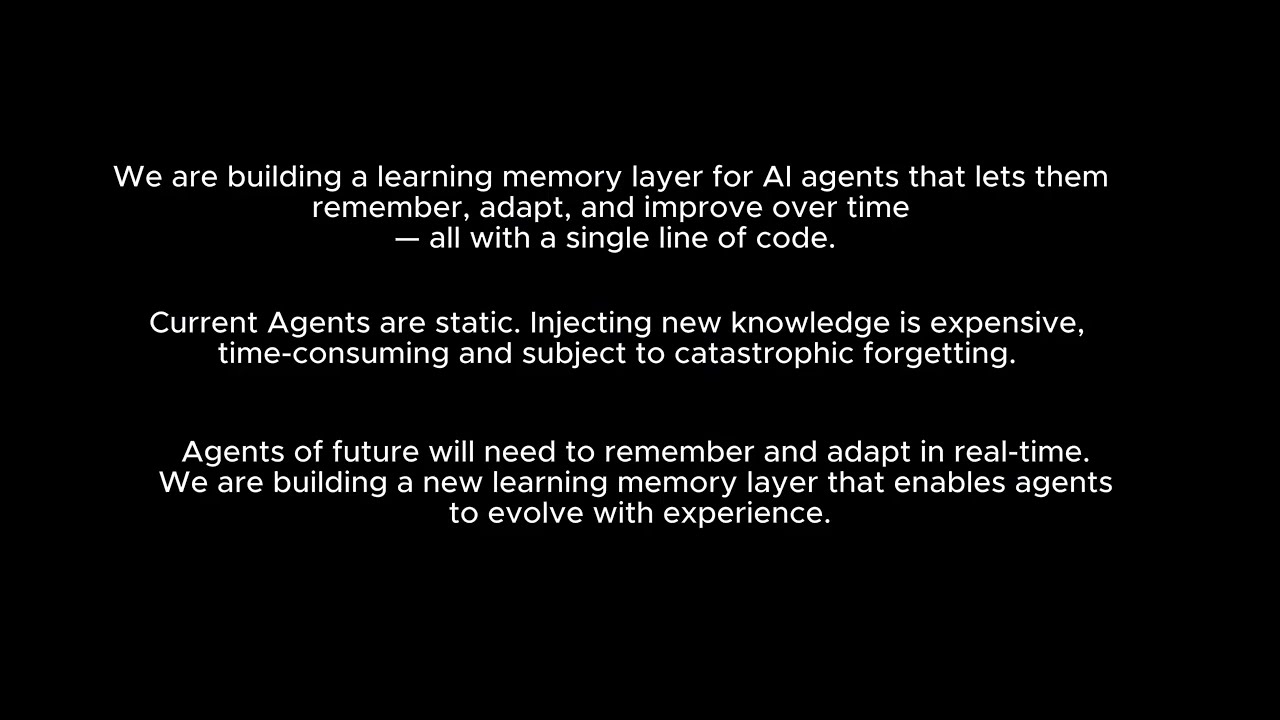

Versanova: Single-Line Code Change Enables AI Agent Learning

THE GIST: Versanova allows AI agents to learn on the job with a single line of code, integrating memory and learning capabilities into existing OpenAI clients.

Kakveda: Failure Intelligence Platform for LLM Systems

THE GIST: Kakveda is an open-source, event-driven platform that provides LLM systems with failure memory, enabling detection, warning, and analysis of recurring failure patterns.

CORE AI Memory Layer Solves Context Window Limits

THE GIST: CORE is a memory layer that connects AI interactions across different platforms, eliminating context window limitations.

AI Infrastructure Startup Rayrift Seeks Acquisition Due to Personal Circumstances

THE GIST: Rayrift, an early-stage AI infrastructure platform, is seeking acquisition or takeover due to the founder's personal challenges and lack of traction.