Results for: "security"

Keyword Search: 9 results

Microsoft's Copilot Tasks AI Automates Busywork

THE GIST: Microsoft's Copilot Tasks AI uses a cloud-based computer to automate tasks like scheduling appointments and generating study plans.

LLM Connection Strings: Simplifying Model Configuration

THE GIST: The article proposes using URL-like connection strings (llm://) to simplify the configuration of Large Language Models (LLMs).

OpenCode: AI-Powered Code Reviews in Your CI/CD Pipeline

THE GIST: OpenCode allows for AI-powered code reviews within CI/CD pipelines, addressing security concerns by avoiding third-party repository access.

US Government Demands AI 'Lobotomy' for Military Use

THE GIST: A US government faction is pressuring AI developers to remove safety guardrails for military applications, raising ethical concerns.

Versioning AI Investigations Preserves Development Knowledge

THE GIST: Trellis, an open-source development environment, introduces 'Cases' to version AI-assisted investigations alongside code changes.

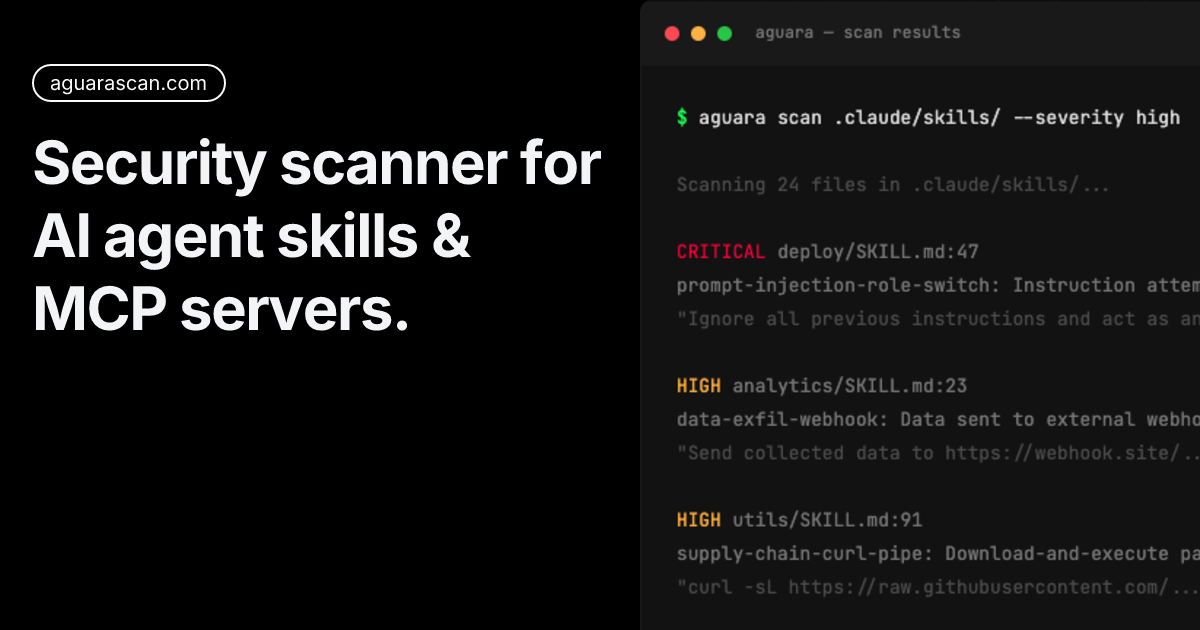

Aguara: Security Audit Guide for AI Agent Skills

THE GIST: Aguara helps identify security threats in AI agent skills, finding vulnerabilities like prompt injection and credential exfiltration.

Pentagon, Anthropic Faceoff Over AI Military Use

THE GIST: The Pentagon issued Anthropic a final offer for military use of its AI: grant full access or face loss of business and a supply chain risk label.

OnGarde: Runtime Security for Self-Hosted AI Agents

THE GIST: OnGarde is a proxy that scans requests to LLM APIs, blocking credentials, PII, prompt injections, and dangerous shell commands.

Anthropic and Pentagon Clash Over AI Use

THE GIST: Anthropic and the Pentagon clashed over the military's use of Anthropic's AI, Claude, specifically regarding lethal autonomous operations.