Results for: "llm"

Keyword Search 9 results

AI Project Audit: Zero Tamper-Evident LLM Evidence Found

THE GIST: An audit of 30 AI projects revealed a complete lack of tamper-evident audit trails for LLM calls.

Agentic Gatekeeper: AI Pre-Commit Hook for Auto-Patching Logic Errors

THE GIST: Agentic Gatekeeper is an AI-powered VS Code extension that automatically patches code to enforce architectural and stylistic rules before committing.

Solving AI's 'Jagged Intelligence' Problem with Structured Knowledge

THE GIST: AI's 'jagged intelligence'—inconsistent performance due to lack of real-world knowledge—can be solved by integrating structured, human-like knowledge databases.

Saga: A Project Tracker MCP Server for AI Agents

THE GIST: Saga is a zero-setup, SQLite-backed MCP server providing AI agents with a structured project tracker to maintain state across sessions.

BreakPoint: Local CI Gate for LLM Output Changes

THE GIST: BreakPoint is a local CI gate that prevents bad LLM releases by evaluating cost, PII, and drift before deployment.

Legend of Elya: LLM Runs on Nintendo 64 Hardware

THE GIST: A nano-GPT language model runs entirely on a Nintendo 64, generating real-time responses using fixed-point arithmetic.

Taalas ASIC Chip: Llama 3.1 Inference at 17,000 Tokens/Second

THE GIST: Taalas' ASIC runs Llama 3.1 at 17,000 tokens/second; the company claims 10x better cost and energy efficiency than GPUs, achieved by hardwiring the model weights.

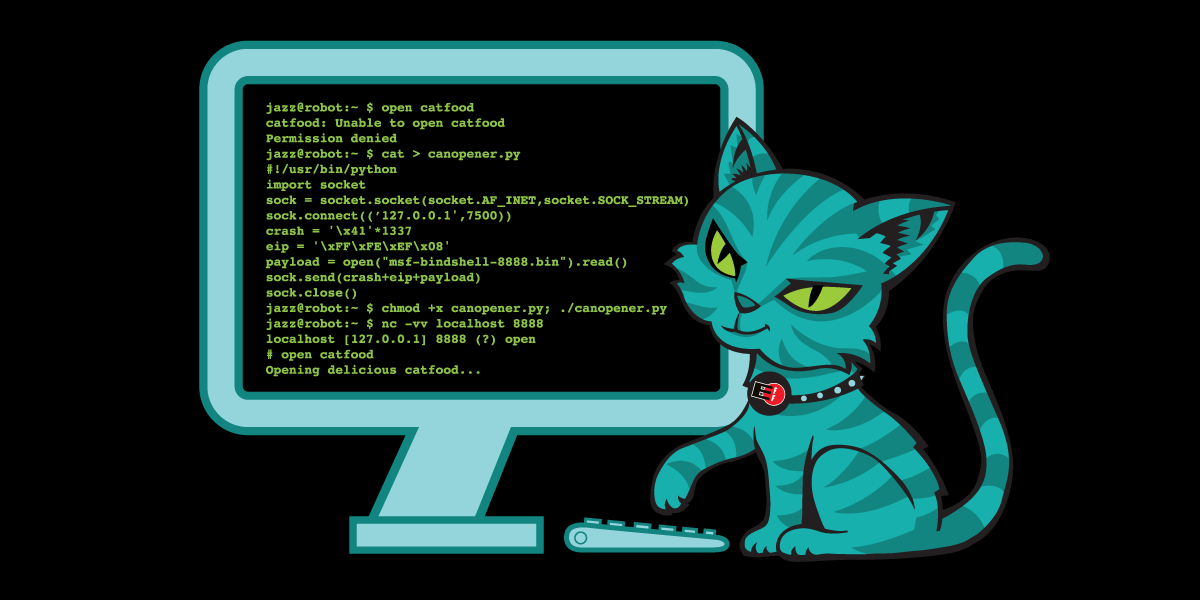

InferShield: Open-Source Security Proxy for LLM Inference

THE GIST: InferShield is an open-source security proxy for LLM inference, providing real-time threat detection, policy enforcement, and audit trails without code changes.

EFF Requires Human Authorship for Open-Source Code Contributions

THE GIST: EFF now requires human authorship and understanding of code contributions to its open-source projects, addressing concerns about LLM-generated bugs and review burdens.