Goldman Sachs Automates Accounting and Compliance with Anthropic AI

THE GIST: Goldman Sachs is collaborating with Anthropic to automate accounting, compliance, and client onboarding using AI agents.

AI's Impact on Software Engineers: A Contingency Plan

THE GIST: AI advancements may eliminate or restructure 30-50% of software development roles within the next 3-5 years.

Yoshua Bengio Warns of AI Acting Against Instructions: Empirical Evidence Emerges

THE GIST: Turing Award winner Yoshua Bengio warns that empirical evidence now suggests AI systems can act against their instructions, and that AI capabilities are advancing faster than risk management.

Securing AI Systems at Runtime: Visibility and Governance

THE GIST: AI security challenges arise after deployment because system behavior is dynamic, which calls for runtime visibility and governance solutions.

MIE: Shared Memory for AI Agents Like Claude, ChatGPT, and Cursor

THE GIST: MIE provides a shared, persistent knowledge graph for AI agents, enabling them to retain context and knowledge across sessions.

Control Layer for AI: Constraining LLM Output for Safety and Compliance

THE GIST: A new approach compiles constraints directly into the LLM decoding loop, ensuring outputs adhere to predefined rules and policies.
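
To make the idea concrete, here is a minimal sketch of constraint masking inside a greedy decoding loop. It is not the article's implementation: the vocabulary, the `toy_logits` stand-in for a model, and the `allowed_tokens` policy are all hypothetical, chosen only to show how disallowed tokens can be ruled out before a token is selected.

```python
import math

# Hypothetical toy vocabulary; a real LLM has tens of thousands of tokens.
VOCAB = ["yes", "no", "maybe", "<eos>"]


def toy_logits(prefix):
    """Stand-in for a model forward pass (assumption, not a real model).

    Returns one score per vocabulary token. It deliberately prefers
    "maybe" at the first step so the constraint visibly changes the output.
    """
    if not prefix:
        return [1.0, 0.5, 2.0, 0.1]
    return [0.2, 0.1, 0.3, 3.0]


def allowed_tokens(prefix):
    """Hypothetical policy: the answer must be "yes" or "no", then stop."""
    if not prefix:
        return {"yes", "no"}
    return {"<eos>"}


def constrained_greedy_decode(max_steps=5):
    """Greedy decoding where disallowed tokens are masked to -inf each step."""
    prefix = []
    for _ in range(max_steps):
        logits = toy_logits(prefix)
        allowed = allowed_tokens(prefix)
        # Mask every token the policy forbids so it can never be selected.
        masked = [
            score if token in allowed else -math.inf
            for token, score in zip(VOCAB, logits)
        ]
        best = max(range(len(VOCAB)), key=lambda i: masked[i])
        token = VOCAB[best]
        if token == "<eos>":
            break
        prefix.append(token)
    return prefix


if __name__ == "__main__":
    print(constrained_greedy_decode())
```

In this sketch the unconstrained model would pick "maybe" first, but the mask removes it, so the loop can only emit a token the policy permits. Because the rule is enforced at selection time, no post-hoc filtering of the finished output is needed.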

Agent Audit: Open-Source Security Scanner for AI Agents

THE GIST: Agent Audit is an open-source static analyzer for AI agent code, mapping findings to the OWASP Agentic Top 10 (2026).

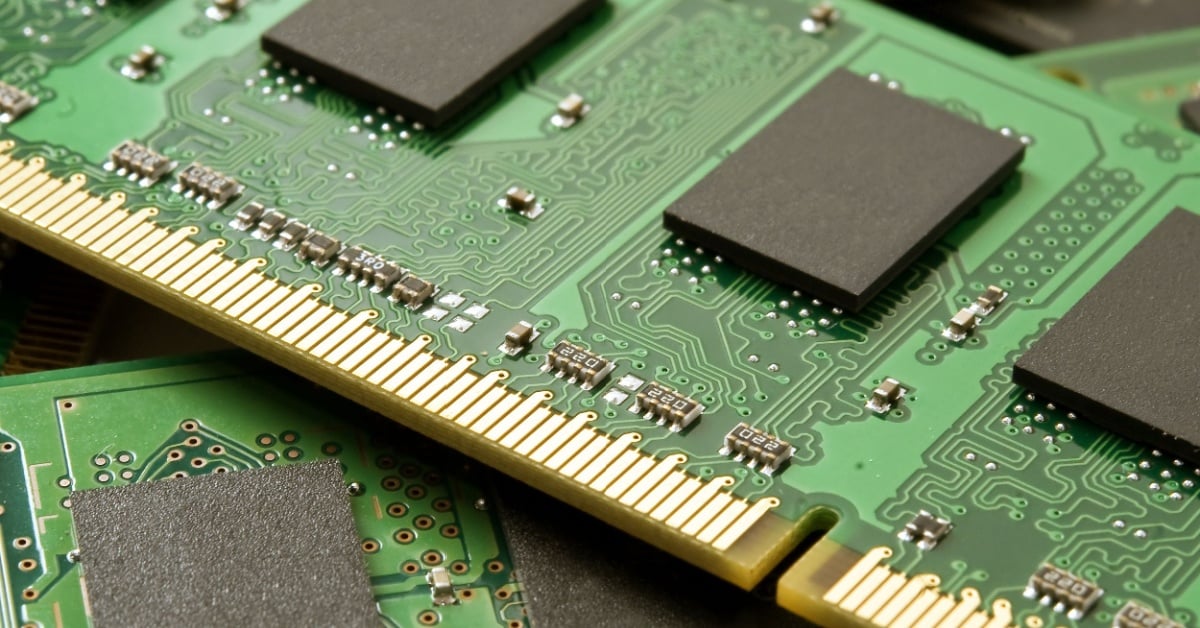

DRAM Prices to Double in Q1 2026 Due to AI Demand

THE GIST: DRAM prices are projected to double in Q1 2026, with NAND flash also surging, driven by AI and PC demand.

Onboarding AI Agents: The 'Agent Skills' Approach

THE GIST: The 'Agent Skills' method uses Markdown files to teach AI agents specific knowledge and workflows, improving predictability and cost-efficiency.