AcidTest: Security Scanner for AI Agent Skills

THE GIST: AcidTest is a security scanner that checks AI agent skills for vulnerabilities before they are installed.

Educators Integrate AI, Emphasize Critical Thinking

THE GIST: Canadian educators are integrating AI into classrooms, setting rules, and encouraging responsible use alongside critical thinking.

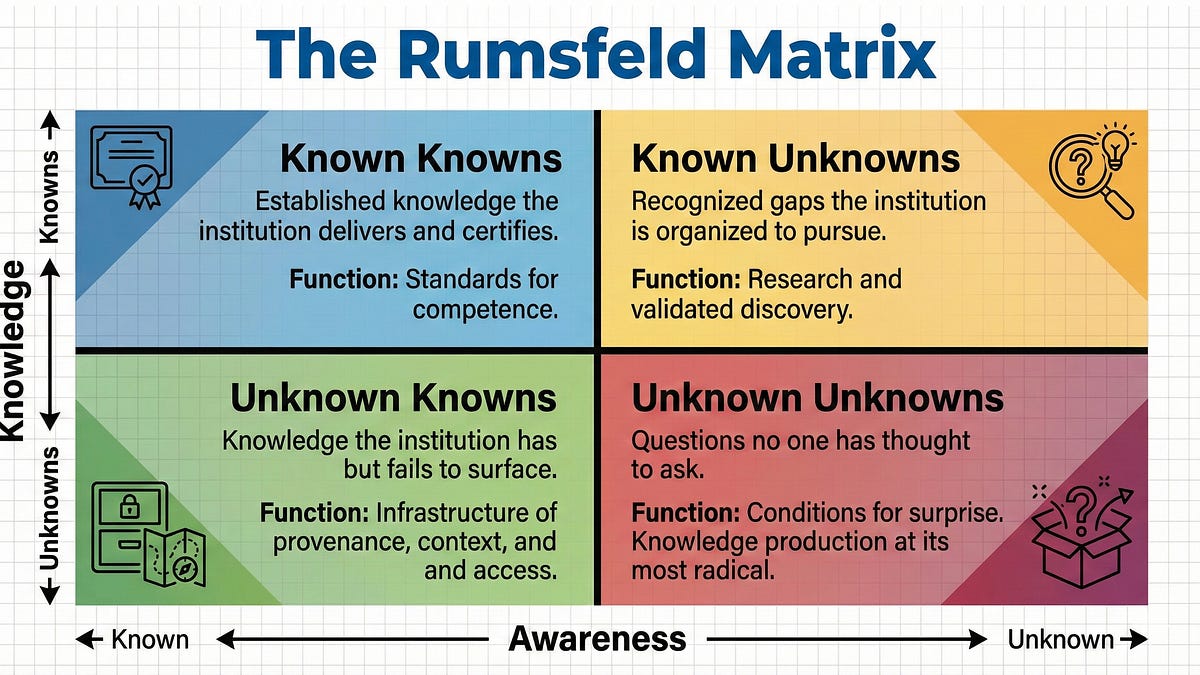

Applying the Rumsfeld Matrix to AI in Higher Education

THE GIST: The Rumsfeld Matrix helps universities address AI's impact on knowledge and education.

Lgit: AI-Powered Git Commits in Rust

THE GIST: Lgit is a command-line tool written in Rust that uses AI to generate commit messages for Git, supporting multiple AI providers and GPG signing.

Self-Healing AI System Uses Claude Code for Autonomous Recovery

THE GIST: An autonomous 4-tier recovery system for OpenClaw Gateway uses Claude Code to diagnose and fix problems, escalating to human alerts only when necessary.

Empusa: Open-Source Dashboard Prevents AI Agent API Credit Burnout

THE GIST: Empusa is an open-source dashboard for monitoring, debugging, and intervening in autonomous AI agents in real time, so they do not waste API credits.

Cheaper LLM Leads to Higher Costs Due to Hidden Issues

THE GIST: Switching to a cheaper LLM increased overall costs because of infinite loops and infrastructure issues.

LLM Contamination Paper's Cloning Suggests Silent Validation

THE GIST: Sustained cloning of an LLM contamination paper, coupled with zero public feedback, suggests silent validation by security-conscious organizations.

Cognee: Streamlining AI Agent Memory with Knowledge Graphs

THE GIST: Cognee is an open-source tool that uses knowledge graphs and vector search to give AI agents persistent, dynamic memory as a replacement for traditional RAG systems.