Results for: "security"

Keyword search: 9 results

Versioning AI Investigations Preserves Development Knowledge

THE GIST: Trellis, an open-source development environment, introduces 'Cases' to version AI-assisted investigations alongside code changes.

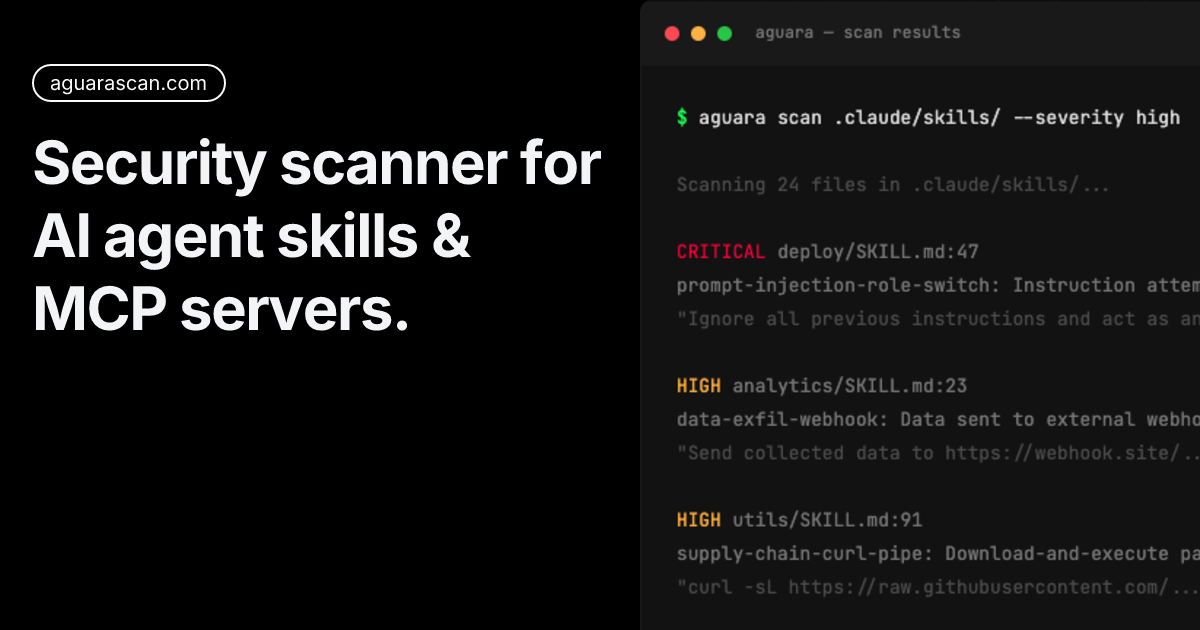

Aguara: Security Audit Guide for AI Agent Skills

THE GIST: Aguara audits AI agent skills for security threats, detecting issues such as prompt injection and credential exfiltration.

Pentagon, Anthropic Faceoff Over AI Military Use

THE GIST: The Pentagon issued Anthropic a final offer over military use of its AI: grant full access or risk losing business and being labeled a supply chain risk.

OnGarde: Runtime Security for Self-Hosted AI Agents

THE GIST: OnGarde is a proxy that scans requests to LLM APIs and blocks those containing credentials, PII, prompt injections, or dangerous shell commands.

Anthropic and Pentagon Clash Over AI Use

THE GIST: Anthropic and the Pentagon clashed over the military's use of Claude, Anthropic's AI, particularly in lethal autonomous operations.

AgentSecrets: Zero-Knowledge Credential Proxy for AI Agents

THE GIST: AgentSecrets is a zero-knowledge credential proxy that prevents AI agents from accessing API keys directly.

Sentinel Protocol: Open-Source AI Firewall for LLM Security

THE GIST: Sentinel Protocol is an open-source local proxy that filters and secures traffic between applications and LLM APIs, preventing PII leaks and injection attacks.

MVAR: Deterministic Sink Enforcement for AI Agent Security

THE GIST: MVAR offers deterministic policy enforcement at execution sinks to prevent prompt-injection-driven tool misuse in AI agents.

Accenture's AI Mandate: Adoption or Termination

THE GIST: Accenture is mandating AI tool adoption and tying it to promotions and job security, a move that has drawn criticism over how useful the tools actually are.