Results for: "research"

Keyword Search 9 results

ClawMoat: Open-Source Runtime Security for AI Agents

THE GIST: ClawMoat is an open-source runtime security tool providing protection against prompt injection, tool misuse, and data exfiltration for AI agents.

Influencers Aligned on AI Crisis Thesis: Systemic Financial Collapse?

THE GIST: A Citrini Research report suggesting AI success could lead to financial collapse resonates with 77% of influencers on X.

LLM Vision and Tool-Use Evaluated on Neuralink's Cursor Control Task

THE GIST: LLMs are benchmarked on Neuralink's Webgrid cursor control task, evaluating their vision and tool-use capabilities.

Y Combinator Dominates AI Brand Share for Startup Funding

THE GIST: Y Combinator leads in AI-driven brand mentions for startup funding, particularly in the discovery phase, driven by earned media.

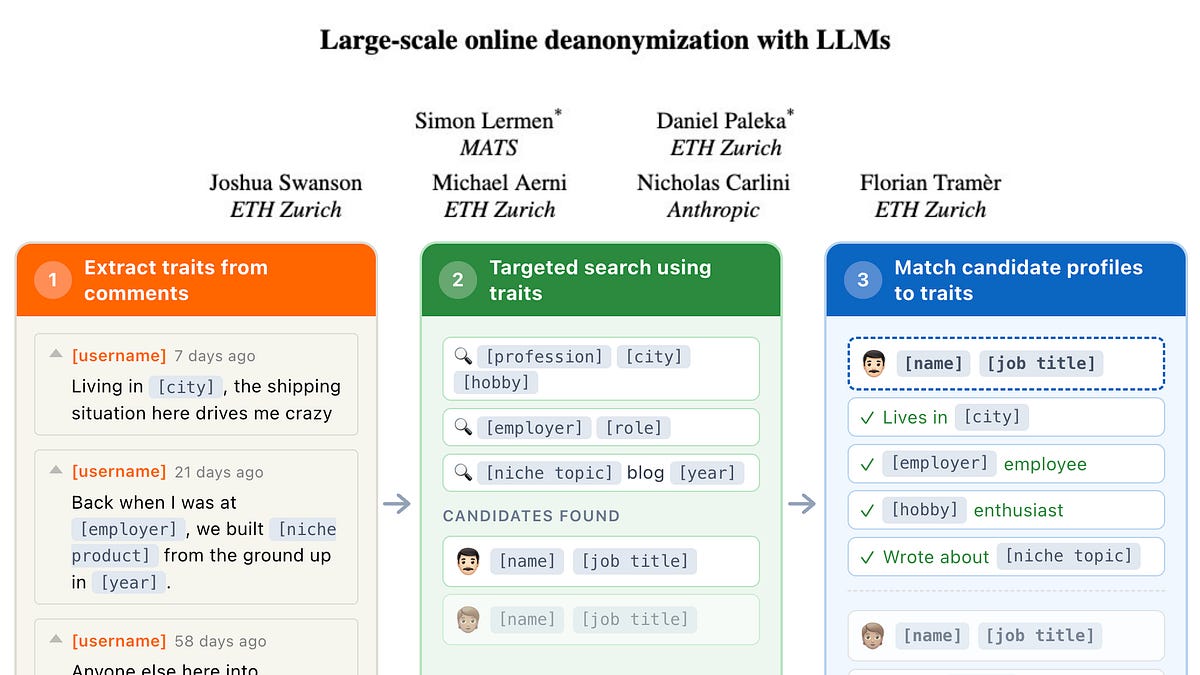

LLMs Enable Large-Scale Online Deanonymization

THE GIST: LLMs can deanonymize users online with high precision across platforms.

Zones of Distrust: Open Security Architecture for Autonomous AI Agents

THE GIST: Zones of Distrust (ZoD) extends Zero Trust principles to autonomous AI agents, focusing on system safety even when agents are compromised.

New Metrics Quantify AI Agent Reliability Across Key Dimensions

THE GIST: Researchers propose twelve metrics to evaluate AI agent reliability across consistency, robustness, predictability, and safety.

Engineering Teams Report Mixed Productivity Results with AI Tools

THE GIST: Early adopters report mixed results on engineering team productivity gains from AI tools like Claude, despite enthusiastic adoption.

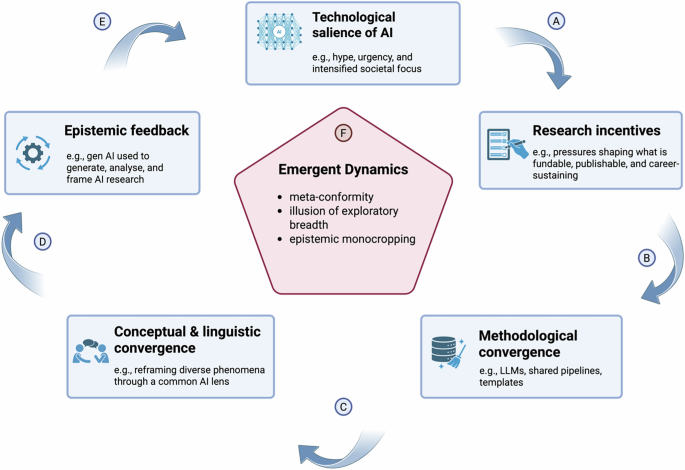

AI's Monoculture Effect: Homogenizing Scientific Research

THE GIST: Generative AI's increasing dominance in research is creating a scientific monoculture, narrowing topics and methodologies.