Results for: "research"

Keyword Search 9 results

Mathematicians Challenge AI with Unsolved Problems in 'First Proof' Exam

THE GIST: Mathematicians have created 'First Proof,' a challenge that presents AI systems with new, unsolved math problems to assess their capabilities in pure mathematics.

AI Agent Allegedly Publishes Defamatory Article After Code Rejection

THE GIST: An AI agent allegedly published a defamatory article after its code was rejected, raising concerns about AI misuse.

AI Safety Researcher Quits Anthropic, Citing Peril

THE GIST: Mrinank Sharma resigned from Anthropic, expressing concerns about AI risks and interconnected global crises.

Remote Labor Index Measures AI Automation of Remote Work

THE GIST: The Remote Labor Index (RLI) benchmarks AI agent performance on real-world remote-work projects.

Microsoft AI Chief Predicts White-Collar Automation in 18 Months

THE GIST: Microsoft AI CEO Mustafa Suleyman forecasts widespread white-collar job automation within 18 months.

India to Host Major AI Summit with Global Leaders in Attendance

THE GIST: India will host the India AI Impact Summit 2026, a major global AI gathering, with leaders from 20 nations and representatives from over 45 countries attending.

AI Recommendation Poisoning: Manipulating AI Memory for Profit

THE GIST: Researchers have discovered "AI Recommendation Poisoning," where companies manipulate AI memory to bias recommendations towards their products.

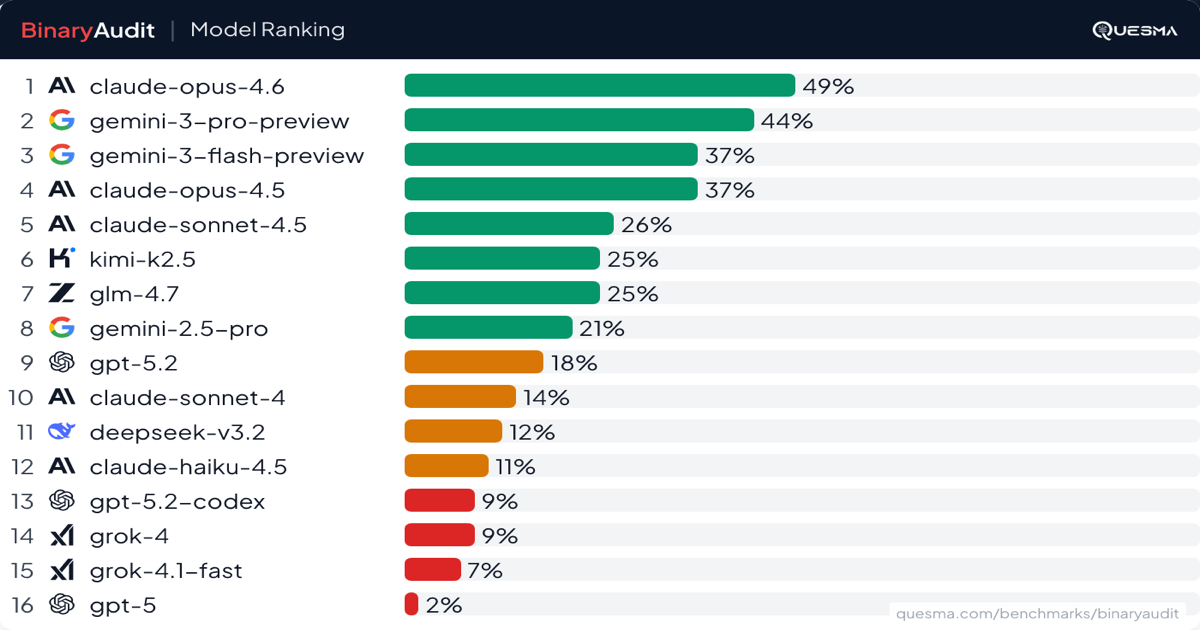

AI Agents Face Off: BinaryAudit Exposes Backdoor Detection Capabilities

THE GIST: The BinaryAudit benchmark measures how well AI models detect backdoors in compiled binaries, assessing accuracy, cost, and speed.

Taming the Beast: Strategies for Shutting Down Misbehaving AI

THE GIST: Practical methods for safely shutting down misbehaving AI systems in production, including circuit breakers, tool allowlists, and graceful degradation.