Results for: "llm"

Keyword Search: 9 results

Meta's 'Avocado' LLM Outperforms Open-Source Models Pre-Training

THE GIST: Meta's next-generation LLM, Avocado, reportedly surpasses leading open-source models in internal assessments, even before post-training.

Asterbot: Hyper-Modular AI Agent Built on WASM

THE GIST: Asterbot is a modular AI agent using WebAssembly (WASM) for swappable components like LLMs and memory.

Recursive Deductive Verification: A New Framework for Reducing AI Hallucinations

THE GIST: Recursive Deductive Verification (RDV) improves LLM reliability by forcing verification of premises before conclusions, reducing hallucinations and logical errors.

Turning the Tables: Using LLMs to Personalize and Enhance Learning

THE GIST: LLMs can create personalized learning curricula and provide interactive tutoring, enhancing human capabilities rather than replacing them.

WatchLLM: Optimize LLM Costs with Caching and Loop Detection

THE GIST: WatchLLM cuts API costs for LLM applications by caching similar prompts and detecting loops.

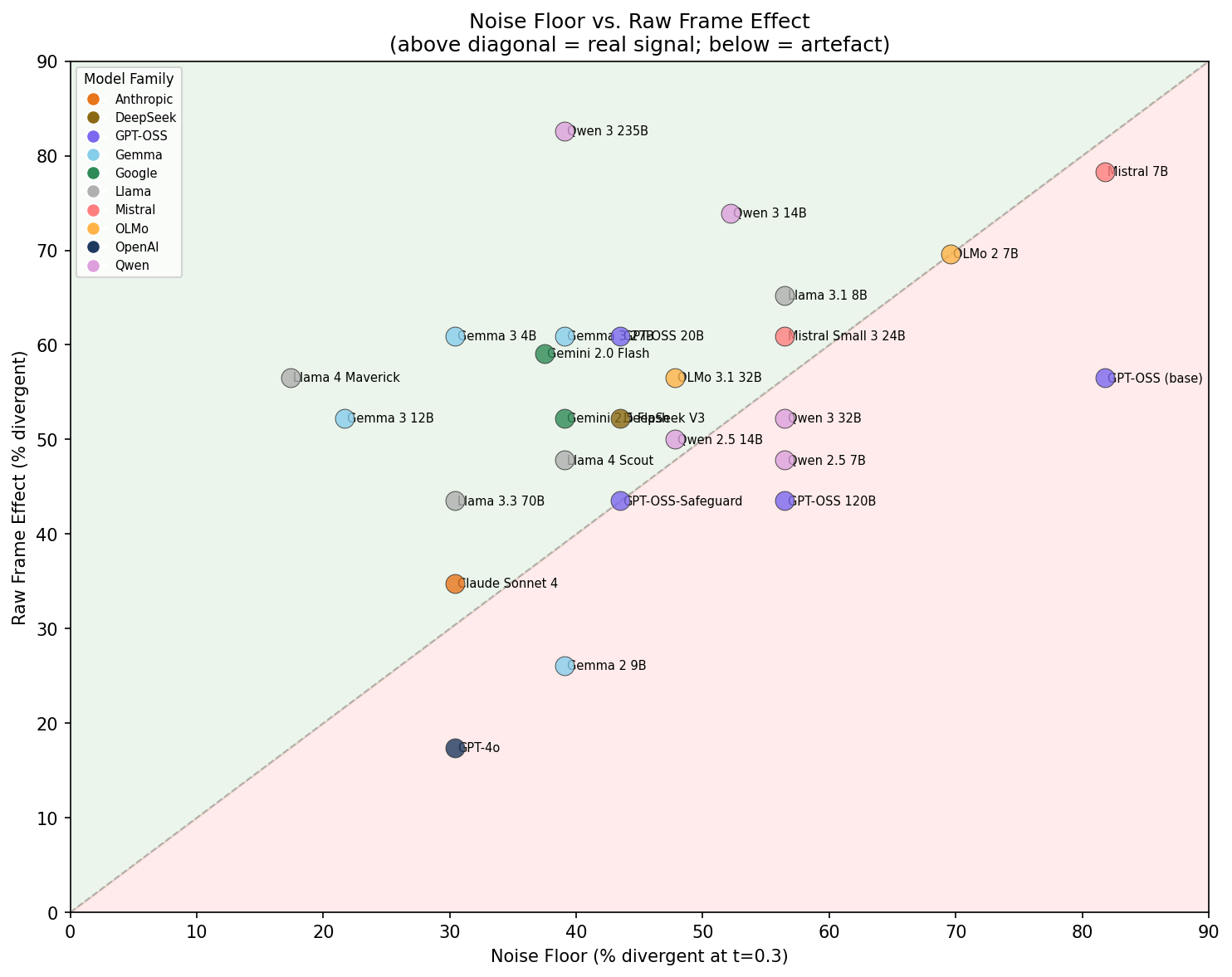

LLM Framing Affects Language, Not Judgment, in AI Safety Evaluations

THE GIST: Framing an LLM evaluator as a 'safety researcher' primarily alters its language use, not its core judgment of AI failures.

LLM-Based Digital Twins Show Limited Psychometric Comparability to Humans

THE GIST: LLM-based digital twins achieve high population-level accuracy but diverge systematically from humans in psychometric comparisons.

AI and the Evolution of Recommendation Systems

THE GIST: LLMs enhance recommendation systems by understanding 'why' users engage, not just 'what' they do.

LocalGPT: Your Private, Rust-Powered AI Assistant

THE GIST: LocalGPT is a Rust-based, local-first AI assistant with persistent memory and autonomous task execution.