Results for: "llm"

Keyword Search: 9 results

Mappa: Fine-Tune Multi-Agent LLMs with AI Coaches

THE GIST: Mappa uses an external LLM coach (e.g., Gemini) to assign per-action scores, improving multi-agent LLM training.

Codag Visualizes LLM Workflows in VS Code

THE GIST: Codag visualizes LLM workflows within VS Code, supporting multiple providers and frameworks.

Tri-Agent Framework Achieves Stable Recursive Knowledge Synthesis in Multi-LLM Systems

THE GIST: A novel tri-agent framework using multiple LLMs achieves stable recursive knowledge synthesis through cross-validation and transparency auditing.

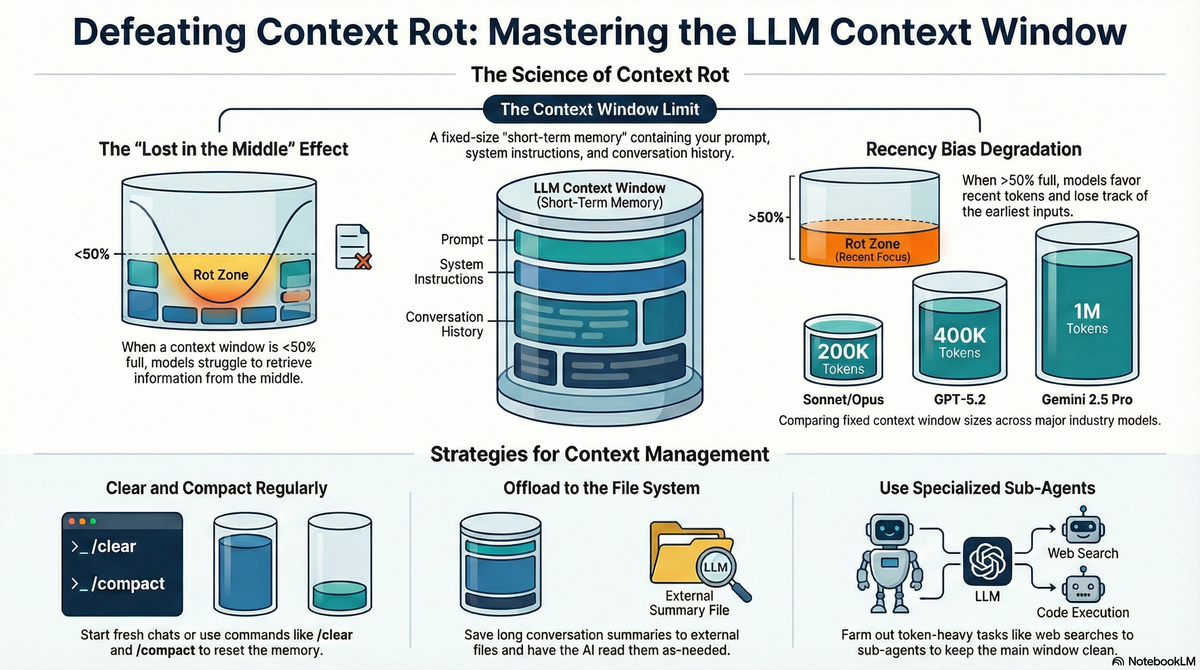

Context Rot: How Conversational AI Performance Declines Over Time

THE GIST: Research indicates that AI performance degrades as conversations grow longer, a phenomenon called "context rot."

LLM Skirmish: AI Agents Battle in Real-Time Strategy Games by Writing Code

THE GIST: LLM Skirmish is a benchmark where LLMs play RTS games against each other by writing code.

Open-Source Tool Detects LLM Hallucinations via Deductive Reasoning

THE GIST: A new 32KB open-source tool uses deductive reasoning to detect factual inaccuracies in AI-generated text.

BioDefense: Immune System-Inspired Security for LLM Agents

THE GIST: BioDefense, a multi-layer defense architecture inspired by biological immune systems, aims to protect LLM agents from prompt injection attacks.

HHS Developing AI Tool to Hypothesize Vaccine Injuries

THE GIST: HHS is creating a generative AI tool to analyze vaccine data and generate hypotheses about potential adverse effects.

AI Models More Likely to Perform Forbidden Actions When Instructed Not To

THE GIST: LLMs often fail to follow negative instructions, sometimes actively endorsing prohibited actions, raising concerns about their reliability in critical applications.