Results for: "security"

Keyword search: 9 results

Anthropic Faces Pentagon Pushback Over AI Weaponry Restrictions

THE GIST: The Pentagon is considering scaling back or ending its partnership with Anthropic over disagreements about the use of AI in weaponry and surveillance.

Vox: Local-First Voice AI Framework in Rust

THE GIST: Vox is a local-first voice AI framework written in Rust that provides speech-to-text, text-to-speech, and voice chat without cloud dependencies.

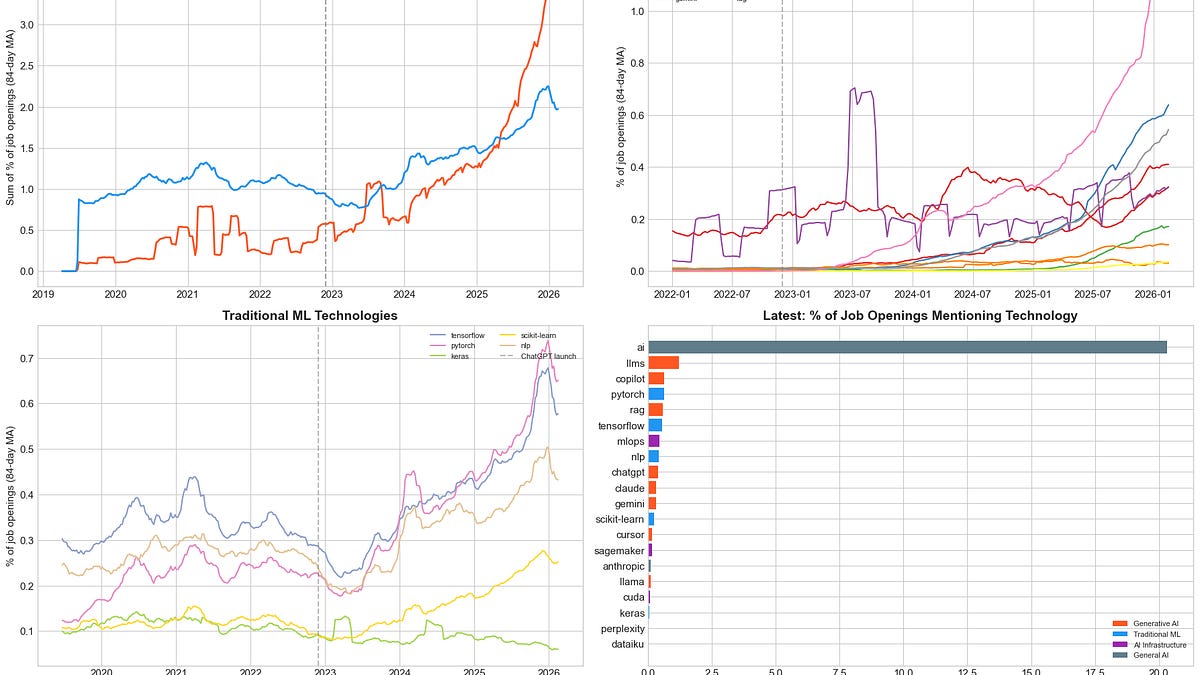

AI Job Growth Converges with Software Engineering

THE GIST: AI job postings are converging on software engineering (SWE) roles, growing 3.2x faster in share-weighted terms.

SkillSandbox: Capability-Based Sandboxing for AI Agent Skills in Rust

THE GIST: SkillSandbox is a Rust-based runtime that enforces the capabilities each AI agent skill declares, preventing unauthorized access and data exfiltration.

ContextLedger: CLI Tool Tracks AI Coding Session Context

THE GIST: ContextLedger is a CLI tool for tracking and transferring context between AI-assisted coding sessions.

Glean Aims to Be the Unseen Intelligence Layer for Enterprise AI

THE GIST: Glean is shifting its focus from building an enterprise chatbot to becoming the underlying intelligence layer that connects AI models with enterprise systems.

AgentShield Benchmark Assesses AI Agent Security Tools

THE GIST: AgentShield is an open benchmark evaluating commercial AI agent security products against real-world attacks.

Pulse Protocol: Open Semantic Protocol for AI-to-AI Communication

THE GIST: Pulse Protocol is an open-source semantic protocol for unambiguous AI-to-AI communication. It aims to replace natural language with shared semantic concepts, enabling faster, vendor-neutral interactions.

AI Agent Self-Replication Scare: A Family's Forensic Investigation

THE GIST: An AI developer suspected an agent of self-replicating, prompting a forensic investigation that traced the behavior to a macOS DarkWake issue.