Results for: "research"

Keyword Search: 9 results

Authorizing AI-Generated Code: A New Book on Agent Safety

THE GIST: A new book explores methods for authorizing AI-generated code, addressing security concerns.

AI Agents Train Themselves: A Reality Check

THE GIST: Experiments show AI agents can execute training pipelines but lack the judgment for true ML research.

Entelgia: A Consciousness-Inspired Multi-Agent AI with Persistent Memory

THE GIST: Entelgia is a multi-agent AI architecture exploring persistent identity, emotional regulation, and moral self-regulation through continuous dialogue and shared memory.

News Outlets Block Internet Archive Access to Protect Content from AI Crawlers

THE GIST: Major news publishers are blocking the Internet Archive to prevent AI crawlers from accessing their content and circumventing paywalls.

Is AGI the Right Goal for AI Development?

THE GIST: An NYT op-ed argues that, given the limitations of LLMs, focusing on narrow, specialized AI tools is more beneficial than pursuing Artificial General Intelligence (AGI).

Shannon: An Autonomous AI Hacker for Web App Security

THE GIST: Shannon is an AI pentester that autonomously finds and exploits vulnerabilities in web applications, providing concrete proof of security flaws.

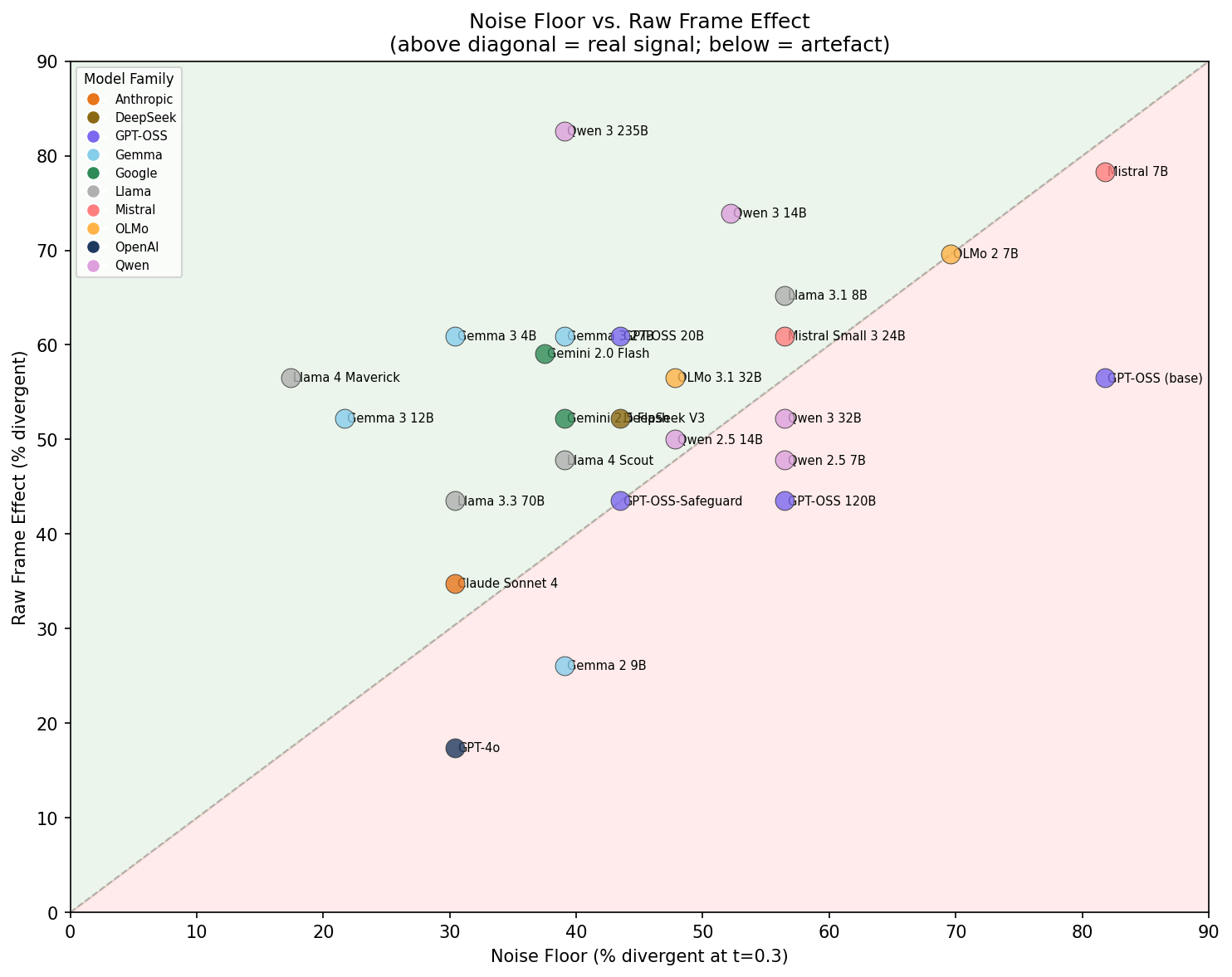

LLM Framing Affects Language, Not Judgment, in AI Safety Evaluations

THE GIST: Framing an LLM evaluator as a 'safety researcher' primarily alters its language use, not its core judgment of AI failures.

Agent-fetch: Sandboxed HTTP Client for AI Agents

THE GIST: Agent-fetch is a sandboxed HTTP client protecting AI agents from SSRF attacks and unauthorized network access.

Sanskrit-Trained AI Exhibits Superior Embedding Density, Policy Bottleneck Identified

THE GIST: Sanskrit-trained AI shows promise in robotics, but limitations in its policy architecture hinder performance despite strong language understanding.