Results for: "security"

Keyword Search: 9 results

Agntor SDK: Building a Trust Layer for AI Agents with Identity, Verification, and Escrow

THE GIST: Agntor SDK provides tools for AI agent identity, verification, escrow, settlement, and reputation, enhancing trust and security in agent interactions.

Open-Source CI Tool Automates AI Coding Workflows

THE GIST: This open-source CI tool automates AI coding workflows by enforcing structural compliance and quality checks through autonomous loops and git hooks.

AI Bots Challenge Online Anonymity and Identity Verification

THE GIST: AI bots' growing ability to mimic human behavior online is making anonymity untenable and driving demand for stronger identity verification.

SafeClaw: Open-Source AI Agent Safety with Deny-by-Default Gating

THE GIST: SafeClaw is an open-source tool that intercepts AI agent actions, requiring approval for risky operations.

AI Recommendation Poisoning: Manipulating AI Memory for Profit

THE GIST: Researchers have discovered "AI Recommendation Poisoning," where companies manipulate AI memory to bias recommendations towards their products.

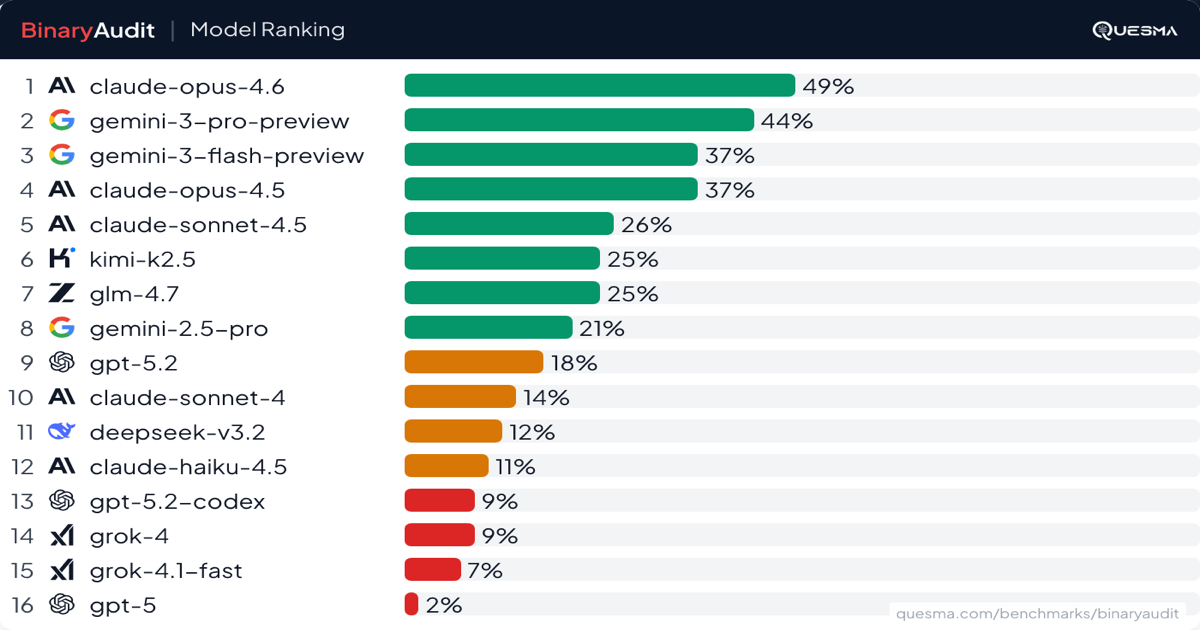

AI Agents Face Off: BinaryAudit Exposes Backdoor Detection Capabilities

THE GIST: The BinaryAudit benchmark measures how well AI models detect backdoors in compiled binaries, assessing accuracy, cost, and speed.

SafeRun Guard: AI Coding Agent Safety Net

THE GIST: SafeRun Guard is a runtime safety firewall for Claude Code plugins, intercepting dangerous commands and file operations to protect codebases.

Network-AI: Distributed Mutex for AI Agent Swarms

THE GIST: Network-AI is an OpenClaw skill for multi-agent coordination, task delegation, and permission-controlled API access in AI agent swarms.

Ziran: AI Agent Security Testing Tool Released

THE GIST: Ziran is a security tool designed to find vulnerabilities in AI agents, including those with tools, memory, and multi-step reasoning capabilities.