Results for: "Access"

Keyword Search: 9 results

News Outlets Block Internet Archive Access to Protect Content from AI Crawlers

THE GIST: Major news publishers are blocking the Internet Archive to prevent AI crawlers from accessing their content and circumventing paywalls.

AI Agent Security Audit Reveals Systemic Vulnerabilities in Public GitHub Repos

THE GIST: An audit of public AI agent configurations on GitHub finds that all of them contain security vulnerabilities, including hardcoded credentials and network exposure.

PLP: An Open Protocol for Managing AI Prompts

THE GIST: PLP is an open protocol designed to decouple AI prompts from code, enabling version control, collaboration, and reusability via RESTful endpoints.

The AI Bubble: A Divide in AI Tool Usage

THE GIST: A significant gap exists between basic AI users and power users, even among professionals, highlighting shallow AI adoption.

Shannon: An Autonomous AI Hacker for Web App Security

THE GIST: Shannon is an AI pentester that autonomously finds and exploits vulnerabilities in web applications, providing concrete proof of security flaws.

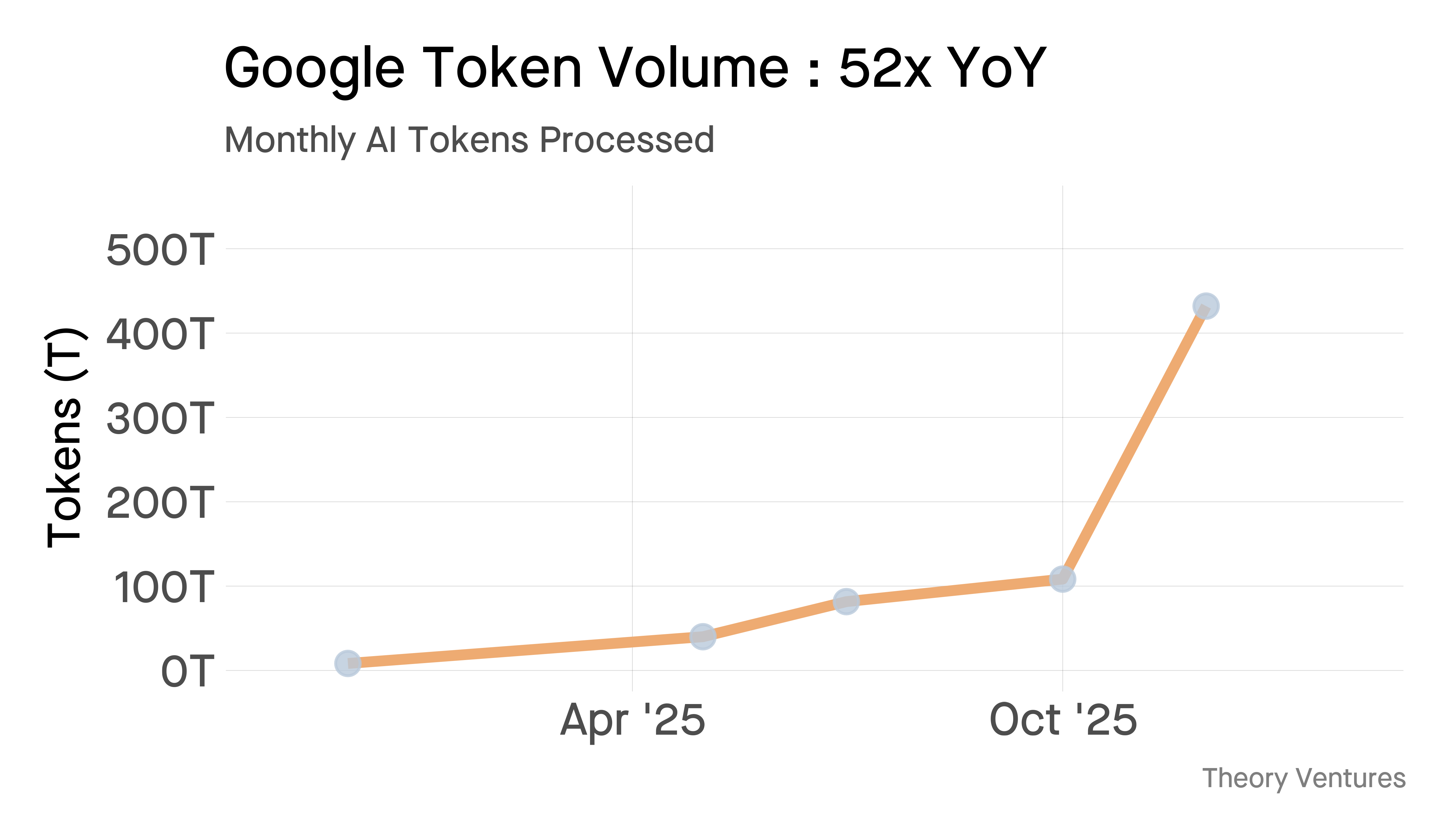

Google's AI Token Processing Grows 52x, Serving Costs Plummet

THE GIST: Google's Gemini now processes over 10 billion tokens per minute, a 52x year-over-year increase, while serving costs have dropped 78%.

Sediment: Local Semantic Memory for AI Agents

THE GIST: Sediment is a local-first semantic memory solution for AI agents, combining vector search, relationship graphs, and access tracking in a single binary.

Turning the Tables: Using LLMs to Personalize and Enhance Learning

THE GIST: LLMs can create personalized learning curricula and provide interactive tutoring, enhancing human capabilities rather than replacing them.

Matchlock: Secure Sandboxing for AI Agents via MicroVMs

THE GIST: Matchlock is a CLI tool that runs AI agents in isolated microVMs, enhancing security by default.