Results for: "llm"

Keyword Search: 9 results

PassLLM: AI Password Guesser Achieves High Accuracy

THE GIST: PassLLM is an AI password-guessing framework that uses personal information for targeted attacks.

RubyLLM-agents: Streamlining AI Agent Development in Rails

THE GIST: RubyLLM-agents is a Rails engine for building, managing, and monitoring LLM-powered AI agents with a real-time dashboard.

Faramesh: Cryptographic Gate for Autonomous AI Agent Security

THE GIST: Faramesh introduces a cryptographic boundary for AI agents, intercepting tool calls and enforcing policy for enhanced security.

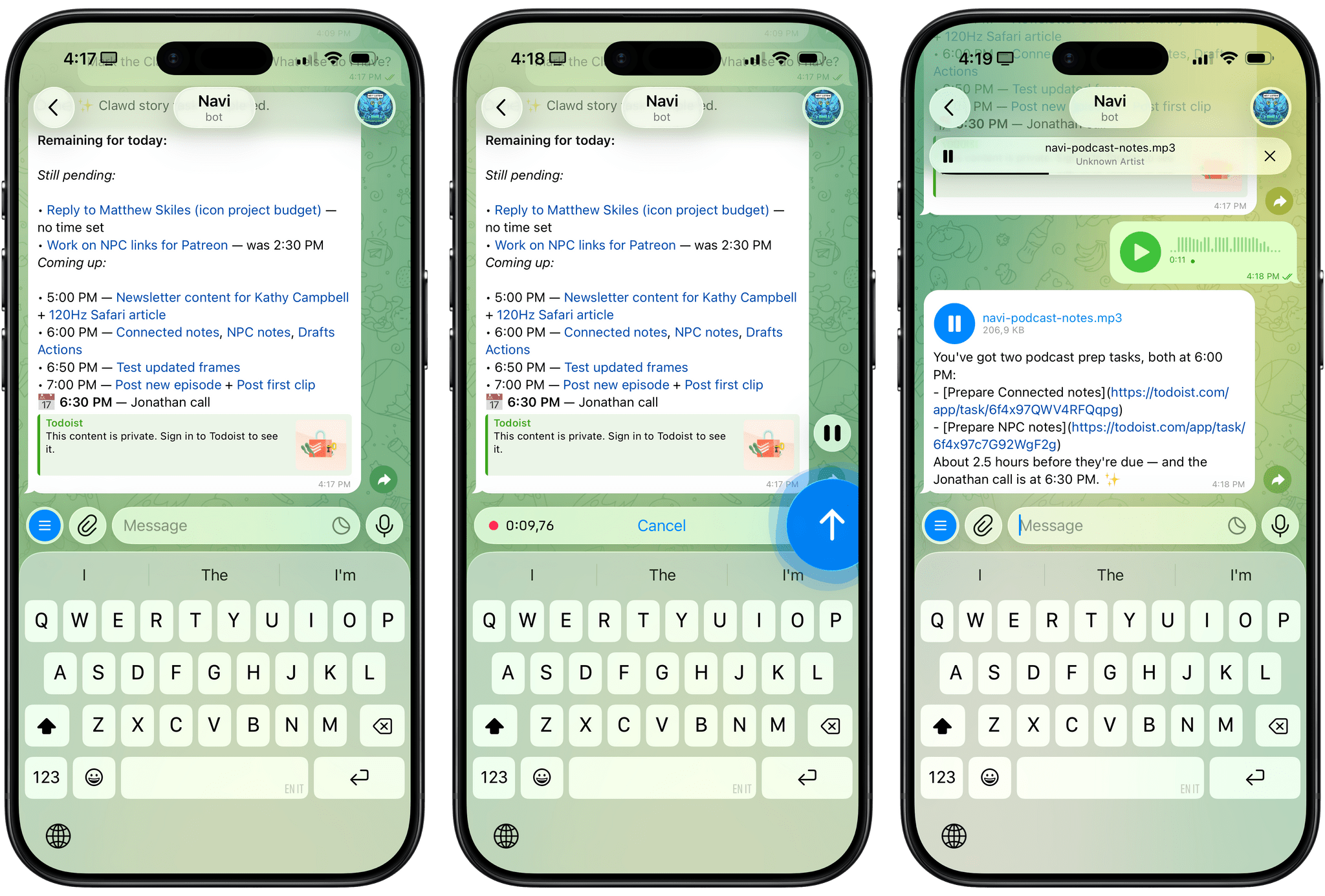

Clawdbot: A Glimpse into Personalized AI Assistants Running Locally

THE GIST: Clawdbot, an open-source project, offers a vision of personalized AI assistants running locally and integrating with messaging apps.

eBay Bans AI 'Buy for Me' Agents in User Agreement Update

THE GIST: eBay explicitly prohibits AI "buy for me" agents and LLM bots from its platform, effective February 20, 2026.

AI Conference NeurIPS Finds Hallucinated Citations in Accepted Papers

THE GIST: GPTZero found 100 hallucinated citations across 51 papers accepted by the prestigious NeurIPS conference.

CausaNova: Deterministic LLM Runtime via Ontology for Constraint Enforcement

THE GIST: CausaNova introduces a deterministic runtime environment for LLMs using ontologies to enforce constraints.

Centralized AI Agent Instruction via Git Submodules

THE GIST: A developer details using Git submodules to manage and replicate instructions for AI coding assistants across multiple projects, ensuring consistency and version control.

BELGI: Deterministic Acceptance Pipeline for LLM Outputs

THE GIST: BELGI is a demo harness for a deterministic acceptance pipeline for LLM outputs, focusing on interaction models and artifact outputs.