Results for: "llm"

Keyword Search: 9 results

LLMs as Universal Translators: Semantic Integration Layer Proposal

THE GIST: A proposal suggests using LLMs for a Semantic Integration Layer (SIL), enabling interoperability between systems via natural language instead of rigid APIs.

Differential Transformer V2: Faster Decoding via Query Head Doubling

THE GIST: Differential Transformer V2 (DIFF V2) achieves faster decoding speeds by doubling query heads without increasing key-value heads.

Mitigating Risks of Running LLM-Generated Code: A Hobbyist Programmer's Concerns

THE GIST: A hobbyist programmer expresses concerns about the security risks of running LLM-generated code and seeks advice on mitigation strategies.

AI: Reasoning or Regurgitation? Challenging the Stochastic Parrot Narrative

THE GIST: Evidence suggests advanced AI systems form internal models, representing concepts beyond memorized patterns.

AI Normalizes Foreign Influence by Prioritizing Accessibility Over Credibility

THE GIST: AI's reliance on accessible sources normalizes foreign influence: authoritarian states optimize propaganda for AI consumption while credible news outlets block AI tools.

Rig: Distributing LLM Inference Across Multiple Machines

THE GIST: Rig enables running large language models across multiple machines using pipeline parallelism.

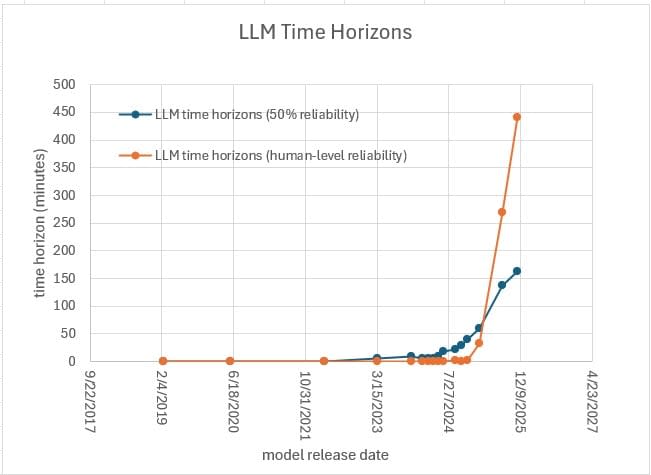

Analysis Suggests METR Underestimates LLM Time Horizons

THE GIST: Analysis suggests METR's benchmarks may underestimate LLM time horizons due to flawed human baselines.

AI Coding Assistance in COBOL: A Mixed Bag for Legacy Systems

THE GIST: AI shows promise in COBOL tasks, but requires human oversight due to system complexities.

6.9B Parameter MoE LLM Implemented in Rust, Go, and Python

THE GIST: A 6.9B parameter Mixture of Experts (MoE) LLM has been implemented from scratch in Rust, Go, and Python with CUDA support.