Results for: "research"

Keyword Search: 9 results

Extracting Backdoor Triggers in LLMs: A New Scanner

THE GIST: A new scanner identifies sleeper-agent-style backdoors in language models by detecting memorized poisoning data and distinctive output patterns.

Adaption Labs Secures $50M to Develop Efficient AI Systems

THE GIST: Adaption Labs, founded by Sara Hooker and Sudip Roy, has secured $50M in seed funding to build AI systems that use less computing power and adapt to tasks more efficiently.

Smart AI Policy Requires Examining Real Harms and Benefits

THE GIST: Effective AI policy must balance potential harms, like bias and environmental impact, with benefits in science, accessibility, and accountability.

Open-Source AI Tool Outperforms LLMs in Literature Reviews

THE GIST: OpenScholar, an open-source AI tool, surpasses LLMs in literature reviews by linking information directly to a database of 45 million open-access articles, ensuring accurate citations.

AI and Higher Ed: An Impending Collapse?

THE GIST: The increasing embrace of AI in universities, from detecting plagiarism to creating assignments, may exacerbate existing issues and contribute to a crisis of confidence in higher education.

AI Math Startup Cracks Unsolved Problems

THE GIST: Axiom, an AI startup, has developed AxiomProver, an AI system that has solved four previously unsolved math problems.

OpenClaw AI 'Skills' Riddled with Malware

THE GIST: Researchers have discovered hundreds of malicious add-ons in the OpenClaw AI agent's marketplace, turning it into a malware delivery platform.

Tri-Agent Framework Achieves Stable Recursive Knowledge Synthesis in Multi-LLM Systems

THE GIST: A novel tri-agent framework using multiple LLMs achieves stable recursive knowledge synthesis through cross-validation and transparency auditing.

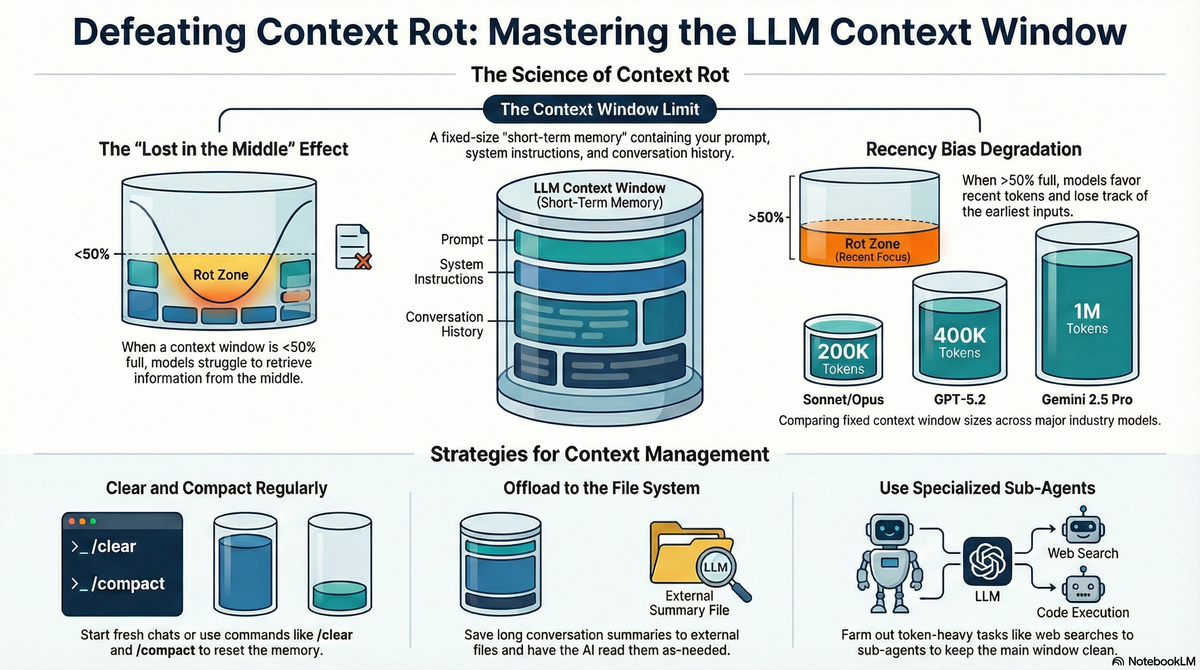

Context Rot: How Conversational AI Performance Declines Over Time

THE GIST: Research indicates that AI performance degrades as conversations grow longer, a phenomenon called "context rot."