Results for: "llm"

Keyword Search 9 resultsSteganography Technique Hides Data in LLM-Generated Text

THE GIST: subtext-codec hides binary data within LLM-generated text using logit-rank steering.

AI Gateway Kit: Capability-Based Routing for LLMs in Node.js

THE GIST: AI Gateway Kit is a Node.js library for managing LLM usage with capability-based routing and rate limiting.

AstroReview: LLM-Driven Agents Tackle Astronomy's Proposal Bottleneck, Boost Acceptance Rates by 66%

THE GIST: AstroReview, an LLM-driven multi-agent framework, automates telescope proposal peer review, significantly improving efficiency, transparency, and proposal quality by addressing bottlenecks in access to modern observatories.

Distill Cleans RAG Context in 12ms, Boosts LLM Reliability Without Extra Calls

THE GIST: Distill, a new Go-based tool, efficiently removes 30-40% of redundant RAG context in approximately 12 milliseconds, dramatically improving LLM reliability and determinism without requiring additional LLM calls. It achieves this by intelligently clustering and re-ranking fetched chunks to provide 8-12 diverse and relevant pieces of information.

AI-Driven Software Development Sees 50% Speed Boost, Set to Go Mainstream in 2026

THE GIST: A CTO reports a 50% acceleration in software development workflow using LLMs like Claude and Cursor in 2025, predicting that such sci-fi level technology will become mainstream for all developers by 2026.

SafeBrowse Unveils Open-Source Prompt-Injection Firewall for AI Security

THE GIST: SafeBrowse is an open-source prompt-injection firewall designed to create a hard security boundary between untrusted web content and LLMs, blocking malicious instructions and poisoned data before it reaches the AI. It features over 50 prompt injection detection patterns and a policy engine for crucial data blocking.

AI Security Baseline 1.0 Launched: Essential Safeguards for LLM Applications by 2026

THE GIST: A new open and free AI Application Security Baseline 1.0 has been released, providing minimum standards for deploying production-ready LLM apps by 2026, covering pre-deployment, CI/CD, runtime, and compliance.

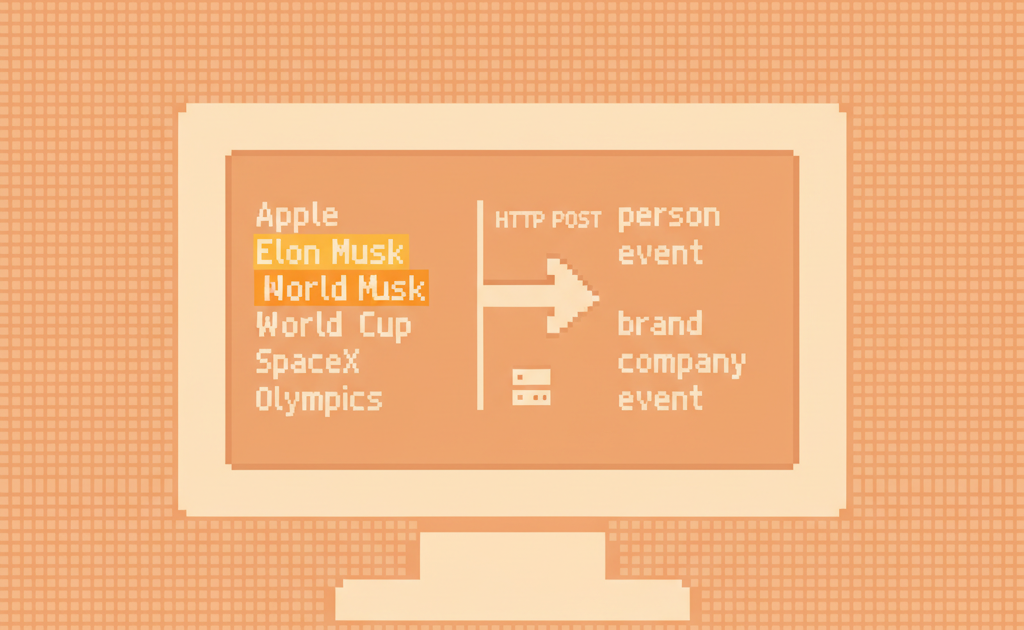

Heeb.ai Unveils LLM Mentions API: Track Brand Visibility and Sentiment in AI-Generated Answers

THE GIST: Heeb.ai has launched an LLM Mentions API, enabling automated tracking of brand mentions and sentiment within AI-generated responses from models like ChatGPT and Gemini, crucial for new-age brand visibility.

Yann LeCun Exits Meta to Launch Advanced AI Research Startup, Signaling Industry Shift

THE GIST: Artificial intelligence pioneer Yann LeCun is departing Meta as Chief AI Scientist at year-end to establish a new startup focused on advanced AI research, including understanding the physical world and complex reasoning. This move follows Meta's recent AI job cuts and a strategic shift towards commercial AI and 'superintelligence' development.