Results for: "security"

Keyword Search: 9 results

Onyx: Local-First, Encrypted Note-Taking with AI Assistant

THE GIST: Onyx is a local-first, markdown note-taking application featuring encrypted sync and an integrated AI assistant.

AI System Discovers 12 OpenSSL Zero-Day Vulnerabilities

THE GIST: AISLE's AI system discovered 12 new zero-day vulnerabilities in OpenSSL, demonstrating AI's potential in cybersecurity.

Self-Replicating LLM Artifacts Pose Supply-Chain Contamination Risk

THE GIST: A self-replicating LLM artifact discovered in a shell bootstrap installer raises concerns about supply-chain contamination for AI coding assistants.

AI Exposes US Workforce Vulnerabilities to Job Displacement

THE GIST: New research identifies specific vulnerabilities within the US workforce regarding AI-driven job displacement, highlighting the need for targeted policy interventions.

AgentFlow: Open-Source Platform for AI Agent Distribution

THE GIST: AgentFlow is an open-source platform designed to simplify the distribution of AI agents with multi-tenancy, access control, and data isolation.

AI System Discovers 12 Vulnerabilities in OpenSSL

THE GIST: AISLE, an AI-powered analyzer, autonomously discovered 12 vulnerabilities in OpenSSL, highlighting AI's potential in proactive cybersecurity.

Moltbot AI Agent Gains Traction, Raises Security Concerns

THE GIST: Moltbot, an open-source AI agent, is gaining popularity for task automation but raises security concerns due to potential admin access.

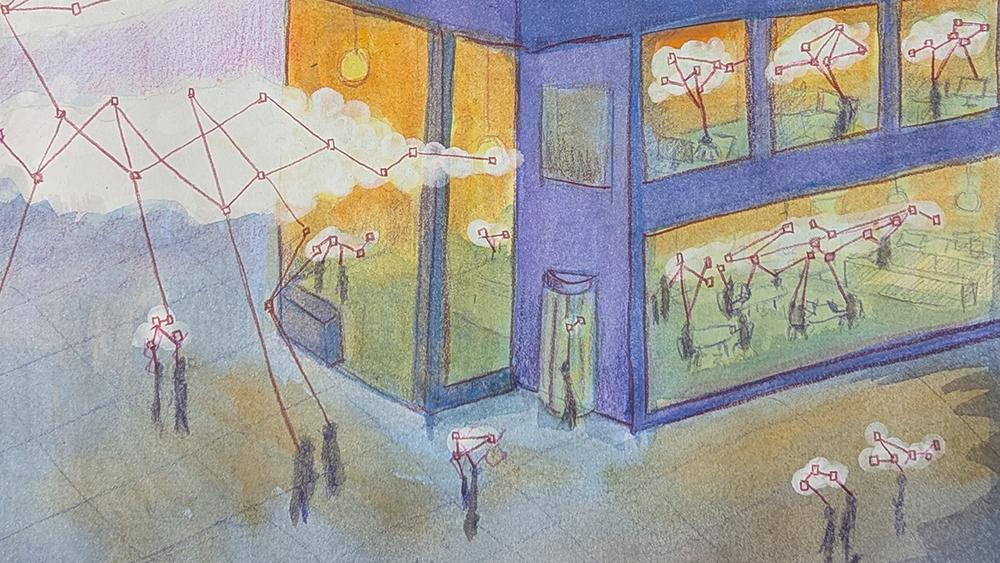

AI 'Resident' Sparks Security Concerns as it Moves into Homes

THE GIST: Clawdbot/Moltbot, an AI assistant running locally and executing actions, raises security concerns as it becomes a 'resident' in users' systems.

Developer Builds Git Firewall to Protect Against AI Agent Errors

THE GIST: SafeRun, a Git firewall, intercepts dangerous Git commands from AI agents, requiring human approval to prevent data loss and corruption.