Results for: "research"

Keyword Search: 9 results

Sandvault: Secure macOS Sandboxing for AI Agents

THE GIST: Sandvault isolates AI agents in macOS user accounts, enhancing security without virtualization overhead.

The AI Governance 'Runtime Decision Ownership' Gap

THE GIST: Organizations struggle to prove AI decision ownership at runtime, leading to accountability gaps.

Prompt Repetition Enhances Accuracy in Non-Reasoning LLMs

THE GIST: When reasoning is not used, repeating the input prompt improves performance for popular LLMs (Gemini, GPT, Claude, and DeepSeek) without increasing the number of generated tokens or latency.

AI Job Displacement: American Workers' Adaptability Assessed

THE GIST: A study finds a positive correlation between AI exposure and adaptive capacity among American workers, but identifies pockets of workers who remain vulnerable.

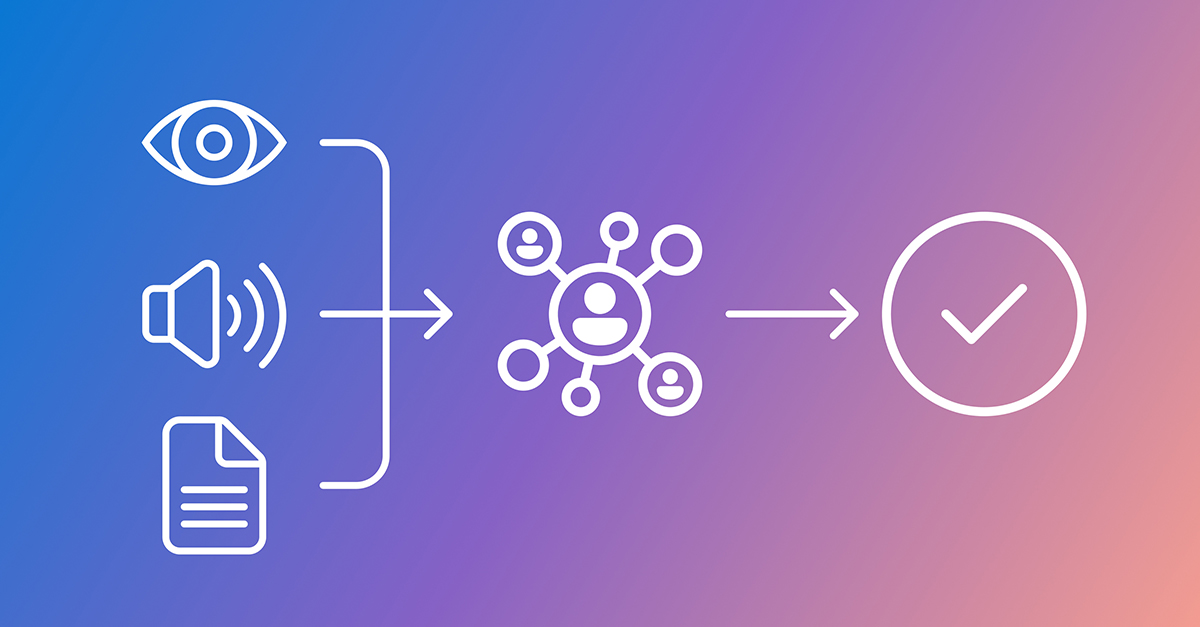

Argos Framework Improves AI Reliability with Multimodal Reinforcement Learning

THE GIST: Argos, a new framework, aims to improve AI reliability by using visual and temporal evidence to verify multimodal reinforcement learning.

Research Documents Observable Behavior of Third-Party AI Systems Under Disclosure Absence

THE GIST: A journal article documents the observable behavior of third-party AI systems that generate representations of enterprises in the absence of disclosure.

Open Coscientist: AI Hypothesis Generation Tool

THE GIST: Open Coscientist is an open-source tool for AI-driven research hypothesis generation, review, and ranking.

Humans& Startup Secures $480M Seed Funding at $4.48B Valuation

THE GIST: Humans&, an AI startup focused on human empowerment, raised $480M in seed funding at a $4.48B valuation.

Nadella Warns AI Boom Could Falter Without Wider Adoption

THE GIST: Microsoft CEO Satya Nadella cautions that AI's success hinges on broad adoption across industries and geographies.