AI Accountability Platform 'ASCERTAIN' Enforces Validation Before Output

Sonic Intelligence

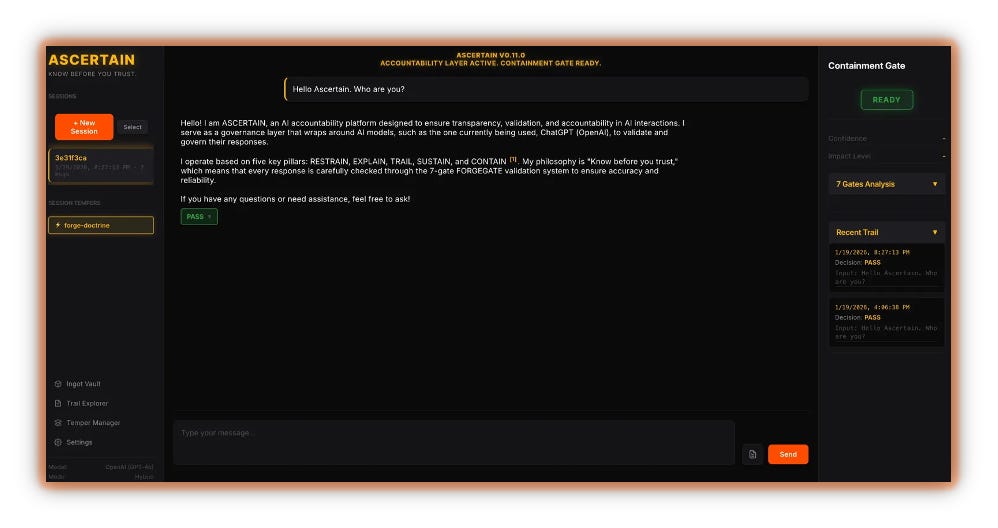

ASCERTAIN, an AI accountability platform, enforces validation on AI outputs, addressing the lack of governance in current AI systems.

Explain Like I'm Five

"Imagine a robot that always checks its answers before telling them to you, so you know it's not making stuff up."

Deep Intelligence Analysis

The article contrasts corporate promises of 'responsible AI' with ASCERTAIN's enforcement-based approach. The author gives a concrete example of the platform catching and flagging a potential issue, which illustrates its practical value. The platform also documents violations transparently rather than simply blocking problematic responses.

ASCERTAIN could be a significant step towards more trustworthy and reliable AI systems. If it succeeds, it may pave the way for wider adoption of AI governance frameworks and encourage AI developers to prioritize accountability in their models. Its long-term impact will depend on how well it adapts to evolving AI technologies and how effectively it addresses a wide range of AI-related risks.

*Transparency Disclosure: This analysis was composed by an AI assistant to provide an objective summary and diverse perspectives on the provided news articles. It is intended for informational purposes and should not be considered financial advice. The AI is trained to avoid biased or misleading information.*

Impact Assessment

ASCERTAIN addresses a critical gap in the AI landscape by providing a quality-control layer that enforces accountability. This platform could help mitigate risks associated with AI hallucinations, biases, and inaccuracies, fostering greater trust in AI systems.

Key Details

- ASCERTAIN is an AI accountability platform designed to wrap around existing AI models.

- ASCERTAIN operates on Five Pillars: RESTRAIN, EXPLAIN, TRAIL, SUSTAIN, and CONTAIN.

- ASCERTAIN uses a 7-gate FORGEGATE validation system.
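
As a rough illustration of the "wrap an existing model, validate before output" idea in the details above, here is a minimal Python sketch. It is not ASCERTAIN's implementation: the source does not describe the seven FORGEGATE gates or any API, so `wrap_model`, `ValidatedOutput`, the two example gates, and `toy_model` are all hypothetical names invented for this example. The sketch only shows the general pattern of running a draft response through a chain of checks and recording violations alongside the text rather than silently blocking it.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional

# A gate inspects a draft response and returns a violation message,
# or None if the draft passes. (Hypothetical interface, not ASCERTAIN's.)
Gate = Callable[[str], Optional[str]]


@dataclass
class ValidatedOutput:
    """A model response together with every violation the gates recorded."""
    text: str
    violations: List[str] = field(default_factory=list)

    @property
    def passed(self) -> bool:
        return not self.violations


def wrap_model(model: Callable[[str], str],
               gates: List[Gate]) -> Callable[[str], ValidatedOutput]:
    """Return a callable that runs every gate on the model's draft before release."""
    def validated(prompt: str) -> ValidatedOutput:
        draft = model(prompt)
        # Run all gates and keep every violation, so issues are documented
        # rather than the response being dropped at the first failure.
        violations = [msg for gate in gates if (msg := gate(draft)) is not None]
        return ValidatedOutput(text=draft, violations=violations)
    return validated


# Two illustrative gates; a real system would use far richer checks.
def no_empty_answer(text: str) -> Optional[str]:
    return "empty response" if not text.strip() else None


def no_unsupported_certainty(text: str) -> Optional[str]:
    return "unsupported certainty claim" if "guaranteed" in text.lower() else None


def toy_model(prompt: str) -> str:
    # Stand-in for an existing AI model.
    return f"Returns are guaranteed for: {prompt}"


checked = wrap_model(toy_model, [no_empty_answer, no_unsupported_certainty])
result = checked("index funds")
print(result.passed, result.violations)
# prints: False ['unsupported certainty claim']
```

Keeping the flagged text together with its list of violations mirrors the article's point that the platform documents problems instead of only suppressing them; a stricter deployment could withhold `text` whenever `passed` is false.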

Optimistic Outlook

ASCERTAIN's approach could become a standard for AI governance, leading to more reliable and trustworthy AI applications. Its enforcement mechanisms could encourage AI developers to prioritize accountability and transparency in their models.

Pessimistic Outlook

The added validation layer could add latency to AI output and increase development costs, potentially hindering innovation. ASCERTAIN's effectiveness also depends on how robust its validation system is and how well it adapts to evolving AI models.
