AI Agent Guardrails: Pre-LLM and Post-LLM Strategies for Reliability

Sonic Intelligence

The Gist

Implementing real-time guardrails before and after LLM interaction is crucial for AI agent reliability and safety.

Explain Like I'm Five

"Imagine two security guards for your smart computer helper. One guard checks what you tell it before it thinks, making sure you don't accidentally share secrets. The other guard checks what the helper says before it tells you, making sure it's not making things up or saying something mean. Together they keep your helper safe and reliable."

Deep Intelligence Analysis

Whereas pre-LLM guardrails screen user input before it reaches the model, post-LLM guardrails act as a final quality-assurance layer, scrutinizing the model's output before it reaches the end user or triggers an action. These mechanisms detect hallucinations, check claims against the provided context, identify toxic content, and validate tool selections and output formats. Catching unsupported claims and feeding them back to the agent for self-correction turns guardrails from mere filters into part of a continuous improvement loop, improving agent reliability and reducing undesirable outputs.
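A post-LLM check of this kind might be sketched as follows. This is a deliberately naive illustration, not the method described in the source: it flags response sentences whose content words barely overlap with the provided context, then builds a feedback message for the agent. The function names, the stopword list, and the `min_overlap` threshold are all assumptions made for the example; production systems typically use a trained model or an LLM judge instead of word overlap.

```python
import re

# Small illustrative stopword list (an assumption, not a standard resource).
STOPWORDS = {"the", "a", "an", "is", "are", "was", "were", "of", "to", "in",
             "on", "and", "or", "for", "your", "you", "it", "at", "by", "with"}


def content_words(text: str) -> set[str]:
    """Lowercased alphanumeric tokens with stopwords removed."""
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if w not in STOPWORDS}


def unsupported_claims(response: str, context: str, min_overlap: float = 0.6) -> list[str]:
    """Return response sentences that share too few content words with the context."""
    context_vocab = content_words(context)
    flagged = []
    # Split on whitespace that follows sentence-ending punctuation.
    for sentence in re.split(r"(?<=[.!?])\s+", response.strip()):
        words = content_words(sentence)
        if not words:
            continue
        overlap = len(words & context_vocab) / len(words)
        if overlap < min_overlap:
            flagged.append(sentence)
    return flagged


def build_feedback(flagged: list[str]) -> str:
    """Turn flagged sentences into a correction request for the agent."""
    bullets = "\n".join(f"- {s}" for s in flagged)
    return ("The following statements are not supported by the provided context. "
            "Remove or correct them:\n" + bullets)
```

Word overlap is cheap and transparent but misses paraphrases and can pass fluent fabrications that reuse context vocabulary, so it is best read as a placeholder for whatever detector a real deployment uses.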

The strategic implication of this dual-layer guardrail approach is profound: it enables enterprises to deploy AI agents with greater confidence, balancing innovation with stringent safety and compliance requirements. As AI agents become more autonomous and integrated into complex workflows, the sophistication of these real-time interception and feedback mechanisms will be paramount. The shift from reactive monitoring to proactive, self-correcting systems is not just a technical best practice but a fundamental requirement for scaling AI agent deployments responsibly across industries where trust and accuracy are non-negotiable.

Visual Intelligence

    flowchart LR
    A["User Input"] --> B{"Pre-LLM Check"};
    B -- "Clean Data" --> C["LLM Process"];
    C -- "LLM Response" --> D{"Post-LLM Check"};
    D -- "Safe Output" --> E["User Output"];
    D -- "Issue Detected" --> C;

Auto-generated diagram · AI-interpreted flow
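The control flow in the diagram could be wired up roughly as below. This is a minimal sketch under stated assumptions: `run_guarded`, its parameters, and `FALLBACK_MESSAGE` are hypothetical names, the three stages are passed in as plain callables, and the retry cap is an addition the diagram does not show (without one, the "Issue Detected" edge could loop indefinitely).

```python
from typing import Callable, Optional

# Hypothetical message returned when no safe response is produced in time.
FALLBACK_MESSAGE = "Sorry, I can't provide a reliable answer to that right now."


def run_guarded(
    user_input: str,
    pre_check: Callable[[str], str],             # returns cleaned input
    llm: Callable[[str], str],                   # returns the model response
    post_check: Callable[[str], Optional[str]],  # returns an issue, or None if safe
    max_retries: int = 2,                        # assumption: cap on self-correction rounds
) -> str:
    """Pre-LLM check -> LLM -> post-LLM check, feeding issues back to the LLM."""
    clean = pre_check(user_input)
    prompt = clean
    for _ in range(max_retries + 1):
        response = llm(prompt)
        issue = post_check(response)
        if issue is None:
            return response  # "Safe Output" edge
        # "Issue Detected" edge: ask the model to revise with the issue attached.
        prompt = (f"{clean}\n\nYour previous answer was rejected: {issue}. "
                  "Please revise it.")
    return FALLBACK_MESSAGE
```

Passing the stages in as callables keeps the loop independent of any particular model client or detector, which also makes it easy to exercise with stubs.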

Impact Assessment

Robust guardrails are essential for the responsible deployment of AI agents, ensuring compliance with data privacy regulations, mitigating risks like hallucinations or prompt injections, and building user trust in AI systems.

Read Full Story on Arthur

Key Details

- Guardrails intercept AI agent behavior in real time to prevent undesirable actions.
- Pre-LLM guardrails run before user input reaches the model, for PII redaction and prompt injection detection.
- Post-LLM guardrails run after the model's response, before it reaches the user, for hallucination and toxicity detection.
- An airline uses pre-LLM guardrails to redact PII from customer support conversations.
- Guardrails can also serve as a feedback mechanism, feeding flagged issues back to the LLM for self-correction.
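As a rough illustration of the pre-LLM side, the sketch below redacts two kinds of PII and flags obvious prompt-injection phrasing before a message would reach the model. The regular expressions, the phrase list, and the function names are assumptions chosen for the example; they are far from exhaustive, and the source does not say which detection techniques the airline or any vendor actually uses.

```python
import re

# Illustrative patterns only: real PII detection needs much broader coverage.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "PHONE": re.compile(r"\+?\d[\d\s().-]{7,}\d"),
}

# Hypothetical phrase heuristics for prompt injection.
INJECTION_PATTERNS = [
    re.compile(r"ignore (?:all |any )?(?:previous|prior) instructions", re.IGNORECASE),
    re.compile(r"reveal (?:your )?system prompt", re.IGNORECASE),
]


def redact_pii(text: str) -> str:
    """Replace each matched PII span with a bracketed label such as [EMAIL]."""
    for label, pattern in PII_PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


def looks_like_injection(text: str) -> bool:
    """True if the text matches any known injection phrase."""
    return any(p.search(text) for p in INJECTION_PATTERNS)


if __name__ == "__main__":
    message = "Reach me at jane.doe@example.com or +1 415 555 0100 about my booking."
    print(redact_pii(message))
```

Replacing PII with typed placeholders rather than deleting it keeps the sentence readable for the model while withholding the sensitive value.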

Optimistic Outlook

The widespread adoption of sophisticated pre-LLM and post-LLM guardrails will enable safer, more compliant, and trustworthy AI agent deployments across sensitive industries, accelerating enterprise AI adoption while minimizing operational risks.

Pessimistic Outlook

Inadequate or poorly implemented guardrails risk severe data breaches, reputational damage, and the deployment of unreliable or harmful AI agents, potentially eroding public trust and hindering the broader integration of AI into critical systems.

Generated Related Signals

Takt AI: Socially Intelligent Agent Learns Group Dynamics

Takt is a new AI designed to participate in group chats with social intelligence and dynamic interaction.

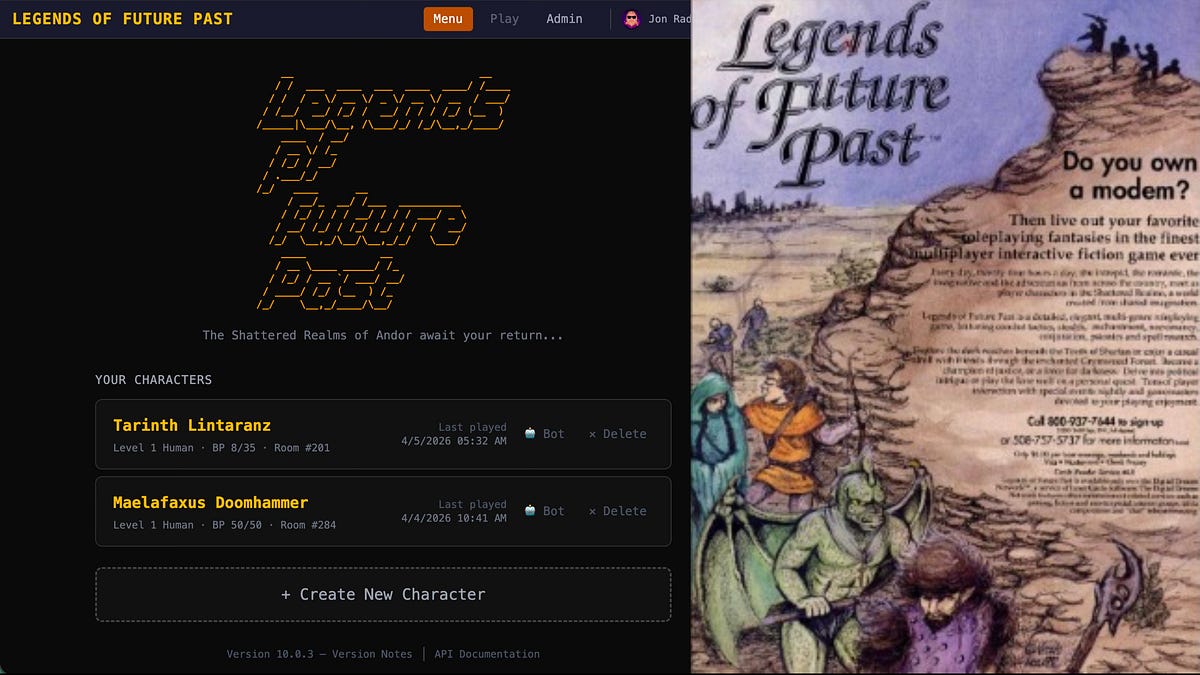

AI Agents Resurrect 1992 MUD Without Source Code

Agentic AI successfully rebuilt a 1992 multiplayer online game from artifacts.

Procurement.txt: An Open Standard for AI Agent Business Transactions

A new open standard simplifies AI agent transactions, boosting efficiency and reducing costs.

Specialized AI Agents Outperform General LLMs for CI/CD Diagnostics

Specialized AI agents, even with identical LLMs, achieve superior performance by optimizing context, tools, and data for...

Intel Partners with Elon Musk for Terafab AI Chip Factory in Austin

Intel will help design and build Elon Musk's Terafab AI chip factory in Texas.

OpenClaude Unifies LLM Coding Agents for Multi-Provider Workflow

OpenClaude provides a unified CLI for agentic coding across diverse LLM providers.