AI Agent Observability: Debugging Decision Loops, Not Just Services

Sonic Intelligence

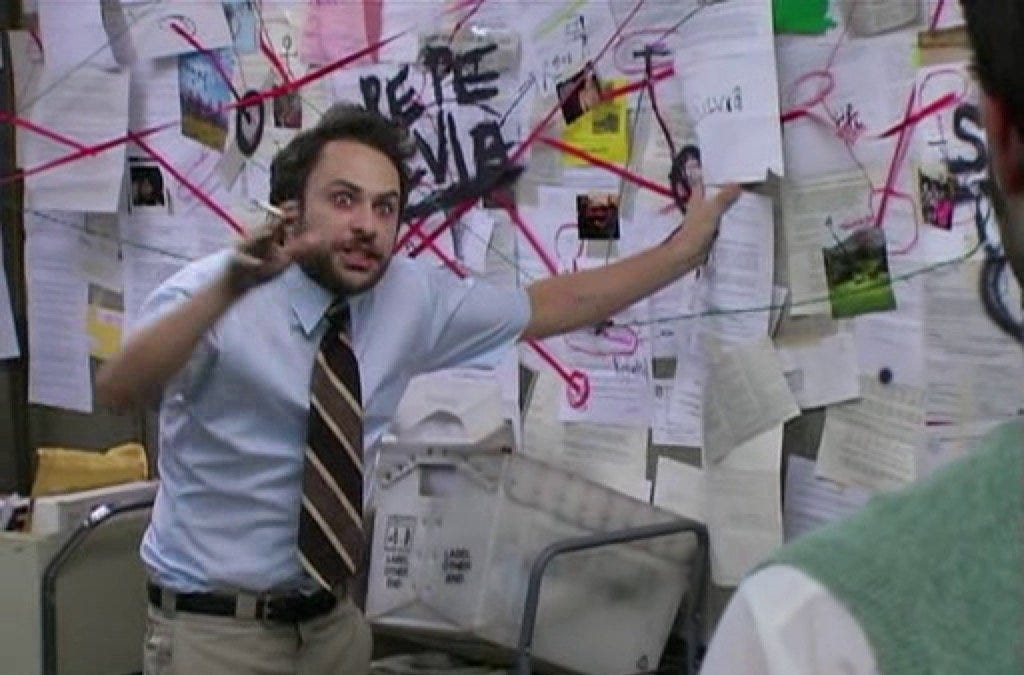

Traditional debugging methods are inadequate for AI agents due to their non-deterministic decision-making processes.

Explain Like I'm Five

"Imagine trying to figure out why a person made a mistake just by knowing which stores they entered and exited. AI agents are like that person: they make decisions, and we need tools that show *why* they made those choices, not just *what* they did."

Deep Intelligence Analysis

The article compares debugging microservices to tracking packages, where each trace represents a delivery route. Debugging an AI agent, by contrast, is more like following a person running errands: you need to understand their intentions and the decisions they made along the way. The missing piece is a shared understanding of the agent's goals, its beliefs, and the context in which each decision was made. Capturing that requires new telemetry standards and tools that record what shaped each decision, including prompt arguments, retrieved documents, and conversation history.
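As a rough illustration of the kind of telemetry described above, here is a minimal Python sketch that records one structured entry per agent decision. It assumes a record should capture the goal, the prompt arguments, the IDs of retrieved documents, how much conversation history was in context, and the action chosen. The `DecisionSpan` class and all of its field names are hypothetical; they are not taken from any published telemetry standard.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Any


@dataclass
class DecisionSpan:
    """Hypothetical record of one agent decision (illustrative, not a standard schema)."""
    step: int
    goal: str                      # what the agent is trying to achieve
    prompt_args: dict[str, Any]    # values used to fill the prompt template
    retrieved_docs: list[str]      # IDs of documents placed in the context window
    history_len: int               # number of prior conversation turns in context
    action: str                    # the tool call or answer the model chose
    rationale: str = ""            # model-stated reason, if one was requested
    attributes: dict[str, Any] = field(default_factory=dict)

    def to_json(self) -> str:
        # sort_keys gives stable output, which makes records easy to diff
        return json.dumps(asdict(self), sort_keys=True)


# Example: the first decision in a hypothetical refund workflow.
span = DecisionSpan(
    step=1,
    goal="refund order 1042",
    prompt_args={"customer_tier": "gold"},
    retrieved_docs=["refund-policy-v3"],
    history_len=4,
    action="call:lookup_order",
    rationale="Need the order status before issuing a refund.",
)
print(span.to_json())
```

Recording the inputs to each decision, rather than only the network calls that follow it, is what would let a developer reconstruct why the agent chose a particular action.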

Effective observability tools for AI agents are therefore essential for building reliable and trustworthy AI systems. By showing developers why an agent behaved as it did, such tools make it possible to diagnose and optimize that behavior, which should in turn speed the adoption of AI-driven applications across industries. Getting there requires a shift from traditional monitoring toward methods that capture how agents actually make decisions.

Impact Assessment

Effective AI agent observability is crucial for understanding agent behavior, identifying errors, and optimizing performance. Traditional monitoring systems cannot explain how an agent reached its decisions, so the root causes of failures are hard to identify and debugging becomes costly.

Key Details

- AI agents function as decision loops, unlike traditional services with predictable failure points.

- Debugging AI agents requires understanding the model's reasoning behind each decision.

- Current dashboards primarily track network-level data, failing to capture the nuances of agent behavior.

- Agent execution is closely tied to sensitive data like prompts and conversation history.
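The points above about decision loops and sensitive data can be combined in one small sketch: a loop in which a model picks an action at each step, with every step traced and sensitive values masked or hashed before they are stored. Everything here is an assumption for illustration: `run_agent`, `scripted_model`, and the `lookup_order` tool are hypothetical, the scripted function stands in for a real LLM call, and the email regex is a placeholder for a real redaction policy.

```python
import hashlib
import re
from typing import Callable

# Placeholder PII pattern (assumption): masks email-like strings only.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.]+")

Model = Callable[[str, list[str]], tuple[str, str]]


def redact(text: str) -> str:
    """Replace email addresses with a fixed token before storage."""
    return EMAIL.sub("[EMAIL]", text)


def fingerprint(text: str) -> str:
    """Short SHA-256 prefix so identical prompts can be matched without keeping them."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()[:12]


def run_agent(goal: str, choose: Model, tools: dict[str, Callable[[], str]],
              max_steps: int = 5) -> list[dict]:
    """Run a decide-act-observe loop and return one trace record per decision."""
    history: list[str] = [goal]
    trace: list[dict] = []
    for step in range(max_steps):
        prompt = "\n".join(history)
        action, reason = choose(goal, history)
        trace.append({
            "step": step,
            "prompt_hash": fingerprint(prompt),
            "prompt_preview": redact(prompt)[:200],
            "action": action,
            "reason": redact(reason),  # the model's stated rationale, masked
        })
        if action == "finish":
            break
        tool = tools.get(action)
        observation = tool() if tool else f"error: unknown tool {action!r}"
        history.append(f"{action} -> {observation}")
    return trace


def scripted_model(goal: str, history: list[str]) -> tuple[str, str]:
    """Hypothetical stand-in for an LLM: look the order up once, then finish."""
    if len(history) == 1:
        return "lookup_order", "Order status unknown; customer is bob@example.com"
    return "finish", "Order has shipped; refund requires a return label."


tools = {"lookup_order": lambda: "shipped"}
trace = run_agent("refund order 1042", scripted_model, tools)
for record in trace:
    print(record["step"], record["action"], record["reason"])
```

Hashing the full prompt lets repeated prompts be correlated across runs without storing their contents, while the redacted preview keeps enough context for a human to read the trace. Which fields to keep, hash, or drop is a policy decision that a real system would need to make explicitly.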

Optimistic Outlook

Emerging standards for agent telemetry could enable unified debugging and cost attribution, leading to more efficient and reliable AI agent systems. Better observability tools would help developers understand and optimize agent behavior, opening the way to new AI-driven applications.

Pessimistic Outlook

Without adequate observability tools, debugging AI agents will remain challenging and costly, hindering the widespread adoption of complex AI systems. The lack of shared meaning between agents and monitoring systems could lead to increased errors and unpredictable behavior, raising concerns about the reliability of AI agents in critical applications.
