AI Coding Assistants: A Developer's Guide to Effective Integration

Sonic Intelligence

Developers need structured guidance to use AI coding assistants effectively.

Explain Like I'm Five

"Imagine you have a super smart robot friend who helps you write computer instructions. This article tells you how to pick the best robot friend and talk to it so it helps you make awesome games and apps without making a mess."

Deep Intelligence Analysis

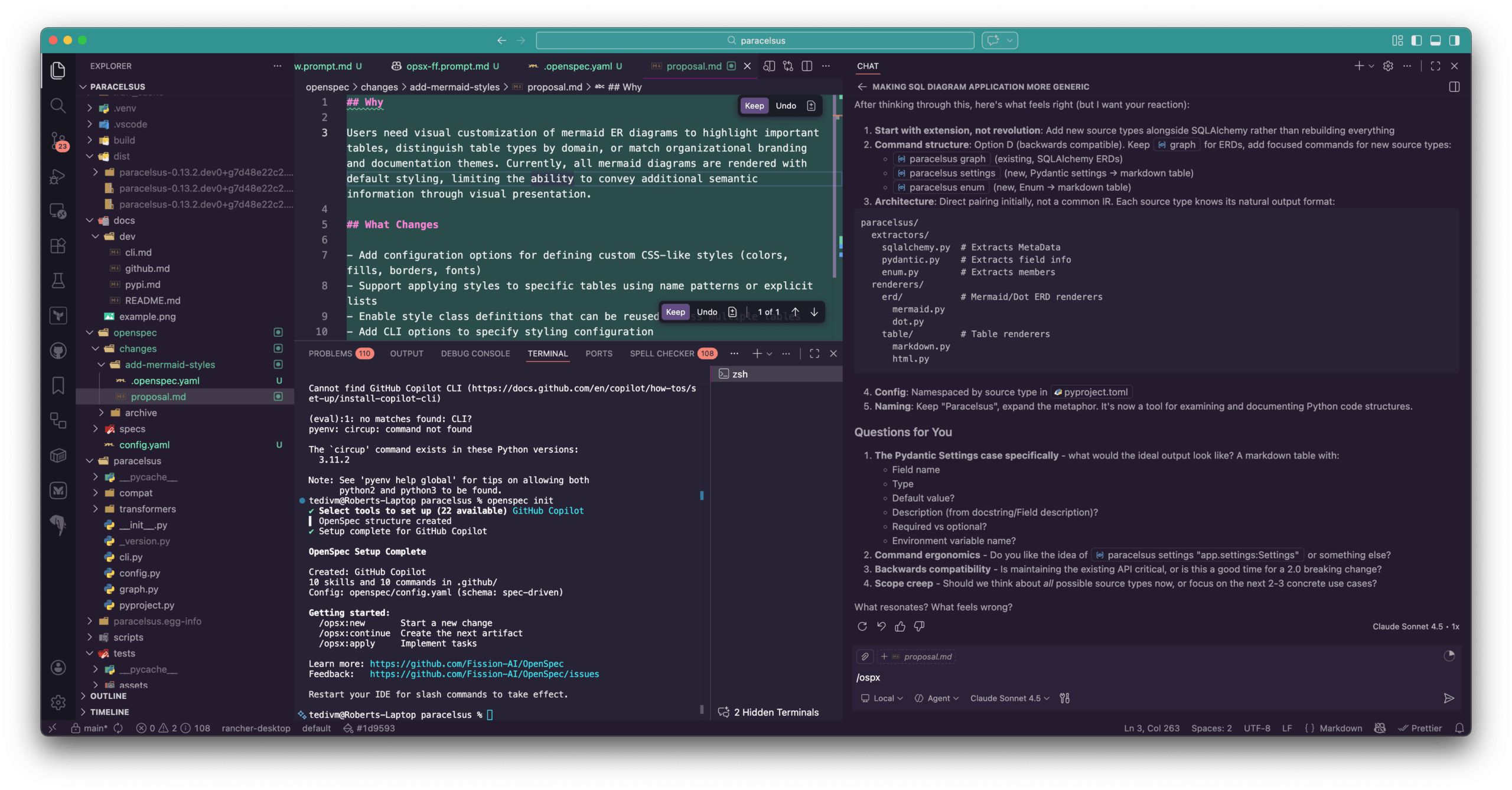

Key players in the AI coding assistant market, such as GitHub Copilot and Windsurf, offer either full integrated development environments (IDEs) or robust plugins for existing editors like VSCode. The author notes that features are converging rapidly across these systems, which suggests a highly competitive and innovative market, but presents the choice of underlying large language model (LLM) as the critical differentiator. Specifically, the author singles out Claude models as the strongest at core coding activities, including planning, testing, and documentation generation. The implication is that even when interfaces and feature sets look alike, the model powering the assistant can significantly affect developer output and code quality.

Developers interact with these assistants primarily through a chat interface, using natural-language prompts to direct the agent's actions. The conversational approach is intuitive, but getting good results still requires a clear understanding of prompt engineering and of what the agent can do. Developers can select specific models and tools and highlight relevant files to give the assistant context, tailoring its help to the task at hand. The simplicity of the basic interaction, however, belies the complexity of advanced usage, which points to a real learning curve for getting the most out of these tools.

The implications for the software industry are substantial. On one hand, widespread adoption of AI coding assistants promises major gains in developer productivity, shortening development cycles and potentially freeing developers for more complex, creative problem-solving. By handling mundane or repetitive tasks, generating boilerplate code, and suggesting improvements, AI can also make advanced coding practices more widely accessible.

On the other hand, without standardized training and best practices, adoption could become fragmented: the benefits would be unevenly distributed, and the quality of AI-generated code would vary widely. Organizations and educational institutions therefore need to develop curricula that teach developers both to direct these tools effectively and to critically evaluate their output, so that human oversight remains central even as more of the execution shifts to AI. Because the tools keep evolving, harnessing their potential responsibly will demand continuous learning and adaptation.

[Transparency Statement: This deep analysis was generated by an AI model to synthesize information from the provided source material, adhering to factual constraints and analytical objectives.]

Impact Assessment

AI coding tools are becoming integral to software development, yet many developers lack formal guidance on how to use them. This gap can lead to inefficient adoption or 'vibe coding' (accepting AI-generated code without fully reviewing or understanding it), which underscores the need for structured approaches that maximize both productivity and code quality. How effectively developers integrate these tools will shape the future of software engineering.

Key Details

- AI coding assistants are fundamentally altering software creation methods.

- Major AI coding systems offer full IDEs or robust plugins for existing platforms.

- Competing AI coding tools adopt each other's features quickly, so feature parity tends to arrive fast.

- The author identifies Claude models as the strongest for coding tasks, including planning and testing.

- Interaction primarily occurs via a chat interface using natural language prompts.

Optimistic Outlook

The rapid evolution and feature parity among AI coding assistants suggest a highly competitive market that drives continuous innovation. Developers who master these tools can significantly boost productivity, streamline complex tasks, and potentially raise code quality through AI-assisted planning and testing, making development more efficient overall.

Pessimistic Outlook

The lack of standardized guidance for AI coding tools risks widespread 'vibe coding,' leading to inconsistent code quality and potential over-reliance on AI without critical human oversight. The cost associated with superior models like Claude could also create an access barrier, potentially widening the gap between well-resourced teams and others.
