AI Boosts Code Generation Speed, But Widens 'Verification Gap' in Engineering

Sonic Intelligence

AI accelerates code generation, yet widens the critical 'verification gap'.

Explain Like I'm Five

"Imagine you have a super-fast robot that can write stories for you. It writes them super quickly! But sometimes, the robot writes something that isn't quite what you meant, or it has a tiny mistake. It's hard to check every single word the robot writes, so even though it's fast, it doesn't always mean your story is finished and perfect faster."

Deep Intelligence Analysis

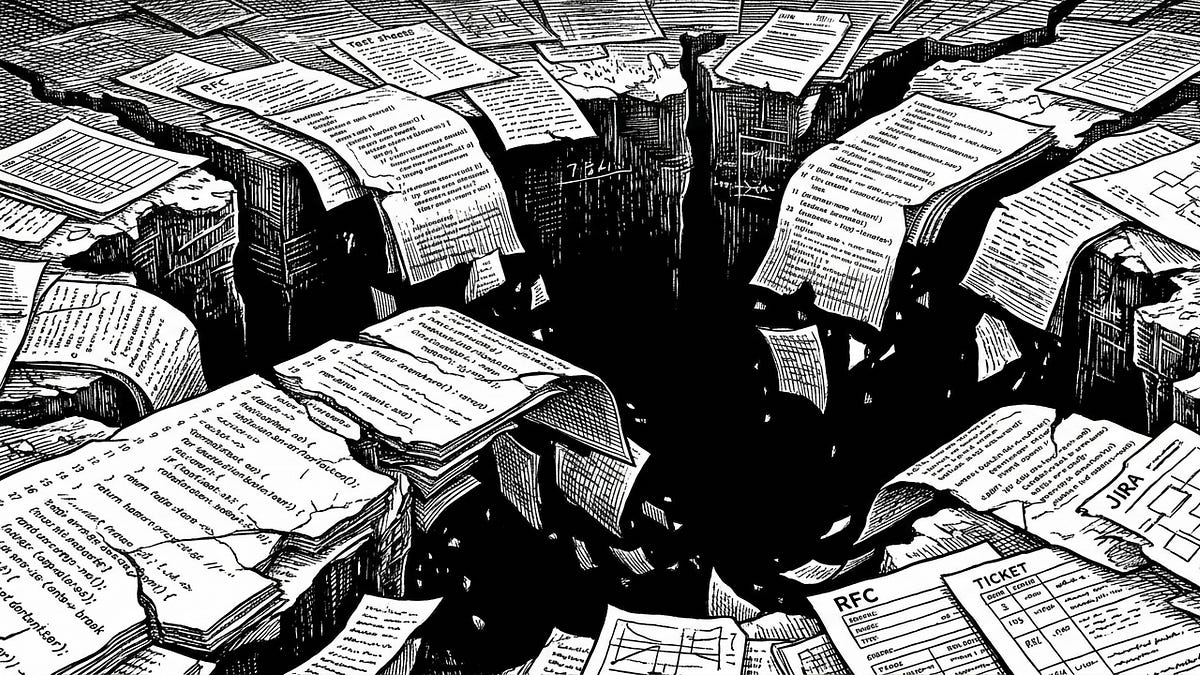

Historically, the human developer was the central locus of understanding, holding the full context from initial design to final verification, however imperfectly. With AI, this paradigm shifts: humans write prompts, models generate both code and tests, and humans often only skim the output. Decoupling intent from implementation adds a layer of opacity, making it harder to establish whether the AI-generated solution actually meets the original specification, especially in highly regulated environments where correctness and auditability are paramount. The risk is that mistakes are now produced faster than human reviewers can catch them, leading to costly errors in production.

Strategically, this necessitates a re-evaluation of engineering workflows and a significant investment in novel verification methodologies. Simply accelerating code output without enhancing validation capabilities is a false economy. Future-proof engineering organizations must focus on developing robust AI-assisted verification tools, establishing clearer human-in-the-loop validation points, and fostering a culture that prioritizes trust and correctness over raw speed. The challenge is not merely to generate more code, but to generate more *trustworthy* code, ensuring that AI serves as an enabler of quality, not just quantity.

Impact Assessment

The prevalent industry narrative that faster code generation equates to faster engineering is fundamentally flawed. AI's ability to rapidly produce code, while beneficial for individual productivity, introduces a critical 'verification gap' that lets mistakes scale faster than human judgment can catch them, particularly in complex or regulated systems where trust and correctness are paramount.

Key Details

- AI has dramatically increased the speed of code generation for individual engineers.

- This speed boost does not translate directly into faster overall engineering project delivery.

- The 'verification gap' is defined as the distance between intended and demonstrated behavior of software.

- AI exacerbates this gap by decoupling human intent from AI-generated implementation and tests.

- In complex and regulated environments, validation and verification constitute a larger portion of engineering work than initial implementation.
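
To make the 'verification gap' concrete, here is a minimal Python sketch, not taken from the source. Every name in it (`apply_discount`, `generated_tests_pass`, `satisfies_intent`, `verification_gap`) is hypothetical, and the discount scenario is an assumed example. It shows an implementation that passes the tests generated alongside it while still violating the behavior a human intended.

```python
from itertools import product


def apply_discount(price_cents: int, percent: int) -> int:
    # Hypothetical "generated" implementation: plausible, but it never
    # validates that percent lies between 0 and 100.
    return price_cents - price_cents * percent // 100


def generated_tests_pass() -> bool:
    # Hypothetical "generated" tests: they only exercise the happy path,
    # so they demonstrate less than the author intended.
    return apply_discount(1000, 10) == 900 and apply_discount(500, 0) == 500


def satisfies_intent(price_cents: int, percent: int) -> bool:
    # Assumed human intent: the discounted price always stays within
    # [0, price]. Rejecting bad input with ValueError also counts as fine.
    try:
        result = apply_discount(price_cents, percent)
    except ValueError:
        return True
    return 0 <= result <= price_cents


def verification_gap(prices, percents):
    # Inputs where demonstrated behavior departs from intended behavior.
    return [(p, d) for p, d in product(prices, percents)
            if not satisfies_intent(p, d)]


gap = verification_gap(prices=[0, 999, 1000], percents=[-10, 0, 50, 100, 150])
print("generated tests pass:", generated_tests_pass())
print("inputs violating intent:", gap)
```

In this sketch the generated tests pass, yet the intent check still flags out-of-range percentages. The gap only becomes visible when the check is derived from the stated intent rather than from the implementation itself.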

Optimistic Outlook

AI's capacity to reduce 'noise' and interruption-based work can significantly improve engineer satisfaction and reduce context switching. By offloading mundane tasks, AI allows human engineers to focus on higher-level design, architectural integrity, and the crucial verification processes, ultimately leading to more robust and well-understood systems.

Pessimistic Outlook

Unchecked reliance on AI for code generation without commensurate investment in verification processes risks deploying systems with subtle, AI-introduced flaws that are difficult to trace. This could lead to increased production incidents, higher maintenance costs, and a fundamental erosion of trust in AI-assisted development, especially in safety-critical applications.
