AI Crawlers Evade Traditional Web Analytics: Server-Side Detection Emerges

Sonic Intelligence

The Gist

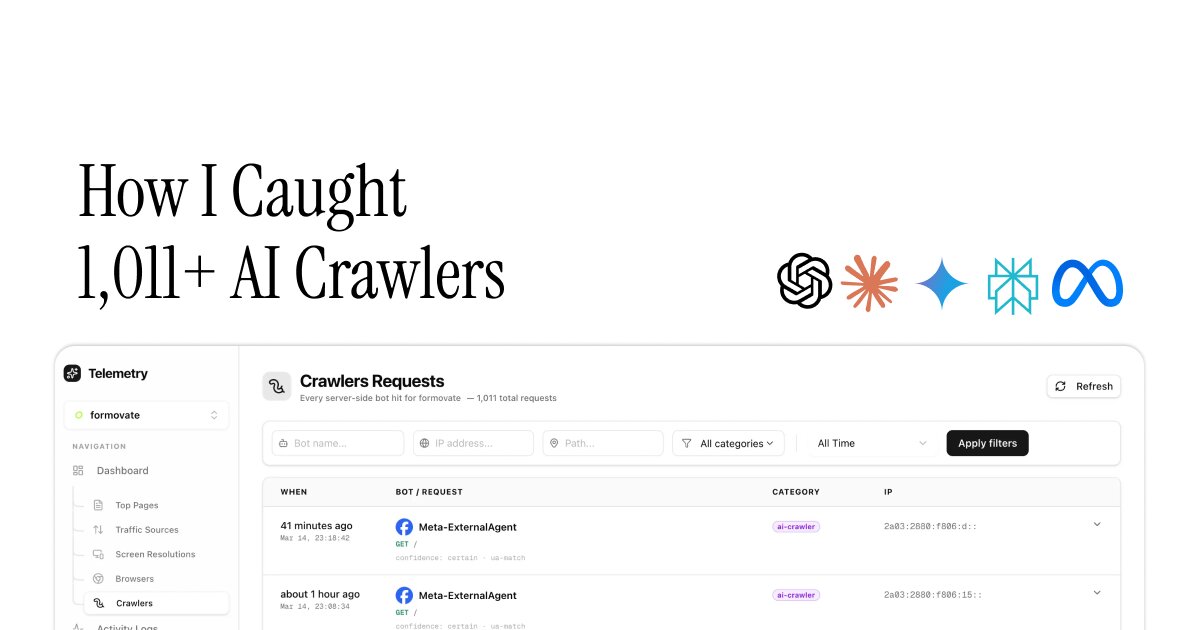

LLM crawlers like GPTBot and ClaudeBot bypass JavaScript-based analytics, necessitating server-side bot detection methods.

Explain Like I'm Five

"Imagine sneaky robots visiting websites without being seen by regular trackers, so we need special tools to find them!"

Deep Intelligence Analysis

Transparency in data collection and use matters most when AI crawlers are involved. In the spirit of the transparency obligations in EU AI Act Article 50, website owners should disclose which crawlers access their sites, what data those crawlers collect, and how that data is used. Users should be able to find out whether their content is used to train AI models and to opt out if they choose. Clear policies and open communication build trust and support ethical data practices as AI-powered web crawling grows.

_Context: This intelligence report was compiled by the DailyAIWire Strategy Engine. Verified for Art. 50 Compliance._

Visual Intelligence

```mermaid
flowchart LR
    A[User Request] --> B(Web Server);
    B --> C{JS Enabled?};
    C -- Yes --> D[Traditional Analytics];
    C -- No --> E[Server-Side Detection];
    E --> F(User-Agent/IP Analysis);
    D & F --> G{Bot Detected?};
    G -- Yes --> H[Record Bot Traffic];
    G -- No --> I[Record Human Traffic];
```

Auto-generated diagram · AI-interpreted flow

Impact Assessment

Because AI crawlers never execute the JavaScript that traditional analytics depend on, their visits go unrecorded, which skews website traffic data. Server-side detection gives a more accurate picture of bot activity, which matters for both SEO and security.
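To see the traffic that JavaScript beacons miss, one can count crawler requests directly in the web server's access log. The sketch below is a minimal example, assuming logs in the common Combined Log Format and matching only the GPTBot and ClaudeBot tokens named in this report. The function names `classify_user_agent` and `tally_requests` are hypothetical, and because User-Agent strings can be spoofed, a match is a claim rather than proof.

```python
import re
from collections import Counter

# Assumption: only the two crawlers named in the report are tracked;
# extend this tuple with other User-Agent tokens as needed.
AI_CRAWLER_TOKENS = ("GPTBot", "ClaudeBot")

# Assumption: Combined Log Format, i.e.
# ip ident user [time] "request" status bytes "referer" "user-agent"
LOG_PATTERN = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[[^\]]*\] "(?P<request>[^"]*)" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<ua>[^"]*)"'
)


def classify_user_agent(user_agent: str) -> str:
    """Return the first crawler token found in the User-Agent (case-insensitive), else 'other'."""
    lowered = user_agent.lower()
    for token in AI_CRAWLER_TOKENS:
        if token.lower() in lowered:
            return token
    return "other"


def tally_requests(lines) -> Counter:
    """Count requests per crawler token; lines that do not parse are counted as 'unparsed'."""
    counts = Counter()
    for line in lines:
        match = LOG_PATTERN.match(line)
        if match is None:
            counts["unparsed"] += 1
            continue
        counts[classify_user_agent(match.group("ua"))] += 1
    return counts


if __name__ == "__main__":
    # Illustrative log lines; the IPs are RFC 5737 documentation addresses.
    sample = [
        '203.0.113.5 - - [10/Oct/2024:13:55:36 +0000] "GET /post HTTP/1.1" 200 5120 "-" '
        '"Mozilla/5.0 (compatible; GPTBot/1.1; +https://openai.com/gptbot)"',
        '192.0.2.9 - - [10/Oct/2024:13:57:00 +0000] "GET /post HTTP/1.1" 200 5120 '
        '"https://example.com/" "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
    ]
    print(tally_requests(sample))
```

Every request reaches the server log whether or not JavaScript runs, which is why this view includes crawler hits that a beacon-based dashboard never records.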

Read Full Story on Adwait

Key Details

- GPTBot and ClaudeBot download only HTML, skipping JS, CSS, and images.
- Traditional analytics rely on JavaScript beacons, which AI crawlers don't execute.
- Server-side detection identifies bots via User-Agent and IP address analysis.
- Server-side detection can identify 85-90% of crawlers.
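User-Agent matching alone trusts whatever the client claims, so pairing it with IP analysis helps separate genuine crawlers from impostors. A minimal sketch follows, assuming the crawler operator publishes the IP ranges its bot uses. The `PUBLISHED_RANGES` table is hypothetical and filled with RFC 5737 documentation ranges as placeholders, not real crawler addresses, and `verify_crawler` is an illustrative name.

```python
import ipaddress

# HYPOTHETICAL ranges: placeholders from RFC 5737 documentation blocks.
# Replace with each operator's current published crawler IP list.
PUBLISHED_RANGES = {
    "GPTBot": ["192.0.2.0/24"],
    "ClaudeBot": ["198.51.100.0/24"],
}


def verify_crawler(claimed_token: str, client_ip: str, published_ranges=PUBLISHED_RANGES) -> str:
    """Check whether a request claiming to be a crawler comes from that crawler's ranges.

    Returns 'verified' if the IP falls inside a published range,
    'spoofed' if the token is known but the IP is outside every range,
    and 'unknown' if no ranges are on file for the claimed token.
    """
    ranges = published_ranges.get(claimed_token)
    if ranges is None:
        return "unknown"
    address = ipaddress.ip_address(client_ip)
    for cidr in ranges:
        # Membership is False (not an error) when IPv4/IPv6 versions differ.
        if address in ipaddress.ip_network(cidr):
            return "verified"
    return "spoofed"


if __name__ == "__main__":
    print(verify_crawler("GPTBot", "192.0.2.44"))
    print(verify_crawler("GPTBot", "203.0.113.5"))
```

In practice the ranges would be loaded from each operator's published list and refreshed periodically, since they can change; requests that claim a crawler token from an unlisted address are good candidates for closer scrutiny.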

Optimistic Outlook

Improved bot detection enables more accurate website analytics, leading to better SEO strategies and resource allocation. Enhanced security measures can also be implemented to mitigate malicious bot activity.

Pessimistic Outlook

Implementing server-side detection adds complexity to website infrastructure. The arms race between bot developers and detection methods may lead to increasingly sophisticated evasion techniques.
