AI Futures Model Predicts 3-Year Delay for Full Coding Automation Amid R&D Rethink

Sonic Intelligence

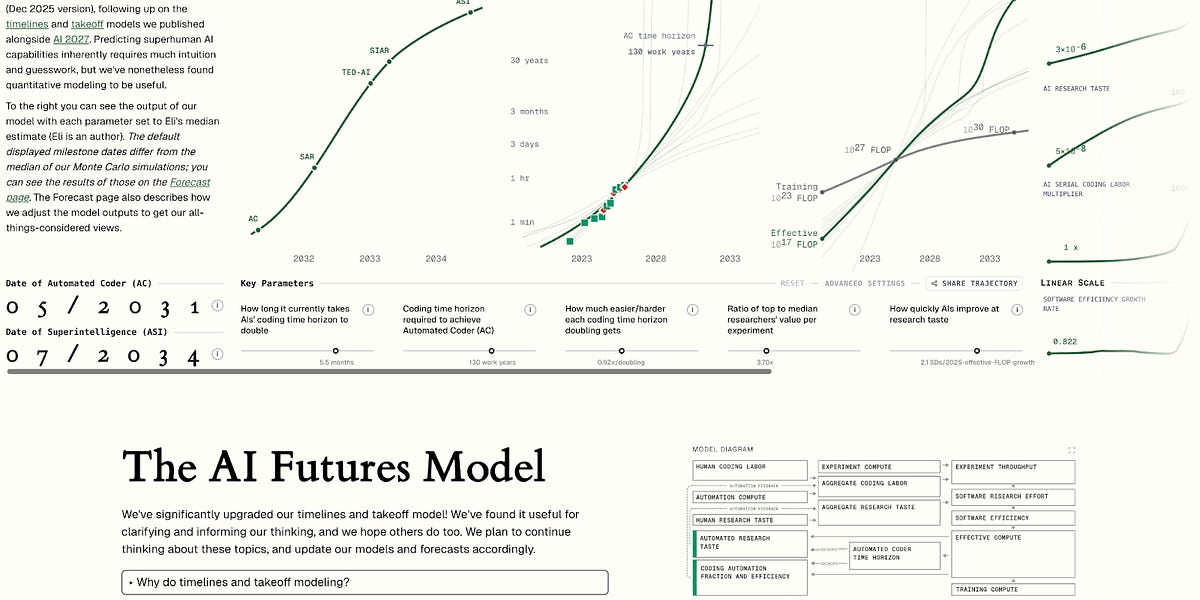

An updated 'AI Futures Model' predicts that full coding automation will arrive about three years later than previous forecasts suggested, primarily because of a less bullish outlook on how much AI will speed up R&D before full automation is reached.

Explain Like I'm Five

"Imagine we're trying to guess when a super-smart robot will be able to write all computer code by itself, and also when it will become smarter than all humans. Our new guess says it might take about 3 years longer for the coding robot than we first thought, because we realized it won't learn quite as fast before it can do everything. It's like guessing when a flower will bloom, and then updating your guess when you see how fast it's actually growing."

Deep Intelligence Analysis

The model's developers emphasize the inherent difficulty of predicting the future of AI, acknowledging that while the model incorporates critical dynamics, it cannot account for every factor. Many of its parameter values rest on intuitive guesses rather than on empirical data alone. The developers are open about this and provide an interactive website where users can adjust parameters according to their own judgment. This approach highlights the lack of consensus and the speculative elements inherent in AGI forecasting. Improved modeling of AI R&D automation, rather than new empirical evidence, was the main driver of the 2-4 year shift in the median for full coding automation. This suggests that refinements in theoretical understanding and modeling technique can sometimes outweigh new observational data in shaping expectations.
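To make the idea of adjustable, guess-based parameters concrete, here is a minimal toy sketch. It is not the AI Futures Model or any part of it: the function name `median_years_to_milestone`, the lognormal distribution of remaining work, and every number below are hypothetical placeholders chosen only for illustration. It shows how a less bullish assumption about R&D speedup pushes the median forecast later, even with no new data.

```python
import random
import statistics


def median_years_to_milestone(speedup, n_samples=10_000, seed=0):
    """Toy Monte Carlo: median years until a milestone for a given R&D speedup.

    Every number here is a hypothetical placeholder, not a value taken
    from the AI Futures Model.
    """
    rng = random.Random(seed)
    years = []
    for _ in range(n_samples):
        # Remaining work, in "years at today's pace", drawn from a guessed lognormal.
        work = rng.lognormvariate(2.0, 0.5)
        # A higher average speedup multiplier shortens the calendar time needed.
        years.append(work / speedup)
    return statistics.median(years)


# Same sampled uncertainty, two different guesses for the speedup parameter.
bullish = median_years_to_milestone(speedup=2.0)
cautious = median_years_to_milestone(speedup=1.5)
print(f"bullish: {bullish:.1f} years, cautious: {cautious:.1f} years")
```

Because both runs share the same seed, the only thing that changes between them is the speedup guess, which mirrors the article's point that a modeling revision alone can move the median.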

The uncertainty surrounding AI timelines is further evidenced by the wide divergence in expert opinions. A 2023 survey of AI academics, for instance, yielded a median AGI prediction of either 2047 or 2116, depending on the definition of AGI used. In contrast, aggregated forecasts from platforms like Metaculus and Manifold markets suggest a 50% probability of AGI by 2030. Such vast discrepancies underscore how immature AGI forecasting still is and the need for more robust, transparent methodologies. The 'AI Futures Model' aims to contribute to this by offering a structured, explicit framework for weighing the relevant considerations and arguments, moving away from implicit, 'in-the-head' assessments.

Ultimately, the goal of such modeling is not to provide an infallible crystal ball but to enhance preparedness and understanding. By attempting to predict future trends and build models of underlying dynamics, stakeholders can gain a better sense of probabilities, allowing for more informed decision-making and better integration of future empirical data into existing forecasts. This updated model serves as a critical recalibration for anyone involved in AI strategy, investment, or policy, signaling that while progress is undeniable, the path to advanced AI capabilities may be more protracted than previously thought, offering potentially more time for societal adaptation and responsible development.

Impact Assessment

Revised AI timelines for critical milestones like automated coding and superintelligence are crucial for strategic planning across industries and governments. This adjustment highlights the inherent uncertainty in AI development, urging caution in projections and adaptive policy-making.

Key Details

- AI Futures Model (Dec 2025 Update)
- Previous model: AI 2027 forecast (published Apr 2025)
- New model predicts ~3 years longer timelines to full coding automation
- Median for full coding automation shifted 2-4 years longer
- 2023 survey of AI academics: AGI median of 2047 or 2116 (depending on definition)

Optimistic Outlook

More realistic timelines allow for better preparation, enabling society to develop robust ethical frameworks, safety protocols, and economic strategies to manage AI's profound impact. A slower acceleration could prevent premature deployment of advanced AI systems, fostering more responsible innovation.

Pessimistic Outlook

A longer timeline might reduce urgency for critical safety and alignment research, potentially leaving humanity unprepared if AI development unexpectedly accelerates. Discrepancies in expert forecasts could lead to complacency or misallocation of resources, delaying essential preparatory actions.
