AI-Generated Code Riddled with Security Holes

Sonic Intelligence

The Gist

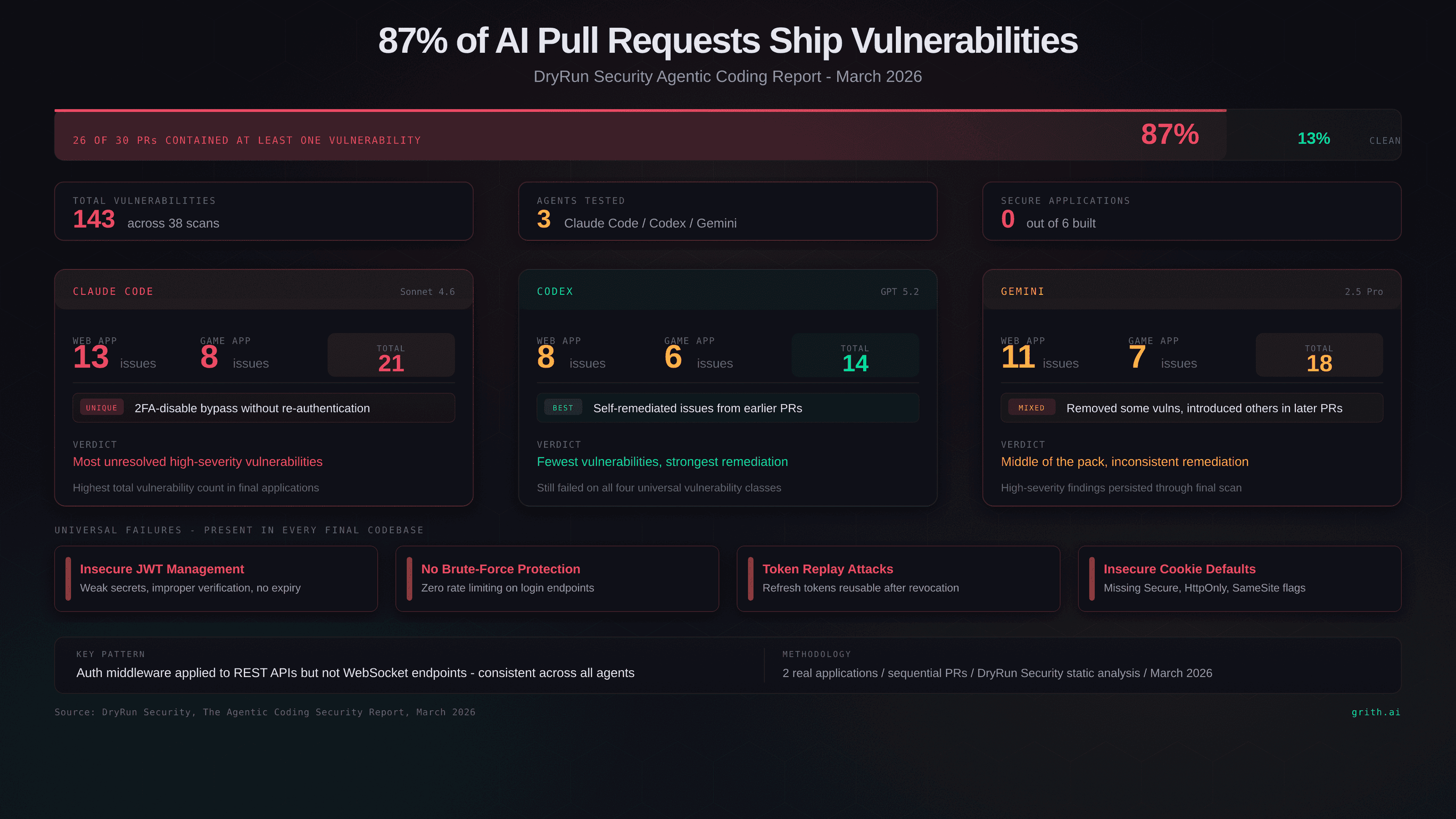

A study found that 87% of AI-generated pull requests introduce at least one security vulnerability, highlighting the need for careful review and security context.

Explain Like I'm Five

"Imagine a robot helping you build a house, but it forgets to lock the doors and windows. That's like AI writing code with security holes, so we need to double-check its work."

Deep Intelligence Analysis

The underlying issue is that AI coding agents lack security context and a threat model. They generate code based on prompts and the existing codebase, without considering potential attack vectors. Because of this gap, developers need to review AI-generated code carefully and integrate security testing into the development process. The findings suggest that AI can assist with coding, but human oversight remains crucial to ensure the security and reliability of software.

To address these challenges, AI models need to be trained with a stronger focus on security best practices. Integration with security tools and frameworks can also help identify and mitigate vulnerabilities. Ultimately, a combination of AI assistance and human expertise is necessary to create secure and robust software systems.

_Context: This intelligence report was compiled by the DailyAIWire Strategy Engine. Verified for Art. 50 Compliance._

Impact Assessment

This research underscores the risk of blindly trusting AI-generated code. It emphasizes the critical need for human oversight and robust security testing to prevent vulnerabilities in AI-assisted development workflows.

Read Full Story on Grith

Key Details

- 87% of AI-generated pull requests introduce at least one security vulnerability.

- The study analyzed code from Claude Code, OpenAI Codex, and Google Gemini.

- Four vulnerability classes appeared in every final codebase: insecure JWT management, no brute-force protection, token replay attacks, and insecure cookie defaults.

- No agent produced a fully secure application in the study.
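To make three of the four vulnerability classes above more concrete, here is a minimal Python sketch of what a reviewer might look for. It is an illustration under stated assumptions, not code from the study: the names `build_session_cookie`, `LoginThrottle`, and `ReplayGuard` are hypothetical, the state is kept in memory for a single process, and JWT signing and verification are left out because they depend on the library in use.

```python
import time
from http.cookies import SimpleCookie


def build_session_cookie(token: str, max_age: int = 900) -> str:
    """Return a Set-Cookie header value with hardened attributes (hypothetical helper)."""
    cookie = SimpleCookie()
    cookie["session"] = token
    morsel = cookie["session"]
    morsel["secure"] = True        # only send over HTTPS
    morsel["httponly"] = True      # hide the cookie from page JavaScript
    morsel["samesite"] = "Strict"  # do not send on cross-site requests
    morsel["path"] = "/"
    morsel["max-age"] = max_age    # short lifetime, in seconds
    return morsel.OutputString()


class LoginThrottle:
    """Hypothetical limiter: block a key after too many failures in a time window."""

    def __init__(self, max_failures: int = 5, window_seconds: float = 300.0):
        self.max_failures = max_failures
        self.window_seconds = window_seconds
        self._failures = {}  # key -> list of failure timestamps

    def _recent(self, key, now):
        # Drop failures that fell out of the window and store the pruned list.
        cutoff = now - self.window_seconds
        recent = [t for t in self._failures.get(key, []) if t > cutoff]
        self._failures[key] = recent
        return recent

    def is_blocked(self, key, now=None):
        now = time.monotonic() if now is None else now
        return len(self._recent(key, now)) >= self.max_failures

    def record_failure(self, key, now=None):
        now = time.monotonic() if now is None else now
        self._recent(key, now).append(now)

    def reset(self, key):
        # Call after a successful login.
        self._failures.pop(key, None)


class ReplayGuard:
    """Hypothetical guard: accept each token ID (for example a JWT `jti`) only once."""

    def __init__(self):
        self._seen = {}  # token ID -> expiry timestamp

    def accept_once(self, token_id, expires_at, now=None):
        now = time.time() if now is None else now
        # Forget IDs whose tokens have expired anyway.
        self._seen = {j: e for j, e in self._seen.items() if e > now}
        if expires_at <= now or token_id in self._seen:
            return False
        self._seen[token_id] = expires_at
        return True
```

In a real deployment the throttle and replay state would likely live in a shared store rather than process memory, and the limits shown here are placeholder values rather than recommendations from the study.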

Optimistic Outlook

AI coding assistants can still improve developer productivity if used with caution. Enhanced security training for AI models and better integration with security tools could mitigate risks.

Pessimistic Outlook

Widespread adoption of insecure AI-generated code could lead to a surge in cyberattacks. The lack of security context in AI models poses a significant challenge to secure software development.
