AI Memory Benchmarks Flawed: New Proposal Targets Real-World Agent Competence

Sonic Intelligence

The Gist

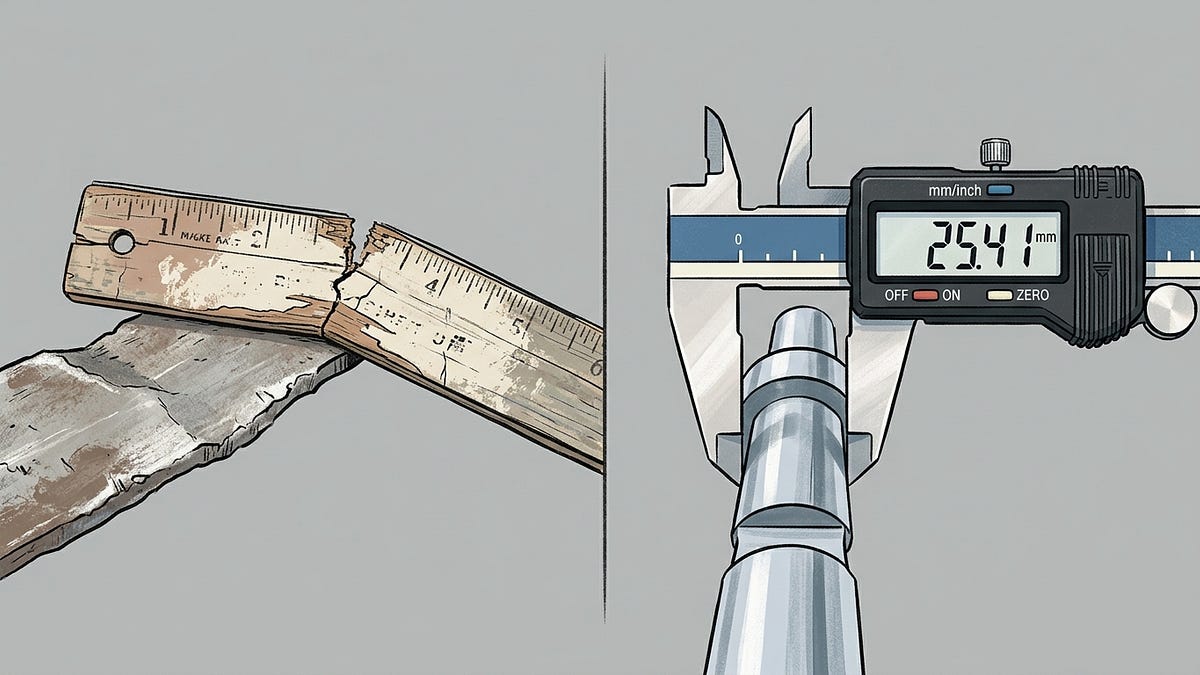

Current AI memory benchmarks are critically flawed, hindering agent development.

Explain Like I'm Five

"Imagine you're testing who's best at remembering things for a big test. But the test questions are sometimes wrong, and the teacher sometimes says wrong answers are right! This makes it hard to know who's really smart. Someone is saying we need a much better, fairer test so we can truly find out which AI is the best at remembering lots of stuff for a long time, like a helpful friend."

Deep Intelligence Analysis

An audit of existing memory benchmarks reveals significant flaws: LoCoMo's answer key has a 6.4% error rate, and its LLM judge accepts 63% of intentionally incorrect responses. Benchmarks like LongMemEval-S are effectively context window tests rather than memory assessments: the entire corpus fits within a single context window, so a system can score well without any robust retrieval mechanism. The Mem0/Zep dispute shows what happens without a standardized, end-to-end methodology: differing configurations and evaluation parameters produced accuracy figures ranging from 58.44% to 75.14%, claims that cannot be reconciled. Together these conditions reward 'context-stuffing' and superficial answer generation over genuine memory system innovation, which holds back the development of AI agents that can act as reliable, long-term colleagues.
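The 63% figure describes a false-acceptance rate: the share of deliberately wrong answers that a judge marks as correct. A minimal sketch of how such a rate could be measured follows. The `lenient_keyword_judge` below is a hypothetical stand-in that accepts any answer sharing a word with the reference; it is not LoCoMo's actual LLM judge, and the two cases are invented for illustration.

```python
from typing import Callable, Sequence, Tuple

# (question, reference_answer, deliberately_wrong_answer)
Case = Tuple[str, str, str]
Judge = Callable[[str, str, str], bool]


def false_acceptance_rate(judge: Judge, cases: Sequence[Case]) -> float:
    """Fraction of deliberately wrong answers that the judge marks as correct."""
    if not cases:
        raise ValueError("need at least one case")
    accepted = sum(
        1 for question, reference, wrong in cases
        if judge(question, reference, wrong)
    )
    return accepted / len(cases)


def lenient_keyword_judge(question: str, reference: str, candidate: str) -> bool:
    """Hypothetical judge: accepts any candidate sharing a word with the reference."""
    reference_words = set(reference.lower().split())
    candidate_words = set(candidate.lower().split())
    return bool(reference_words & candidate_words)


# Invented cases; every candidate answer is wrong on purpose.
cases = [
    ("Where did Ana move?", "Ana moved to Lisbon", "Ana moved to Porto"),
    ("What pet does Ben have?", "a grey cat", "two dogs"),
]

rate = false_acceptance_rate(lenient_keyword_judge, cases)
print(f"false acceptance rate: {rate:.0%}")  # prints "false acceptance rate: 50%"
```

A sound judge should score near 0% on a set like this. The first case shows how a topically adjacent but wrong answer slips past a lenient one.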

Moving forward, the proposal calls for a new, collaborative benchmark built on a corpus of 1-2 million tokens. Such a benchmark, designed to approximate a real-world knowledge base and to prescribe the evaluation process end to end, could establish the common ground needed for honest measurement. If implemented and widely adopted, it would give a clearer picture of current AI memory capabilities and steer research toward architectures that genuinely improve long-term agent competence, building trust and speeding the practical deployment of advanced AI systems. That shift is crucial for moving from benchmark-optimized models to truly intelligent, persistent AI assistants.
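Whether a benchmark exercises memory or only the context window comes down to a size comparison. The check below is a rough sketch of that comparison. The 200,000-token window and the 4,000-token reserve for the question and answer are assumed values for illustration, not figures from the proposal.

```python
def fits_in_context(corpus_tokens: int, window_tokens: int, reserved_tokens: int = 0) -> bool:
    """True if the whole corpus can be placed in one prompt, leaving room
    for `reserved_tokens` (question, instructions, answer)."""
    return corpus_tokens <= window_tokens - reserved_tokens


ASSUMED_WINDOW = 200_000   # hypothetical context window size
ASSUMED_RESERVE = 4_000    # hypothetical room for question and answer

# The proposal's target range for the corpus: 1-2 million tokens.
for corpus_tokens in (1_000_000, 2_000_000):
    stuffable = fits_in_context(corpus_tokens, ASSUMED_WINDOW, ASSUMED_RESERVE)
    print(f"{corpus_tokens:>9,} tokens -> fits in one window: {stuffable}")
```

Under these assumptions neither end of the target range fits in a single window, so a system would have to retrieve selectively rather than stuff the context.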

Visual Intelligence

flowchart LR

A[Current Benchmarks Flawed] --> B{Misleading Scores}

B --> C[No Common Methodology]

C --> D[Research Misdirected]

D --> E[Agents Lack Competence]

E --> F[New Benchmark Proposed]

F --> G[Collaborative Design]

G --> H[Accurate Evaluation]

Auto-generated diagram · AI-interpreted flow

Impact Assessment

The integrity of AI memory system evaluation is compromised by existing benchmarks, leading to misleading performance claims. This proposal highlights a critical need for standardized, end-to-end methodologies to accurately assess long-term AI agent capabilities, directly impacting the development of reliable and competent AI colleagues.

Read Full Story on Penfieldlabs

Key Details

- An audit of the LoCoMo benchmark found a 6.4% error rate in its answer key, and its LLM judge accepts 63% of intentionally wrong answers.

- LongMemEval-S is a context window test, not a true memory test: its corpus fits entirely within modern context windows.

- The Mem0/Zep benchmark dispute produced accuracy figures ranging from 58.44% to 75.14%, a gap attributed to configuration differences.

- The proposed benchmark targets a corpus of 1-2 million tokens, approximating real-world knowledge bases.

- The current 'best strategy' for a high LoCoMo score is context-stuffing and generating topically adjacent answers.
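For scale, the spread in the Mem0/Zep figures can be worked out directly. This short calculation uses only the two accuracy values quoted in the list above; the function name is illustrative.

```python
from typing import Tuple


def accuracy_gap(low_pct: float, high_pct: float) -> Tuple[float, float]:
    """Return the gap in percentage points and as a fraction of the lower figure."""
    points = high_pct - low_pct
    relative = points / low_pct
    return points, relative


points, relative = accuracy_gap(58.44, 75.14)
print(f"gap: {points:.2f} percentage points ({relative:.1%} relative to the lower figure)")
```

The gap is 16.70 percentage points, or roughly 28.6% of the lower figure, which is large enough to change any ranking that depends on these scores.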

Optimistic Outlook

A robust, collaborative benchmark initiative could significantly accelerate the development of truly capable AI memory systems. By establishing clear, reproducible evaluation standards, researchers can focus on genuine architectural improvements rather than benchmark exploitation, fostering rapid, verifiable progress in AI agent intelligence and reliability.

Pessimistic Outlook

Without broad industry adoption and strict adherence to new benchmark standards, the current landscape of flawed evaluations may persist. This could lead to continued misallocation of research efforts, inflated performance claims, and a general erosion of trust in reported AI capabilities, ultimately slowing the deployment of genuinely effective long-term AI agents.

