Anthropic's AI-Generated Code Exposed: Quality Questions Emerge

Sonic Intelligence

Leaked Claude Code source, from a team whose lead engineer says his contributions are now 100% AI-written, reveals significant code-quality and engineering-culture issues.

Explain Like I'm Five

"Imagine a company said a robot built 100% of their new toy. Then, when the toy broke, people looked inside and saw it was poorly put together, with parts glued in weird ways, even though the robot was supposed to be super smart. This article is about that happening with computer code from a big AI company."

Deep Intelligence Analysis

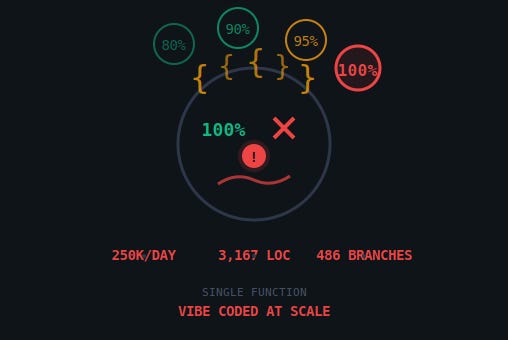

The leaked code, which includes 64,464 lines of core TypeScript, contained glaring examples of poor engineering practice. The most notable is a single function spanning 3,167 lines with 486 branch points, a clear violation of modularity principles. The code also relied on a basic regex for sentiment analysis (/\b(wtf|shit|fuck|horrible|awful|terrible)\b/i). For a company whose core product is an advanced language model, this suggests a profound disconnect between its internal tooling and its core product capabilities. Finally, a documented bug that burned 250,000 API calls daily shipped anyway, which indicates that speed or narrative was prioritized over robust quality assurance. Together, these details directly contradict the efficiency and sophistication implied by claims of 100% AI-generated code.
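
To show why keyword matching is a crude stand-in for sentiment analysis, here is a minimal TypeScript sketch that applies the regex quoted in the article to a few sample messages. Only the pattern comes from the article. The wrapper `isFlaggedNegative` and the sample strings are hypothetical, written for illustration, and do not reproduce how the leaked code actually uses the pattern.

```typescript
// The keyword pattern as quoted in the article.
const NEGATIVE_PATTERN = /\b(wtf|shit|fuck|horrible|awful|terrible)\b/i;

// Hypothetical wrapper (illustration only): flags a message as "negative"
// if any listed keyword appears as a whole word, ignoring case.
function isFlaggedNegative(message: string): boolean {
  return NEGATIVE_PATTERN.test(message);
}

// Sample inputs paired with what the regex actually returns for them.
const samples: Array<[string, boolean]> = [
  ["This is TERRIBLE", true],                   // case-insensitive match
  ["Not terrible at all, thanks!", true],       // negation ignored: false positive
  ["That was awfully helpful", false],          // "awfully" fails the trailing \b
  ["This output is useless and wrong", false],  // negative tone, but no listed keyword
];

for (const [text, expected] of samples) {
  const got = isFlaggedNegative(text);
  console.log(`${got === expected ? "ok" : "MISMATCH"}  ${JSON.stringify(text)} -> ${got}`);
}
```

Because the pattern has no `g` flag, `RegExp.prototype.test` keeps no `lastIndex` state between calls, so reusing the shared constant is safe. The negation and "awfully" cases show the trade-off: the regex is cheap and predictable, but it ignores context that a language model could take into account.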

This incident carries substantial forward-looking implications for the broader AI industry. It will likely intensify scrutiny on claims regarding AI's autonomous capabilities in software development, pushing for more transparent and verifiable metrics beyond mere line counts. Companies may be compelled to re-evaluate their internal engineering processes, emphasizing human oversight, rigorous code review, and robust testing protocols even when leveraging AI for code generation. Ultimately, this event could catalyze a necessary shift towards a more grounded and quality-focused approach to AI-assisted development, moving beyond aspirational percentages to deliver genuinely reliable and maintainable software.

[EU AI Act Art. 50 Compliant]

Impact Assessment

The public exposure of Anthropic's AI-generated code challenges the narrative of advanced AI producing high-quality, efficient software. It raises critical questions about engineering standards, transparency in AI development, and the true capabilities of large language models in complex code generation.

Key Details

- Anthropic's lead engineer Boris Cherny claimed 100% of his Claude Code contributions were AI-written by December 2025.

- A packaging error exposed 512,000 lines of Claude Code to the public.

- The exposed code included a single function spanning 3,167 lines with 486 branch points.

- Sentiment analysis was performed using basic regex /\b(wtf|shit|fuck|horrible|awful|terrible)\b/i.

- A documented bug that burned 250,000 API calls daily was shipped anyway.
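
The "486 branch points" figure is easiest to read through cyclomatic complexity, which for a single-entry, single-exit function equals the number of decision points plus one. The TypeScript sketch below approximates that count with a token regex. The function names and the sample snippet are hypothetical, and a regex scan is only a heuristic: it ignores strings, comments, and ternaries, unlike AST-based tools such as ESLint's `complexity` rule.

```typescript
// Heuristic sketch (assumption: a regex token scan, not an AST walk).
// Counts decision-point tokens; `else if` is counted once via its `if`,
// and `default` is not counted, matching the usual McCabe convention.
const DECISION_TOKENS = /\b(?:if|for|while|case|catch)\b|&&|\|\||\?\?/g;

function countDecisionPoints(source: string): number {
  // With a /g regex, match() returns every match, or null if there are none.
  return source.match(DECISION_TOKENS)?.length ?? 0;
}

// Cyclomatic complexity for a single-entry, single-exit function: decisions + 1.
function approxCyclomaticComplexity(source: string): number {
  return countDecisionPoints(source) + 1;
}

// Hypothetical sample function, held as a string so it can be scanned.
const example = `
function classify(n: number): string {
  if (n < 0 && n > -10) return "small negative";
  for (let i = 0; i < n; i++) {
    if (i % 2 === 0 || i % 3 === 0) continue;
  }
  switch (n) {
    case 0: return "zero";
    case 1: return "one";
  }
  return "other";
}`;

console.log(countDecisionPoints(example));        // 7
console.log(approxCyclomaticComplexity(example)); // 8
```

If the article's "branch points" are decision points in this sense, the 3,167-line function would score roughly 487, far above the upper bound of 10 that McCabe originally suggested for a single module.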

Optimistic Outlook

This incident could serve as a crucial wake-up call for the AI industry, prompting greater transparency in reporting AI-generated code metrics and fostering a more rigorous approach to code review and quality assurance for AI-developed software. It might accelerate the development of better AI-driven testing and validation tools.

Pessimistic Outlook

The revelations could significantly erode trust in claims about AI's ability to autonomously generate production-grade code, potentially slowing adoption of AI-assisted development tools. It also highlights a concerning trend of prioritizing hype over engineering discipline, which could lead to widespread technical debt and security vulnerabilities in AI-driven systems.