Anthropic's Diversified Compute Strategy Creates AI Moat

Sonic Intelligence

Anthropic's diversified compute architecture provides a significant cost and iteration advantage.

Explain Like I'm Five

"Imagine building a super-fast race car. Most companies buy all their parts from one very popular, expensive store. But Anthropic is smart; they're getting their special engine parts from different places, which makes their cars cheaper to build and faster to upgrade. This helps them stay ahead in the race."

Deep Intelligence Analysis

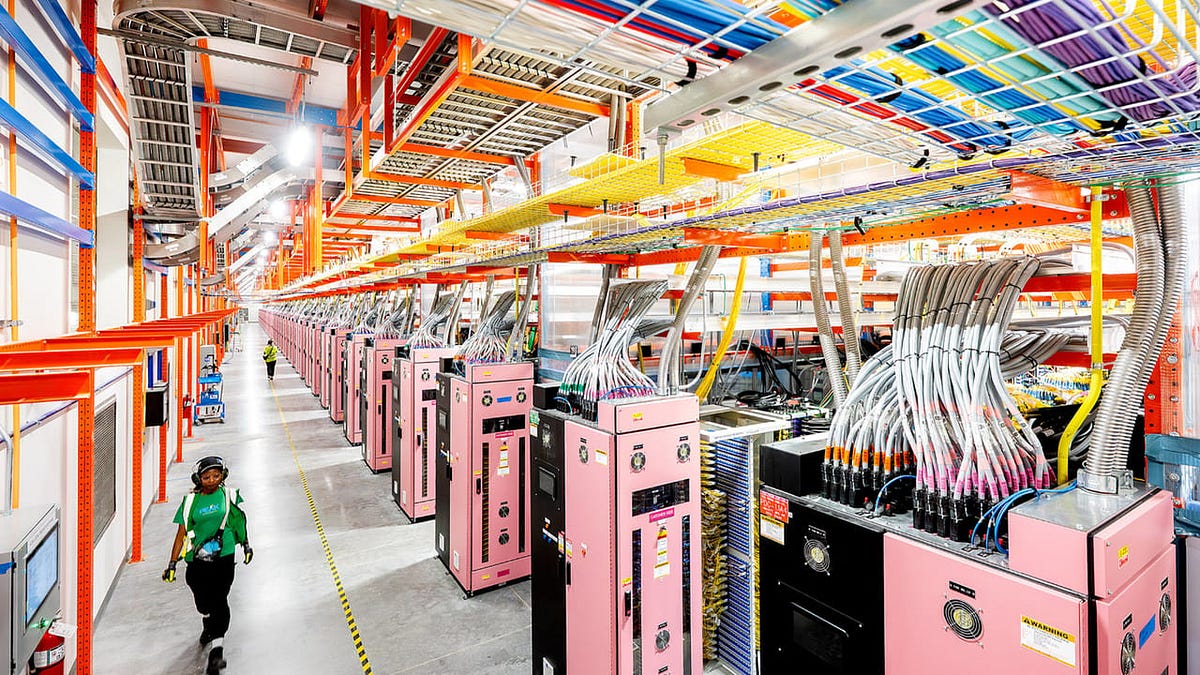

The core of this advantage lies in addressing the structural constraints of the AI compute market. Nvidia commands more than 90% of the discrete GPU market, which gives it substantial pricing power: hyperscaler on-demand H100 rates remain high even though spot prices have fallen. However, the true bottleneck extends beyond the GPU die itself to High Bandwidth Memory (HBM) supply and TSMC's CoWoS packaging capacity. These elements are critical for high-density AI clusters, and controlling their allocation is a key source of Nvidia's market leverage.

Anthropic's commitments to Google's TPUs and Amazon's Trainium chips are crucial because these hyperscalers have independently negotiated their own HBM supply chains. This effectively transfers HBM allocation risk away from Anthropic and gives it a more secure, diversified supply of essential components. This structural independence from a single dominant supplier like Nvidia offers Anthropic a compounding advantage: equivalent model quality delivered at a cost per token that may be 30–60% lower. That cost efficiency directly improves margins, allows for larger training budgets, and supports a faster pace of model development and refinement.
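To make the budget effect concrete: if cost per token falls by a fraction r, the same spend buys 1/(1 - r) times as many tokens. The short Python sketch below applies that relationship to the 30–60% range cited above. It is a simplification that assumes cost scales linearly with tokens and ignores fixed costs, contract terms, and utilization differences, so the multipliers are illustrative rather than a forecast; the function name `effective_multiplier` is hypothetical.

```python
def effective_multiplier(cost_reduction: float) -> float:
    """Return how many times more tokens a fixed budget buys when the
    cost per token falls by `cost_reduction` (a fraction, e.g. 0.3).

    Assumes cost is strictly proportional to tokens (no fixed costs).
    """
    if not 0.0 <= cost_reduction < 1.0:
        raise ValueError("cost_reduction must be in [0, 1)")
    return 1.0 / (1.0 - cost_reduction)


# Apply the sketch to the ends of the 30-60% range quoted in the article.
for r in (0.30, 0.60):
    print(f"{r:.0%} lower cost per token -> "
          f"{effective_multiplier(r):.2f}x tokens for the same budget")
```

Under these assumptions, a 30% reduction buys roughly 1.43x the tokens and a 60% reduction buys 2.5x, which is the sense in which a per-token saving can be reinvested as a larger training or serving budget.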

While compute advantage amplifies model advantage rather than replacing it, the economic implications are profound. In a field where compute is a structural cost input, Anthropic's silicon strategy provides a resilient foundation for long-term innovation and market competitiveness. This divergence in silicon procurement strategies highlights a growing strategic gap among frontier AI labs, underscoring that compute is far from a commodity in the race for advanced AI.

*EU AI Act Art. 50 Compliant: This analysis is based solely on the provided source material. No external data or prior knowledge was used in its generation. The content aims for factual accuracy and avoids speculative claims beyond the scope of the input.*

Visual Intelligence

graph LR
A["Nvidia Dominance (GPU, HBM, CoWoS)"] --> B{Anthropic Compute Strategy};
B -- Diversification --> C[AWS Trainium/Google TPU];
C -- HBM Access --> D[Improved Unit Economics];
D --> E[Faster Model Iteration];
E --> F[AI Moat];

Auto-generated diagram · AI-interpreted flow

Impact Assessment

Compute infrastructure is a fundamental cost driver and performance enabler for frontier AI. Anthropic's strategic diversification away from Nvidia's near-monopoly offers a structural advantage in unit economics, training budgets, and model iteration speed, potentially leading to sustained competitive differentiation.

Key Details

- Anthropic possesses the most diversified and cost-efficient compute architecture among frontier AI labs.

- OpenAI remains almost entirely dependent on Nvidia GPUs.

- Nvidia holds more than 90% of the discrete GPU market.

- H100 on-demand pricing peaked at $7–$8/hour and has since settled to $1.50–$3.00/hour on the spot market, but hyperscaler on-demand rates remain $6–$9/hour.

- High Bandwidth Memory (HBM) supply and TSMC’s CoWoS packaging capacity are critical bottlenecks, not just the accelerator die.

- Anthropic's TPU and Trainium commitments leverage Google's and Amazon's independently negotiated HBM supply chains.
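Hourly GPU rates only translate into cost per token once a throughput figure is fixed. The Python sketch below converts the H100 price ranges listed above into dollars per million tokens. The throughput constant `ASSUMED_TOKENS_PER_SECOND` and the helper `cost_per_million_tokens` are hypothetical placeholders, not figures or methods from the source, and each quoted rate is treated as the price of one GPU for one hour.

```python
# Hypothetical throughput per GPU in tokens per second. This is an
# illustrative assumption only; real throughput depends on model size,
# batch size, sequence length, and serving stack.
ASSUMED_TOKENS_PER_SECOND = 2_000


def cost_per_million_tokens(dollars_per_gpu_hour: float,
                            tokens_per_second: float = ASSUMED_TOKENS_PER_SECOND) -> float:
    """Convert an hourly GPU price into dollars per million tokens."""
    tokens_per_hour = tokens_per_second * 3600
    return dollars_per_gpu_hour / tokens_per_hour * 1_000_000


# Hourly H100 rate ranges quoted in the Key Details list (USD per hour).
PRICE_RANGES = {
    "spot": (1.50, 3.00),
    "hyperscaler on-demand": (6.00, 9.00),
}

for label, (low, high) in PRICE_RANGES.items():
    print(f"{label}: ${cost_per_million_tokens(low):.3f}"
          f" to ${cost_per_million_tokens(high):.3f} per million tokens")
```

Because the same assumed throughput divides every rate, the gap between spot and hyperscaler on-demand pricing (about 2x at the narrowest, 6x at the widest) carries through to cost per token unchanged; only the absolute dollar figures depend on the throughput assumption.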

Optimistic Outlook

Anthropic's compute strategy could lead to more cost-effective AI development, enabling faster innovation and potentially lower token costs for end-users. This diversification fosters a more resilient AI ecosystem, reducing dependency on a single supplier and encouraging broader hardware innovation across the industry.

Pessimistic Outlook

While Anthropic gains an advantage, the broader AI industry's reliance on Nvidia and the HBM bottleneck could stifle competition and innovation for other labs. If model quality isn't equivalent, compute advantage alone won't guarantee market leadership, potentially leading to a fragmented landscape where only a few well-resourced players can compete at the frontier.
