OpenAI's GPT-5.3-Codex-Spark Runs on Cerebras' WSE-3 Chip

Sonic Intelligence

The Gist

GPT-5.3-Codex-Spark, a model designed for real-time coding, runs on Cerebras' WSE-3 chip and is claimed to deliver significant gains in speed and performance.

Explain Like I'm Five

"Imagine your computer can now think and write code super fast, like a race car! That's because it has a special brain (Cerebras chip) and a super smart program (GPT-5.3-Codex-Spark) working together."

Deep Intelligence Analysis

Beyond the hardware, OpenAI reworked the request-response pipeline, adding persistent WebSockets and other stack-level latency improvements. These changes reportedly cut per-client roundtrip overhead by 80%, per-token overhead by 30%, and time-to-first-token by 50%.
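To make the reported percentages concrete, the sketch below applies them to a set of hypothetical baseline latencies. Only the 80%, 30%, and 50% figures come from the article. The baseline millisecond values and the helper names `apply_reduction` and `speedup_factor` are illustrative assumptions, not OpenAI measurements or APIs.

```python
# Back-of-envelope illustration of the reported pipeline improvements.
# The percentages come from the article; the baseline latencies below are
# HYPOTHETICAL placeholders, not measured OpenAI numbers.

REPORTED_REDUCTIONS_PCT = {
    "per_client_roundtrip_overhead": 80,
    "per_token_overhead": 30,
    "time_to_first_token": 50,
}


def apply_reduction(baseline_ms: float, reduction_pct: float) -> float:
    """Return the latency left after cutting `baseline_ms` by `reduction_pct` percent."""
    return baseline_ms * (100 - reduction_pct) / 100


def speedup_factor(reduction_pct: float) -> float:
    """An X% reduction in time equals a 1 / (1 - X/100) speedup for that component."""
    return 100 / (100 - reduction_pct)


if __name__ == "__main__":
    # Hypothetical baselines in milliseconds, chosen only to make the arithmetic concrete.
    hypothetical_baseline_ms = {
        "per_client_roundtrip_overhead": 100.0,
        "per_token_overhead": 10.0,
        "time_to_first_token": 400.0,
    }
    for name, pct in REPORTED_REDUCTIONS_PCT.items():
        before = hypothetical_baseline_ms[name]
        after = apply_reduction(before, pct)
        print(f"{name}: {before:.1f} ms -> {after:.1f} ms ({speedup_factor(pct):.2f}x faster)")
```

A cut of X% leaves (100 - X)% of the original time, so the 80% roundtrip reduction amounts to a 5x speedup for that component, while the 30% per-token cut is roughly 1.43x. End-to-end latency improves by less than the largest of these figures, because it is the sum of all the components.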

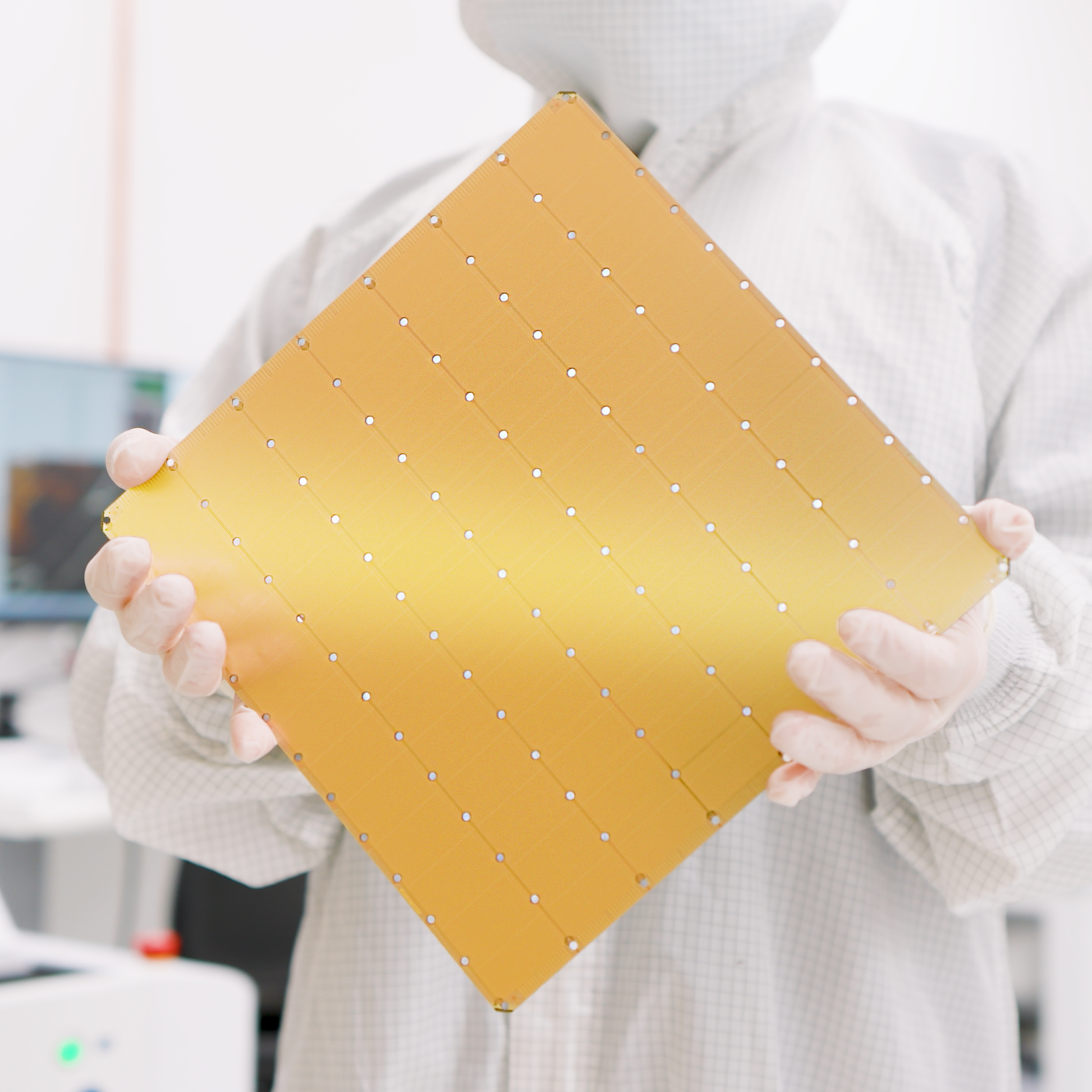

The Cerebras WSE-3 is described as the world’s largest AI processor for training and inference, with a die area of 46,255 mm² and 4 trillion transistors. It provides 125 petaflops of AI compute across 900,000 AI-optimized cores and is claimed to offer significantly more transistors and compute power than NVIDIA's B200.
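Dividing the quoted WSE-3 totals gives rough per-unit averages. This is a naive back-of-envelope sketch: it assumes transistors and compute are spread evenly across the die, which real chips do not do, so the resulting numbers are illustrative and are not figures published by Cerebras. The helper function names are hypothetical.

```python
# Naive averages derived from the headline WSE-3 figures quoted in the article.
# Illustrative divisions only: real chips do not spread transistors or compute
# evenly across the die, so these are NOT Cerebras-published metrics.

DIE_AREA_MM2 = 46_255
TRANSISTORS = 4_000_000_000_000   # 4 trillion, as quoted
PEAK_AI_FLOPS = 125e15            # 125 petaflops, as quoted
AI_CORES = 900_000


def average_transistor_density(transistors: int, area_mm2: float) -> float:
    """Transistors per square millimetre, averaged over the whole die."""
    return transistors / area_mm2


def average_flops_per_core(total_flops: float, cores: int) -> float:
    """Peak FLOP/s per core if the headline figure were split evenly across cores."""
    return total_flops / cores


if __name__ == "__main__":
    density = average_transistor_density(TRANSISTORS, DIE_AREA_MM2)
    per_core = average_flops_per_core(PEAK_AI_FLOPS, AI_CORES)
    print(f"~{density / 1e6:.1f} million transistors per mm^2")
    print(f"~{per_core / 1e9:.1f} GFLOP/s per core")
```

Run as a script, it prints about 86.5 million transistors per mm² and about 138.9 GFLOP/s per core.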

While some users are impressed with the model's speed and responsiveness, others question how useful ultra-fast models are for complex coding tasks. The technology appears particularly well suited to small code changes and quick questions about a codebase. Pairing OpenAI's model with Cerebras' hardware marks a significant step toward faster, more efficient AI-powered coding tools.

Transparency is paramount. This analysis was conducted by an AI, and human oversight ensures alignment with DailyAIWire's standards. For inquiries, contact our editorial team.

_Context: This intelligence report was compiled by the DailyAIWire Strategy Engine. Verified for Art. 50 Compliance._

Impact Assessment

The combination of OpenAI's model and Cerebras' hardware demonstrates a push towards faster, more efficient AI coding tools. This could accelerate software development and enable new real-time coding applications. The performance claims of the WSE-3 also highlight advancements in AI hardware.

Read the full story on Jackpearce.

Key Details

- GPT-5.3-Codex-Spark delivers over 1,000 tokens per second with a 128k context window.

- Cerebras' WSE-3 has a 46,255 mm² die and 4 trillion transistors, providing 125 petaflops of AI compute.

- OpenAI claims an 80% reduction in per-client roundtrip overhead.
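The throughput figure translates directly into wait times. The sketch below estimates how long a response takes to stream and whether a request fits in the context window. The 1,000 tokens-per-second and 128k figures come from the article; reading "128k" as exactly 128,000 tokens, counting generated output against the same window, and the 0.2 s time-to-first-token used in the example are assumptions, and the function names are hypothetical.

```python
# Rough response-time estimate for a throughput-bound coding model.
# 1,000 tokens/s and the 128k window come from the article; reading "128k" as
# 128,000 tokens and the example time-to-first-token are ASSUMPTIONS.

REPORTED_TOKENS_PER_SECOND = 1_000.0
ASSUMED_CONTEXT_WINDOW = 128_000  # assumption: "128k" read as 128,000 tokens


def estimated_response_seconds(output_tokens: int,
                               tokens_per_second: float = REPORTED_TOKENS_PER_SECOND,
                               ttft_seconds: float = 0.0) -> float:
    """Time to first token plus streaming time at a constant decode rate."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return ttft_seconds + output_tokens / tokens_per_second


def fits_in_context(prompt_tokens: int, output_tokens: int,
                    context_window: int = ASSUMED_CONTEXT_WINDOW) -> bool:
    """True if prompt and generated output together stay within the window (assumed shared)."""
    return prompt_tokens + output_tokens <= context_window


if __name__ == "__main__":
    # A hypothetical small edit: 300 output tokens with a hypothetical 0.2 s TTFT.
    print(f"{estimated_response_seconds(300, ttft_seconds=0.2):.2f} s")
    print(fits_in_context(prompt_tokens=120_000, output_tokens=4_000))
```

Under those assumptions, a hypothetical 300-token edit comes back in about 0.5 s. The estimate ignores network overhead and assumes a constant decode rate, so it is optimistic by design.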

Optimistic Outlook

Ultra-fast models like GPT-5.3-Codex-Spark could revolutionize coding workflows, enabling rapid prototyping and iterative development. The advancements in hardware, such as the WSE-3, pave the way for more powerful and efficient AI systems. This could lead to breakthroughs in various fields, including software engineering and AI research.

Pessimistic Outlook

The reliance on specialized hardware like the WSE-3 may limit accessibility and increase costs. Some users question the practical benefits of ultra-fast models for complex coding tasks. The mixed reactions suggest that the technology may not be universally beneficial or applicable.
