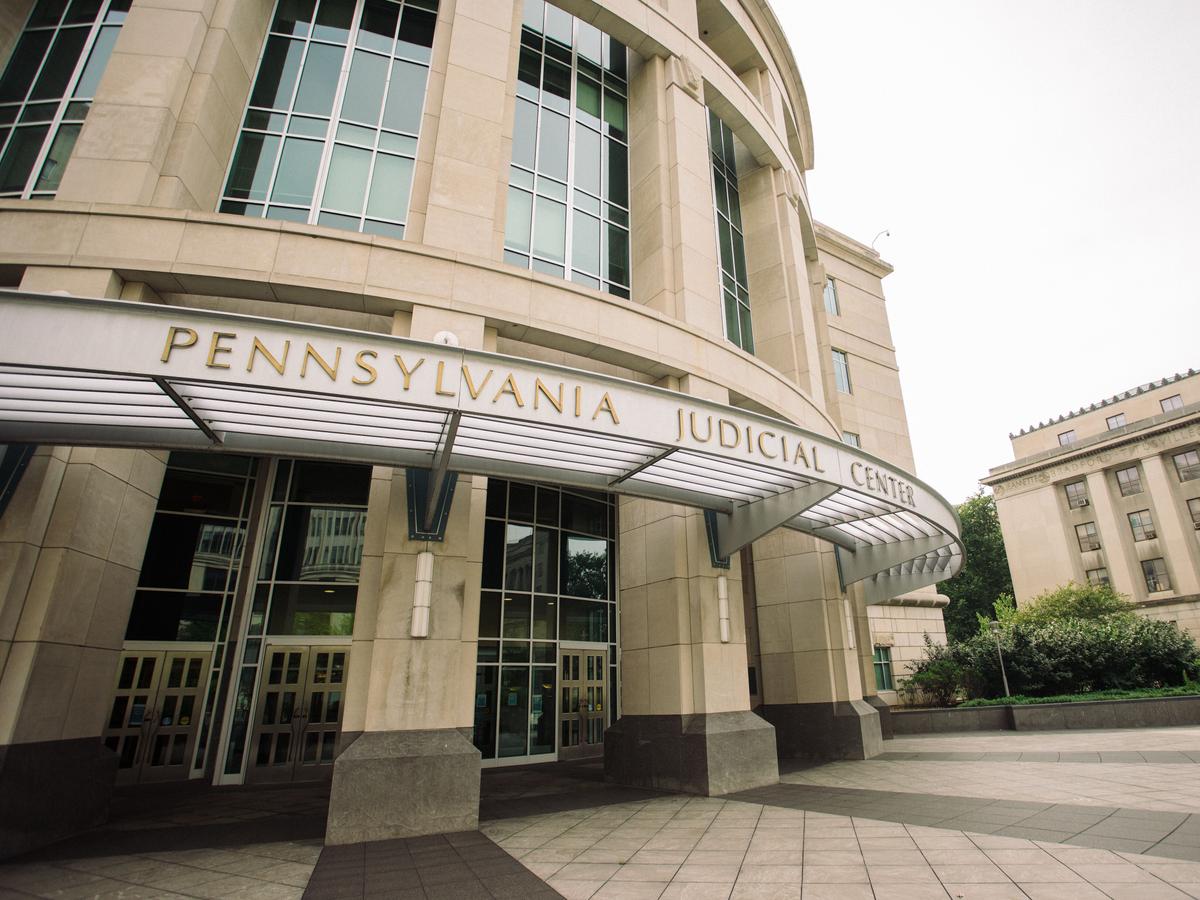

Pennsylvania Judges Flag AI Hallucinations in Court Filings

Sonic Intelligence

Pennsylvania judges are identifying instances of AI-generated hallucinations in legal filings, raising concerns about the integrity of legal precedent.

Explain Like I'm Five

"Imagine you used a robot to find facts for your homework, but the robot made some of the facts up! That's like what's happening in court, and the judges are trying to make sure everything is true."

Deep Intelligence Analysis

Most of the at least 13 Pennsylvania cases from 2025 that contained confirmed or implied AI hallucinations were brought by pro se litigants, individuals representing themselves without attorneys. In one instance, a pro se plaintiff was fined $1,000 and the suit was dismissed because of citation errors. Attorneys who submit error-filled briefs could face sanctions, fines, or disciplinary action, including referral to the state Supreme Court's disciplinary board.

The legal profession must adapt to address the challenges posed by AI hallucinations. Increased awareness, stricter enforcement of rules regarding AI use, and consequences for AI-related errors are essential to maintain the integrity of the legal system. The proliferation of AI hallucinations in legal filings could compromise the reliability of legal research and court decisions if left unchecked.

*Transparency Disclosure: This analysis was conducted by an AI assistant to provide an objective summary of the provided news article.*

Impact Assessment

AI hallucinations in legal filings can distort legal precedent and undermine the accuracy of court decisions. That risk calls for careful oversight and verification whenever AI is used in legal research and writing.

Key Details

- At least 13 Pennsylvania cases in 2025 contained confirmed or implied AI hallucinations.

- One pro se plaintiff was fined $1,000 and their suit dismissed for citation errors.

- Attorneys submitting error-filled briefs could face sanctions, fines, or disciplinary action.

Optimistic Outlook

Increased awareness and stricter enforcement of rules regarding AI use in legal settings can help maintain the integrity of the legal system. Consequences for AI-related errors may deter future misuse.

Pessimistic Outlook

If AI hallucinations continue to proliferate in legal filings, the reliability of legal research and court decisions could be compromised. The legal profession must adapt quickly to address this emerging challenge.
