Raspberry Pi 5 Gains LLM Capabilities with AI HAT+ 2, Featuring 40 TOPS Inference

Sonic Intelligence

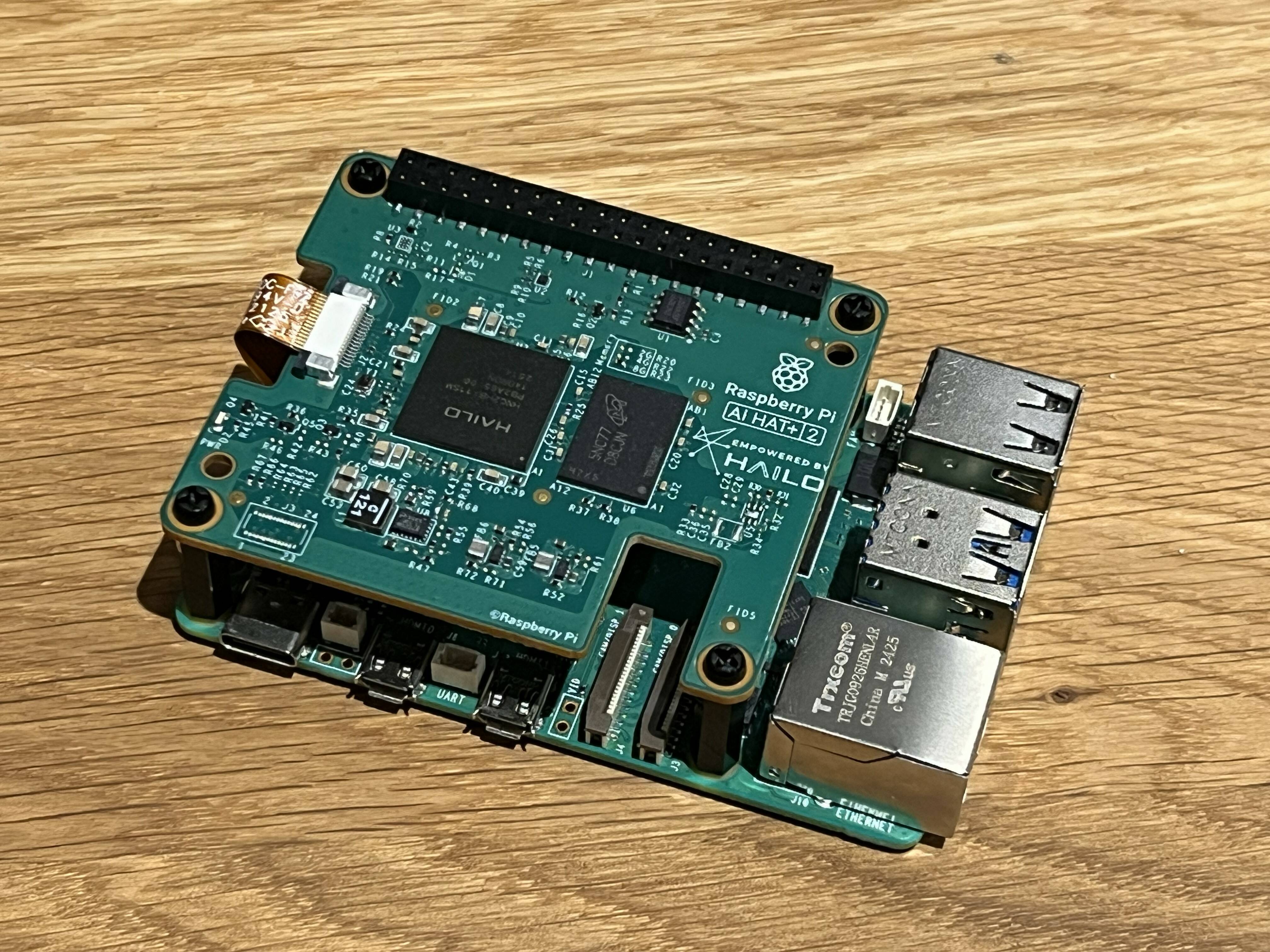

Raspberry Pi 5 gets 40 TOPS LLM acceleration via new AI HAT+ 2.

Explain Like I'm Five

"Imagine your tiny Raspberry Pi computer getting a super-smart brain upgrade! This new 'AI HAT+ 2' add-on makes it much faster at understanding and making sense of language, like a mini ChatGPT that works right on your desk, without needing the internet for big calculations."

Deep Intelligence Analysis

The technical specifications show a clear focus on accelerating LLM and VLM workloads. That focus is what separates the AI HAT+ 2 from its predecessor, since computer vision performance is comparable between the two (26 TOPS INT4). The 8 GB of onboard RAM is a welcome addition that keeps model data out of the Pi's main memory, but it remains a constraint: the largest, most memory-intensive LLMs often require hundreds of gigabytes or even terabytes of memory in cloud environments. For smaller, optimized models such as DeepSeek-R1-Distill, Llama3.2, and Qwen2 (roughly 1 to 1.5 billion parameters each), the AI HAT+ 2 provides a capable local execution environment, marking a strategic pivot towards practical, resource-constrained AI applications at the edge.
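The memory argument above can be checked with back-of-the-envelope arithmetic. The sketch below estimates the size of a model's weights alone at different numeric precisions and compares it with the 8 GB of onboard RAM. The function name and the list of model sizes are illustrative assumptions, not part of any Raspberry Pi or Hailo tooling. The estimate also ignores the KV cache, activations, and runtime overhead, so real requirements will be higher.

```python
# Rough estimate of LLM weight storage versus the AI HAT+ 2's onboard RAM.
# Assumption: weights only; KV cache, activations and runtime overhead are ignored.

ONBOARD_RAM_GB = 8  # figure quoted in the article


def weight_footprint_gb(params_billions: float, bits_per_weight: int) -> float:
    """Approximate size of the model weights in decimal gigabytes."""
    total_bytes = params_billions * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


if __name__ == "__main__":
    # Hypothetical model sizes chosen for illustration only.
    for params in (1.5, 7.0, 70.0):
        for bits in (16, 8, 4):
            size = weight_footprint_gb(params, bits)
            verdict = "fits" if size < ONBOARD_RAM_GB else "does not fit"
            print(f"{params:>5.1f}B params @ {bits:>2}-bit: {size:6.2f} GB ({verdict} in {ONBOARD_RAM_GB} GB)")
```

Under these assumptions, a 1.5-billion-parameter model needs about 0.75 GB for its weights at 4-bit precision and about 3 GB at 16-bit, which leaves headroom in 8 GB. A 70-billion-parameter model needs about 35 GB even at 4-bit, which shows why the onboard RAM limits the board to smaller models.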

Looking forward, the AI HAT+ 2 positions the Raspberry Pi 5 as a compelling platform for developers and researchers exploring offline AI agents, intelligent sensors, and custom industrial automation solutions. The ability to run generative AI locally fosters greater experimentation and deployment flexibility, especially in environments with limited or no internet connectivity. While the question of 'who needs this' remains pertinent for general users, its value proposition for specialized industrial and academic applications requiring on-device LLM processing is substantial. This development underscores a broader industry trend towards decentralizing AI compute, pushing intelligence closer to the data source and enabling a new class of intelligent edge devices.

Visual Intelligence

flowchart LR
    A["Raspberry Pi 5"] --> B["AI HAT+ 2 Attached"]
    B --> C["Hailo-10H Accelerator"]
    C --> D["40 TOPS Inference"]
    D --> E["8 GB Onboard RAM"]
    E --> F["Local LLM Processing"]
    F --> G["Edge AI Applications"]

Auto-generated diagram · AI-interpreted flow

Impact Assessment

The AI HAT+ 2 significantly boosts the Raspberry Pi 5's capability for local AI inference, especially for LLMs. This enables powerful edge AI applications, reducing reliance on cloud computing and fostering innovation in embedded AI systems for robotics, IoT, and industrial use cases.

Key Details

- Raspberry Pi launched the AI HAT+ 2 for Raspberry Pi 5.

- It features the Hailo-10H neural network accelerator.

- The HAT+ 2 delivers 40 TOPS (INT4) of inference performance.

- It includes 8 GB of onboard RAM.

- Supports LLMs, VLMs, and other generative AI applications locally.

- Computer vision performance is approximately 26 TOPS (INT4), similar to the previous AI HAT+.

Optimistic Outlook

This hardware upgrade democratizes access to local LLM processing, empowering developers to create sophisticated edge AI solutions without cloud latency or cost. It could accelerate innovation in offline AI agents, smart devices, and educational robotics, fostering a new generation of embedded AI applications.

Pessimistic Outlook

Despite the 40 TOPS of inference performance, the 8 GB of onboard RAM may still be a bottleneck for larger LLMs, limiting the board's usefulness for more complex models. Its computer vision performance is similar to that of its predecessor, and cheaper alternatives exist for vision-only tasks, which raises questions about its value for those use cases.
