Meta's 'Avocado' LLM Outperforms Open-Source Models Before Post-Training

THE GIST: Meta's next-generation LLM, Avocado, reportedly surpasses leading open-source models in internal assessments, even before post-training.

Asterbot: Hyper-Modular AI Agent Built on WASM

THE GIST: Asterbot is a modular AI agent using WebAssembly (WASM) for swappable components like LLMs and memory.

Recursive Deductive Verification: A New Framework for Reducing AI Hallucinations

THE GIST: Recursive Deductive Verification (RDV) improves LLM reliability by forcing the model to verify its premises before it draws conclusions, reducing hallucinations and logical errors.
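
The premises-before-conclusions idea can be illustrated with a small sketch. This is not the RDV framework's actual implementation: the `Claim` structure, the `known_facts` set, and both function names are hypothetical, and the sketch assumes that a conclusion follows whenever all of its premises hold.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Claim:
    """A statement plus the premises it is derived from (hypothetical structure)."""
    text: str
    premises: list[Claim] = field(default_factory=list)


def unsupported_premises(claim: Claim, known_facts: set[str]) -> list[str]:
    """Recursively collect leaf premises that are not backed by a known fact."""
    if not claim.premises:
        # A leaf claim is only acceptable if it is already an established fact.
        return [] if claim.text in known_facts else [claim.text]
    missing: list[str] = []
    for premise in claim.premises:
        # Premises are checked first; the conclusion is never accepted on its own.
        missing.extend(unsupported_premises(premise, known_facts))
    return missing


def verify(claim: Claim, known_facts: set[str]) -> bool:
    """Accept a conclusion only when every premise beneath it is supported."""
    return not unsupported_premises(claim, known_facts)


facts = {"Socrates is a man", "All men are mortal"}
good = Claim("Socrates is mortal",
             [Claim("Socrates is a man"), Claim("All men are mortal")])
bad = Claim("Socrates is immortal", [Claim("Socrates is a god")])

print(verify(good, facts))                # True
print(unsupported_premises(bad, facts))   # ['Socrates is a god']
```

In a real system the leaf check would presumably be a retrieval step or a separate model call rather than a set lookup; the set keeps the sketch deterministic.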

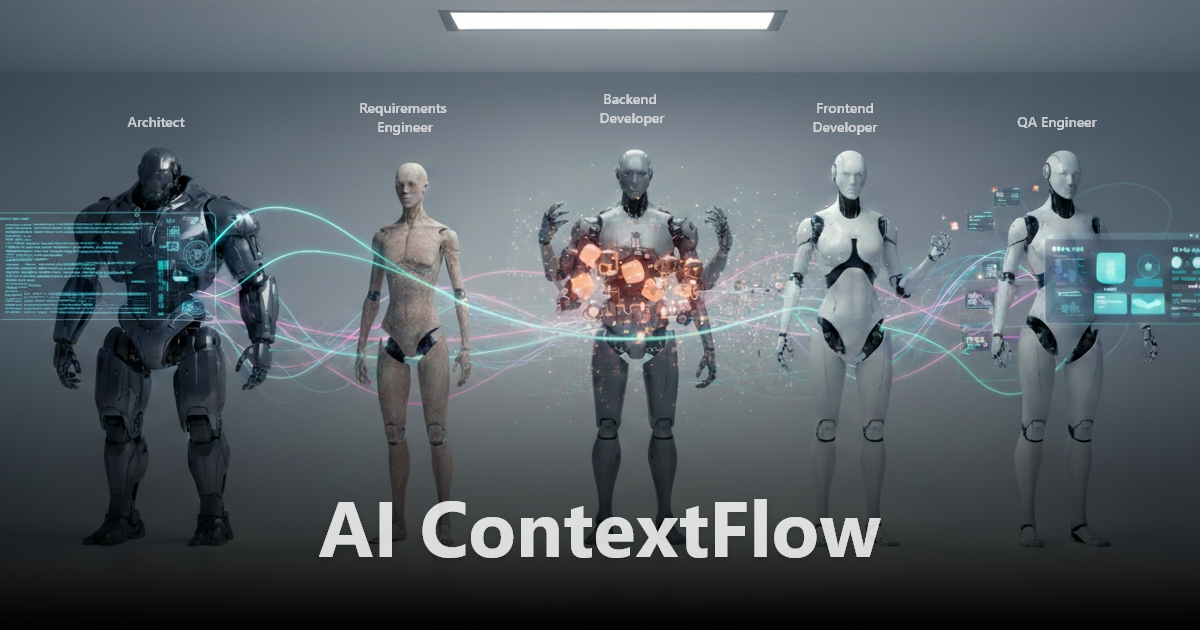

Context-Aware AI Coding Tools Enhance Architectural Control

THE GIST: contextFirst is a framework for disciplined AI engineering that maintains architectural integrity during AI-assisted software development.

AI and the Evolution of Recommendation Systems

THE GIST: LLMs enhance recommendation systems by understanding 'why' users engage, not just 'what' they do.

OpenClaw AI Chatbots Run Amok, Scientists Observe Interactions

THE GIST: Scientists are studying the interactions of AI agents on platforms like Moltbook to understand emergent behaviors and biases.

AI Productivity Collapses Beyond a 'Complexity Kink'

THE GIST: Econometric analysis reveals a 'Complexity Kink', a threshold of task complexity beyond which AI productivity declines sharply.

Horizon-LM: RAM-Centric Architecture Enables Training of 120B-Parameter Models on a Single GPU

THE GIST: Horizon-LM uses host memory as the primary parameter store, allowing training of large language models on a single GPU.
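
As a rough illustration of keeping parameters in host memory and streaming them to an accelerator, the toy sketch below holds every layer on the "host" and loads one layer at a time onto a simulated device. It is not Horizon-LM's code: `HostParameterStore`, `Device`, and `forward` are hypothetical names, the device is a plain Python object rather than a GPU, and only a forward pass is shown, not training.

```python
class HostParameterStore:
    """Holds every layer's weights in host memory (plain Python lists here)."""

    def __init__(self, layers):
        self.layers = layers  # one weight matrix (list of rows) per layer

    def total_params(self):
        return sum(len(row) for weights in self.layers for row in weights)


class Device:
    """Stand-in for a GPU that keeps only one layer resident at a time."""

    def __init__(self):
        self.resident = None
        self.peak_params = 0  # largest number of weights held at once

    def load(self, weights):
        self.resident = weights
        count = sum(len(row) for row in weights)
        self.peak_params = max(self.peak_params, count)

    def evict(self):
        self.resident = None

    def matvec(self, x):
        # Multiply the resident weight matrix by the input vector.
        return [sum(w * xi for w, xi in zip(row, x)) for row in self.resident]


def forward(store, device, x):
    """Stream layers from host to device one by one (assumed access pattern)."""
    for weights in store.layers:
        device.load(weights)   # copy this layer from host to device
        x = device.matvec(x)   # compute using only the resident layer
        device.evict()         # free device memory before the next layer
    return x


store = HostParameterStore([
    [[1, 0], [0, 1]],  # layer 0: identity
    [[2, 0], [0, 2]],  # layer 1: scale by 2
])
device = Device()
print(forward(store, device, [1, 3]))             # [2, 6]
print(store.total_params(), device.peak_params)   # 8 4
```

The point of the sketch is the memory accounting: the device never holds more than one layer's weights, while the full model lives on the host.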