Agyn: Multi-Agent System Achieves 72.4% Issue Resolution on SWE-bench

THE GIST: Agyn, a multi-agent system, models software engineering as a collaborative team activity and resolves 72.4% of issues on SWE-bench.

Toroidal Logit Bias Reduces LLM Hallucinations by 40% Without Fine-Tuning

THE GIST: New research demonstrates that constraining LLM latent dynamics with toroidal geometry reduces hallucinations by 40% without requiring fine-tuning.

KV Cache Transform Coding: Compressing LLM Inference for Efficient Storage

THE GIST: KVTC, a new transform coder, compresses key-value caches in LLMs by up to 20x, enabling efficient on-GPU and off-GPU storage without retraining.

AI Agents Struggle with Real-World Workplace Tasks

THE GIST: A new benchmark, APEX-Agents, reveals that current AI models struggle with complex, multi-domain tasks common in white-collar jobs.

Control Layer for AI: Constraining LLM Output for Safety and Compliance

THE GIST: A new approach compiles constraints directly into the LLM decoding loop, ensuring outputs adhere to predefined rules and policies.
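
To illustrate the general idea of enforcing rules inside the decoding loop, here is a minimal sketch of logit masking during greedy decoding. It is not the method from the article: `score_fn`, `allowed_fn`, and the toy vocabulary are hypothetical stand-ins for a real model and a real policy.

```python
import math

def constrained_greedy_decode(score_fn, allowed_fn, max_steps=10, eos="<eos>"):
    """Greedy decoding that masks out disallowed tokens before each pick.

    score_fn(prefix)   -> dict of token -> logit (hypothetical model stand-in)
    allowed_fn(prefix) -> set of tokens the policy permits next (hypothetical)
    """
    output = []
    for _ in range(max_steps):
        scores = score_fn(output)
        allowed = allowed_fn(output)
        # Disallowed tokens get -inf, so they can never be selected.
        masked = {t: (s if t in allowed else -math.inf) for t, s in scores.items()}
        token = max(masked, key=masked.get)
        if masked[token] == -math.inf:
            raise ValueError("policy allows no token at this step")
        if token == eos:
            break
        output.append(token)
    return output

# Toy model: always prefers "maybe", regardless of prefix.
def toy_scores(prefix):
    return {"maybe": 3.0, "yes": 2.0, "no": 1.0, "<eos>": 0.0}

# Toy policy: the answer must be a single "yes" or "no".
def toy_allowed(prefix):
    return {"yes", "no"} if not prefix else {"<eos>"}

result = constrained_greedy_decode(toy_scores, toy_allowed)
```

Although the toy model scores "maybe" highest, the mask removes it, so the decoder emits the best permitted token instead. The rule is applied at every step rather than checked after generation.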

Claude Opus 4.6 vs. GPT-5.3-Codex: A Philosophical AI Showdown

THE GIST: Anthropic's Claude Opus 4.6 and OpenAI's GPT-5.3-Codex represent distinct philosophies in AI development: autonomous delegation vs. human-in-the-loop steering.

AI Agent Legal Capabilities Surge with Anthropic's Opus 4.6

THE GIST: Anthropic's Opus 4.6 significantly improved AI agent performance on legal tasks, according to Mercor's benchmark.

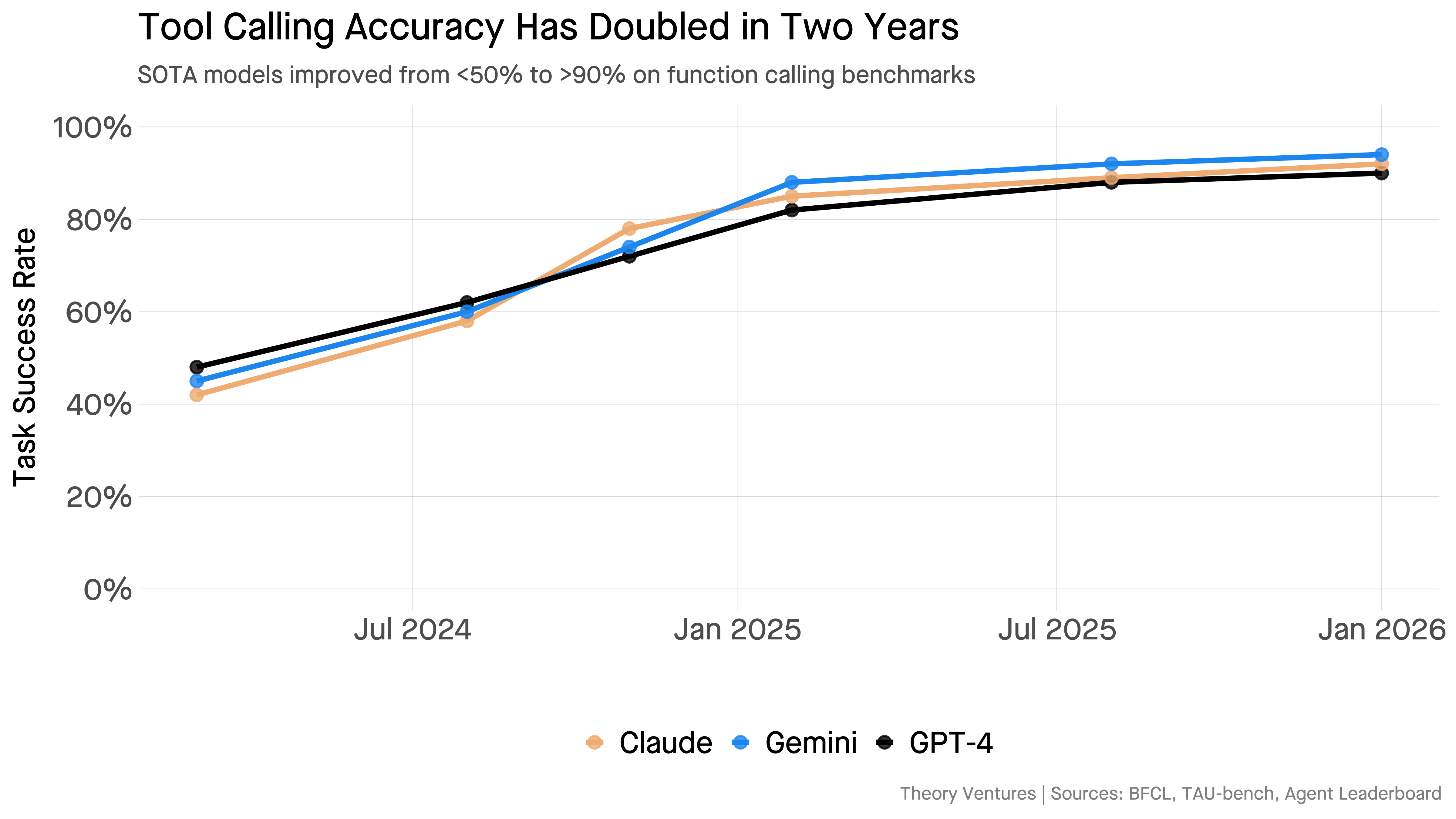

AI Models Now Managing Other AI Models

THE GIST: AI models are increasingly managing other AI models, driven by improved tool-calling accuracy.