Mitigating AI Agent Attack Surfaces with Process-Scoped Credentials

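THE GIST: AI agents inherit the permissions and credentials of the shell environment that launches them, so a prompt injection can lead to data theft or remote code execution; scoping credentials to the individual process narrows that attack surface.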
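As a rough illustration of the idea in this gist, the sketch below launches a child process with a minimal environment instead of the full shell environment. It is not taken from the linked article: the names `SAFE_VARS`, `scoped_env`, `run_agent`, and `AGENT_TOKEN` are hypothetical, and the allowlist assumes a POSIX-like system.

```python
import os
import subprocess
import sys

# Hypothetical allowlist: the only inherited variables the agent process may see.
SAFE_VARS = ("PATH", "HOME", "LANG")


def scoped_env(extra=None, base=None):
    """Build a minimal environment rather than passing the whole shell env."""
    base = os.environ if base is None else base
    env = {name: base[name] for name in SAFE_VARS if name in base}
    env.update(extra or {})  # credentials granted to this one process only
    return env


def run_agent(cmd, credentials):
    """Run cmd so it sees only allowlisted variables plus its own credentials."""
    return subprocess.run(
        cmd,
        env=scoped_env(credentials),
        capture_output=True,
        text=True,
        check=False,
    )


if __name__ == "__main__":
    # The child prints the variable names it can see; parent secrets are absent.
    probe = [sys.executable, "-c", "import os; print(sorted(os.environ))"]
    print(run_agent(probe, {"AGENT_TOKEN": "short-lived-token"}).stdout)
```

Under this assumption, a secret exported in the parent shell never reaches the agent, and the one token it does receive can be short-lived and narrowly scoped.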

The Security Risks of AI Assistants Like OpenClaw

THE GIST: AI assistants, like the viral OpenClaw, pose significant security risks due to their access to sensitive user data and potential vulnerabilities.

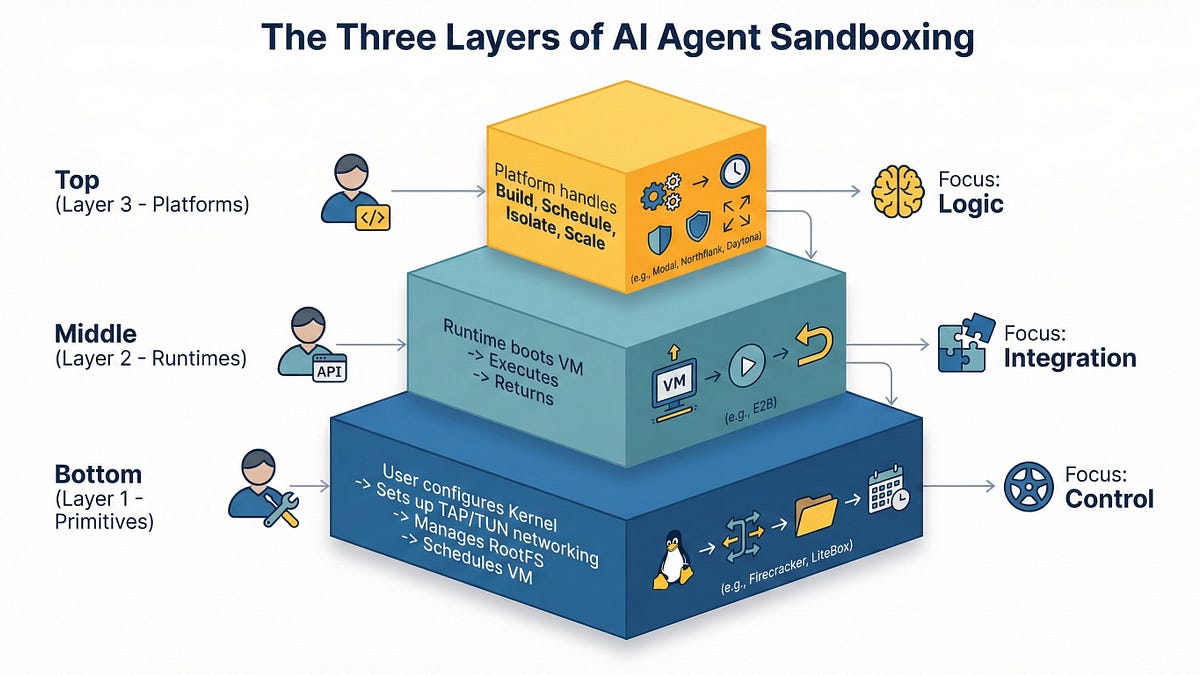

AI Agent Sandboxing: Navigating Primitives, Runtimes, and Platforms in 2026

THE GIST: In 2026, sandboxing AI agents means choosing carefully among primitives, runtimes, and managed platforms, because agents execute untrusted code.

Rampart: Open-Source Security for Claude and AI Agents

THE GIST: Rampart is an open-source tool providing security and control for AI agents by evaluating tool calls against user-defined policies.
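To make "evaluating tool calls against user-defined policies" concrete, here is a minimal first-match policy check. It is a generic sketch, not Rampart's actual API or policy format: `Rule`, `evaluate`, and the sample `POLICY` are all hypothetical.

```python
from dataclasses import dataclass
from fnmatch import fnmatchcase


@dataclass(frozen=True)
class Rule:
    tool: str      # glob for the tool name, e.g. "shell"
    pattern: str   # glob matched against the tool's argument string
    action: str    # "allow" or "deny"


def evaluate(rules, tool, argument, default="deny"):
    """Return the action of the first matching rule, or the default."""
    for rule in rules:
        if fnmatchcase(tool, rule.tool) and fnmatchcase(argument, rule.pattern):
            return rule.action
    return default


# Hypothetical policy: order matters, and unmatched calls are denied.
POLICY = [
    Rule("shell", "rm -rf *", "deny"),
    Rule("shell", "git *", "allow"),
    Rule("read_file", "*.env", "deny"),
    Rule("read_file", "*", "allow"),
]

if __name__ == "__main__":
    for call in [("shell", "git status"), ("read_file", "config/.env")]:
        print(call, evaluate(POLICY, *call))
```

Denying by default means a tool the policy author never anticipated is blocked rather than silently permitted.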

SatGate: An Economic Firewall for AI Agent Traffic

THE GIST: SatGate is an open-source API gateway that enforces economic governance for AI agents, preventing uncontrolled spending.
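One way to picture spending controls of this kind is a per-agent budget that a gateway checks before forwarding each request. The sketch below is an assumption for illustration only and does not reflect SatGate's implementation; `Budget`, `BudgetExceeded`, and the cent-based costs are hypothetical.

```python
class BudgetExceeded(Exception):
    """Raised when a request would push spending past the limit."""


class Budget:
    def __init__(self, limit_cents):
        self.limit_cents = limit_cents
        self.spent_cents = 0

    def charge(self, cost_cents):
        """Record a request's cost, or refuse it if the limit would be exceeded."""
        if cost_cents < 0:
            raise ValueError("cost must be non-negative")
        if self.spent_cents + cost_cents > self.limit_cents:
            raise BudgetExceeded(
                f"request of {cost_cents} cents exceeds remaining "
                f"{self.limit_cents - self.spent_cents} cents"
            )
        self.spent_cents += cost_cents
        return self.limit_cents - self.spent_cents  # remaining budget


if __name__ == "__main__":
    budget = Budget(limit_cents=100)
    print(budget.charge(60))  # remaining budget after the first request
    try:
        budget.charge(50)     # would overspend, so it is refused
    except BudgetExceeded as err:
        print("blocked:", err)
```

A refused request leaves the recorded spend unchanged, so a smaller request can still succeed afterwards.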

NumaSec: Open-Source AI Agent for Autonomous Penetration Testing

THE GIST: NumaSec is an open-source AI agent that autonomously performs multi-stage exploits for penetration testing, requiring no security expertise or configuration.

LLM Cracks Anthropic's 'Anonymous' Interview Data

THE GIST: Researchers used LLMs to de-anonymize Anthropic's supposedly anonymous interview data, raising data privacy concerns.

AI Agents in Infrastructure: A Security Nightmare Waiting to Happen

THE GIST: AI agents with broad infrastructure access pose significant security risks because they are susceptible to prompt injection and lack human judgment.