AI Agent Sandboxing: Navigating Primitives, Runtimes, and Platforms in 2026

Sonic Intelligence

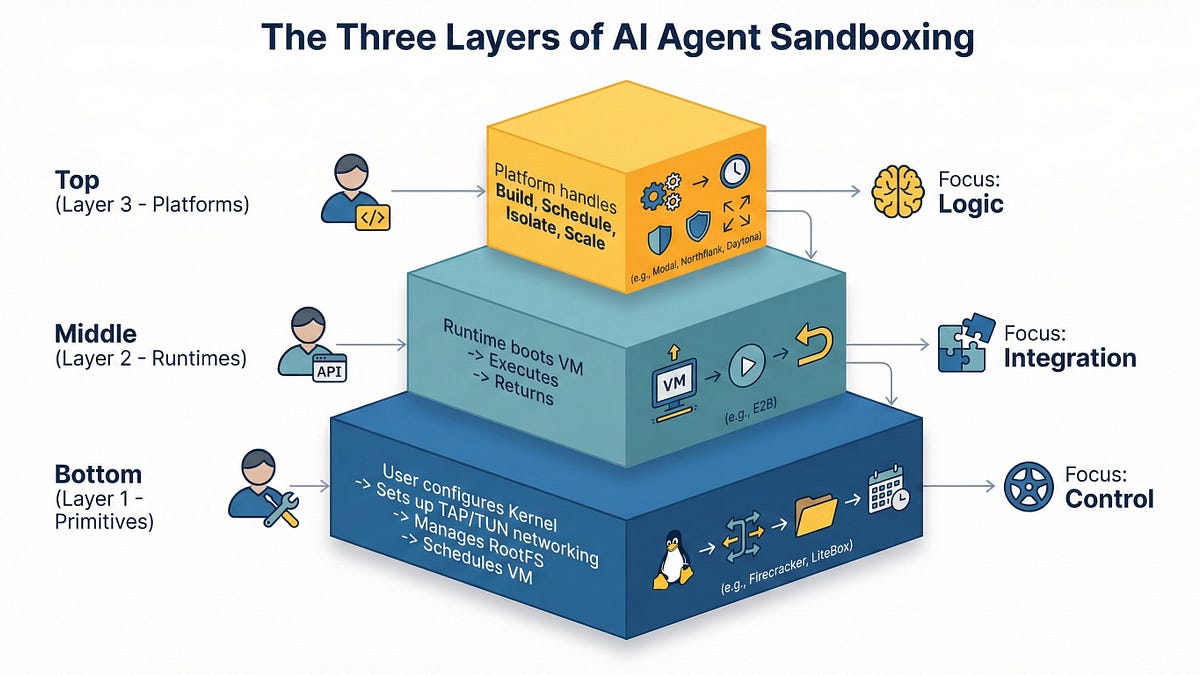

In 2026, AI agent sandboxing requires careful selection among primitives, runtimes, and managed platforms because of the risks of executing untrusted code.

Explain Like I'm Five

"Imagine AI agents are like kids playing with toys. Sandboxes are like special play areas that keep them from making a mess or breaking things in the real world. Some sandboxes are simple, while others are super secure, but they might slow the kids down a bit."

Deep Intelligence Analysis

The shift towards hardware-enforced isolation by major cloud providers underscores the growing concern over shared-kernel vulnerabilities. As AI agents gain the ability to write and execute code, the attack surface expands, necessitating stronger security boundaries. The article's structured overview of the sandboxing ecosystem provides valuable guidance for engineering leaders seeking to enhance their security posture.
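Firecracker, one of the primitives listed under Key Details, is a concrete example of hardware-enforced isolation. The minimal sketch below assumes a KVM-capable Linux host and builds the JSON file Firecracker accepts through its `--config-file` flag. The kernel and root filesystem paths, the socket path, and the vCPU/memory sizes are placeholders, not values from the article.

```python
import json


def firecracker_config(kernel: str, rootfs: str, vcpus: int = 1, mem_mib: int = 512) -> dict:
    """Build a Firecracker --config-file payload for one single-disk microVM."""
    return {
        "boot-source": {
            "kernel_image_path": kernel,
            # Minimal guest kernel args: serial console, reboot on panic, no PCI bus.
            "boot_args": "console=ttyS0 reboot=k panic=1 pci=off",
        },
        "drives": [
            {
                "drive_id": "rootfs",
                "path_on_host": rootfs,
                "is_root_device": True,
                "is_read_only": False,
            }
        ],
        # Guest resources: the agent's code sees only these vCPUs and this memory.
        "machine-config": {"vcpu_count": vcpus, "mem_size_mib": mem_mib},
    }


if __name__ == "__main__":
    # Placeholder paths: supply your own uncompressed kernel and ext4 root filesystem.
    cfg = firecracker_config("vmlinux.bin", "agent-rootfs.ext4")
    print(json.dumps(cfg, indent=2))
    # Save the output as vm_config.json, then on the host:
    #   firecracker --api-sock /tmp/fc.sock --config-file vm_config.json
```

Each microVM boots its own guest kernel. Code that compromises the guest must still get past the KVM and virtual machine monitor boundary to reach the host kernel, which a shared-kernel container does not provide.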

The five levels of sandbox security, ranging from basic containers to full virtualization, illustrate the spectrum of available options. Choosing a level means weighing performance overhead against security requirements. While managed platforms offer zero-ops scaling and access to GPUs, they may also introduce vendor lock-in and language constraints. The article is EU AI Act Art. 50 compliant (Article 50 sets transparency obligations for AI-generated content).
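The five levels are not spelled out in this summary. The sketch below illustrates one step up from a plain runc container. It is an assumption, not the article's recipe. It builds a `docker run` command that swaps in gVisor's `runsc` runtime and drops common privileges. It assumes Docker is installed with `runsc` registered as a runtime. The image, memory limit, and PID limit are placeholders.

```python
import shlex


def sandboxed_run_argv(image: str, command: list[str], runtime: str = "runsc",
                       memory: str = "512m", pids: int = 128) -> list[str]:
    """Build a `docker run` argv that replaces runc with gVisor and drops privileges."""
    return [
        "docker", "run", "--rm",
        f"--runtime={runtime}",             # gVisor intercepts syscalls in a user-space kernel
        "--network=none",                   # no network unless the agent task needs it
        "--read-only",                      # immutable root filesystem
        "--cap-drop=ALL",                   # drop all Linux capabilities
        "--security-opt=no-new-privileges", # block privilege escalation via setuid binaries
        f"--memory={memory}",               # cap memory for runaway agent code
        f"--pids-limit={pids}",             # cap process count (fork bombs)
        image, *command,
    ]


if __name__ == "__main__":
    argv = sandboxed_run_argv("python:3.12-slim", ["python", "-c", "print(2 + 2)"])
    print(shlex.join(argv))
    # To execute (requires Docker with runsc configured):
    #   subprocess.run(argv, check=True, timeout=30)
```

gVisor keeps the container workflow but serves most system calls from its own user-space kernel. It trades some performance on syscall-heavy workloads for a smaller host-kernel attack surface, which is the overhead-versus-security trade-off described above.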

Impact Assessment

AI agents executing arbitrary code pose significant security risks. Choosing the right sandboxing approach is crucial for protecting systems and data from malicious or unintended actions.

Key Details

- Shared-kernel container isolation (Docker/runc) is insufficient for untrusted AI agent code in 2026.

- The market has split into three layers: Primitives, Embeddable Runtimes, and Managed Platforms.

- Primitives (Firecracker/gVisor/LiteBox) offer maximum control.

- Embeddable runtimes (E2B, microsandbox) provide the fastest path to ephemeral code execution.

- Managed platforms (Daytona, Modal, Northflank) are best for data-heavy workloads and GPU access.
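The three-layer split can be read as a rough decision rule. The sketch below encodes these bullets as a hypothetical `pick_layer` helper. The `Workload` field names and the precedence (GPU or data-heavy needs win over a desire for full control) are assumptions for illustration, not a rule stated in the article.

```python
from dataclasses import dataclass
from enum import Enum


class Layer(Enum):
    PRIMITIVE = "primitive (Firecracker, gVisor, LiteBox)"
    EMBEDDABLE_RUNTIME = "embeddable runtime (E2B, microsandbox)"
    MANAGED_PLATFORM = "managed platform (Daytona, Modal, Northflank)"


@dataclass
class Workload:
    # Hypothetical requirement flags, not taken from any vendor API.
    needs_gpu: bool = False
    data_heavy: bool = False
    needs_full_control: bool = False  # e.g. own kernel images, networking, scheduling


def pick_layer(w: Workload) -> Layer:
    """Map workload needs to a sandbox layer (assumed precedence: GPU/data first)."""
    if w.needs_gpu or w.data_heavy:
        return Layer.MANAGED_PLATFORM    # GPU access and data-heavy jobs
    if w.needs_full_control:
        return Layer.PRIMITIVE           # maximum control, most operational work
    return Layer.EMBEDDABLE_RUNTIME      # fastest path to ephemeral code execution


if __name__ == "__main__":
    print(pick_layer(Workload()).value)                # embeddable runtime (E2B, microsandbox)
    print(pick_layer(Workload(needs_gpu=True)).value)  # managed platform (Daytona, Modal, Northflank)
```

In practice the flags interact. For example, a GPU job that also needs custom networking may push a team back toward primitives. Treat the precedence here as a starting point, not a verdict.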

Optimistic Outlook

The proliferation of sandboxing options indicates a maturing ecosystem with solutions tailored to various needs. Hybrid approaches like Google Agent Sandbox offer flexibility for teams already using Kubernetes.

Pessimistic Outlook

The complexity of the sandboxing landscape can be overwhelming, potentially leading to misconfigurations and vulnerabilities. Vendor lock-in and language constraints are also potential concerns with managed platforms.
